What you will learn

Over the next few minutes, you’ll see why “carbon math” has moved from sustainability reports into the core of engineering accountability. Regulatory pressure, board-level ESG targets, and explosive cloud scale are converging on a single expectation: prove the impact of every workload. We will break down the new reporting frameworks, translate kilowatt-hours into familiar DevOps metrics, and show where cloud computing, cloud security, and cloud compliance intersect with low-carbon delivery in real production environments. Two short, data-backed case studies turn theory into numbers, while a one-minute summary distills the essentials for time-pressed technical and executive stakeholders.

The 2026 regulation cliff is real

The Corporate Sustainability Reporting Directive (CSRD) in Europe and the SEC’s Climate Disclosure Rules in the United States both reach full enforcement in 2026. Large enterprises must publish third-party-audited emissions data that covers IT electricity: power drawn by on-prem workloads falls under scope 2, while emissions from purchased public cloud services fall under scope 3.

Public cloud growth amplifies the challenge. Gartner forecasts that worldwide end-user spending on public cloud services will reach $723.4 billion in 2025, a 21.5% increase over 2024. More workloads mean more electricity and, unless efficiency rises, more carbon.

Hybrid architectures complicate tracking. Gartner projects that 90% of organizations will run hybrid cloud by 2027. Each additional region, provider, or on-prem cluster introduces another data source that auditors will expect to see in a carbon ledger.

The takeaway: compliance teams will knock on the engineering door asking for emission telemetry as granular as a Kubernetes pod. Delivering that is impossible without automating collection and translating resource data into carbon estimates. For a perspective on staying compliant when rules change faster than code, see What Makes ‘Cloud Technologies’ Different in 2025?
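One common way to reach pod-level granularity is to apportion a node's metered energy to its pods by CPU share and then apply a regional emission factor. The sketch below assumes that proportional-CPU rule, a hypothetical `PodUsage` type, and made-up numbers; real tools may also weigh memory, idle power, and data-center overhead (PUE).

```python
from dataclasses import dataclass

@dataclass
class PodUsage:
    name: str
    cpu_seconds: float  # CPU time the pod consumed in the reporting window

def allocate_pod_emissions(node_kwh, pods, grams_co2_per_kwh):
    """Split a node's energy across pods by CPU share; return grams CO2e per pod.

    Assumption: all node energy, including idle draw, is attributed to
    active pods in proportion to their CPU-seconds.
    """
    total_cpu = sum(p.cpu_seconds for p in pods)
    if total_cpu == 0:
        return {p.name: 0.0 for p in pods}
    return {
        p.name: node_kwh * (p.cpu_seconds / total_cpu) * grams_co2_per_kwh
        for p in pods
    }

# Hypothetical numbers: a node that used 12 kWh in a region at 400 gCO2e/kWh.
pods = [PodUsage("api", 3000), PodUsage("worker", 1000)]
print(allocate_pod_emissions(12.0, pods, 400.0))
# {'api': 3600.0, 'worker': 1200.0}
```

Because the shares always sum to one, the per-pod figures add back up to the node total, which keeps the pod ledger reconcilable with node-level meter readings.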

FinOps and GreenOps: two sides of the same coin

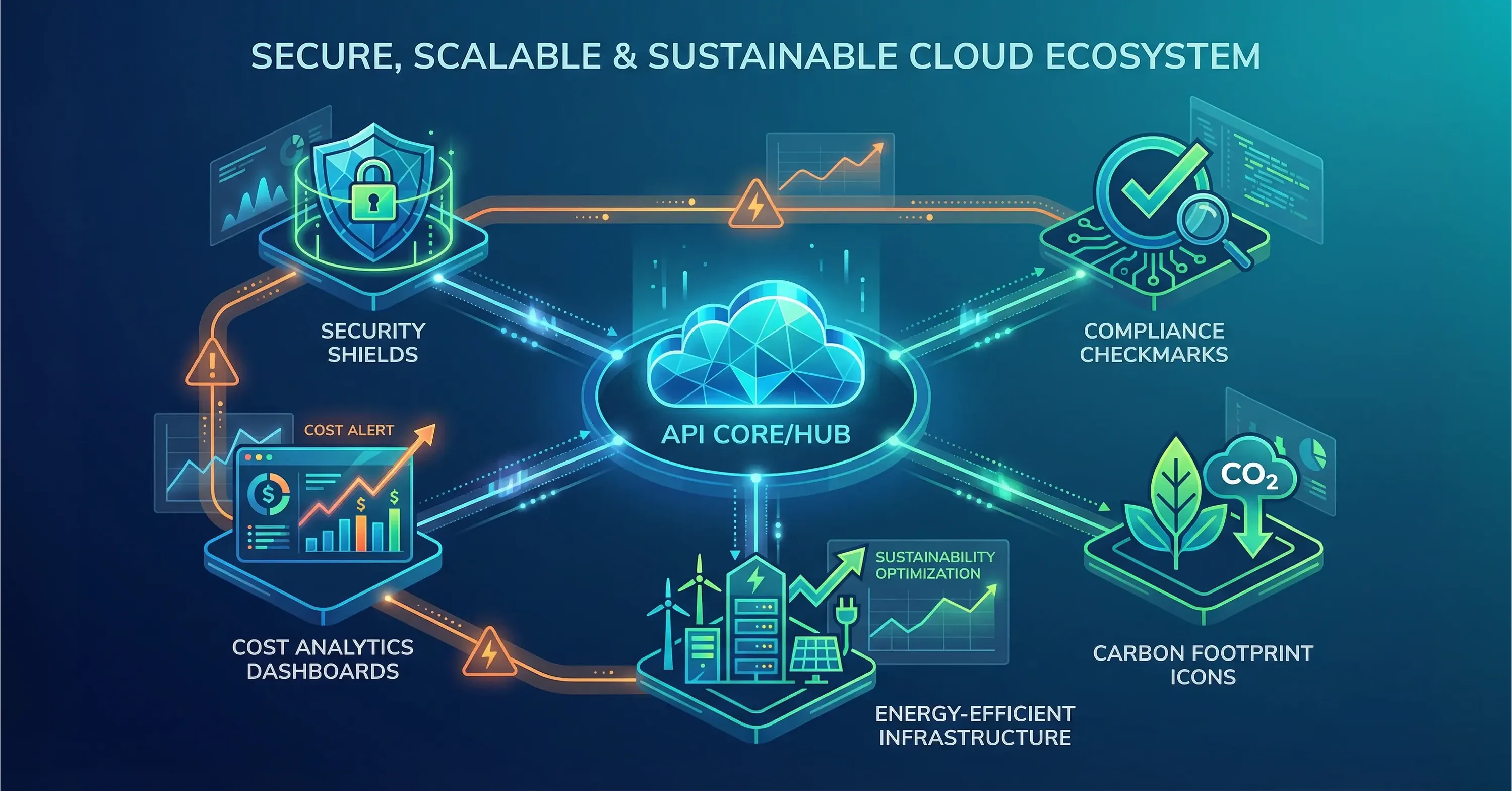

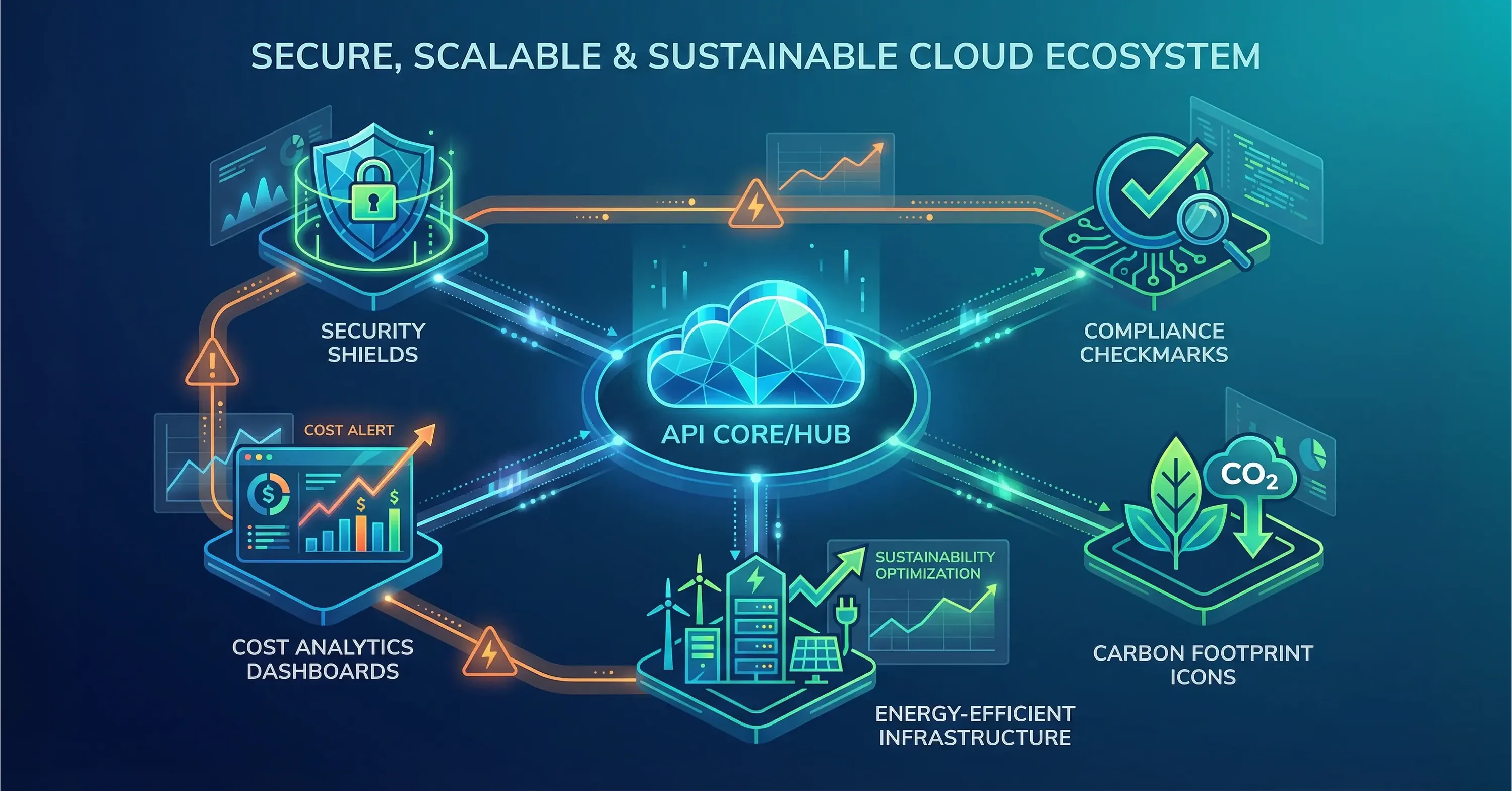

FinOps already tells engineers to tag resources, compare budgets with actual spend, and eliminate idle capacity. GreenOps reuses that telemetry but adds a carbon price.

Both disciplines depend on identical data streams:

- Resource metadata: instance ID, region, size
- Usage metrics: CPU hours, GB-months, IOPS
- Business context: cost center, product team, customer value

Once usage metrics are converted into estimated kilowatt-hours, adding emission factors (grams of CO₂ per kWh for each region) turns cost reports into carbon reports. That is why mature FinOps platforms often serve as the starting point for GreenOps dashboards. For a breakdown of how FinOps enables both spending visibility and emission tracking, explore The Cloud Cost Paradox: Why Migration Spikes Your Budget - And How a FinOps Solutions System Fixes It.
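To show how little changes when a cost report becomes a carbon report, here is a minimal sketch that aggregates hypothetical FinOps line items per cost center and adds a carbon column from a per-region factor table. It assumes each line item already carries an estimated `kwh` value; the `LineItem` type, region names, and factors are placeholders, not published data.

```python
from collections import defaultdict
from dataclasses import dataclass

@dataclass
class LineItem:
    resource_id: str
    region: str
    cost_center: str
    cost_usd: float
    kwh: float  # estimated energy, assumed already derived from usage metrics

# Hypothetical emission factors in grams CO2e per kWh; replace with a trusted source.
EMISSION_FACTORS = {"region-a": 50.0, "region-b": 700.0}

def cost_and_carbon_by_cost_center(items, factors):
    """Aggregate cost (USD) and emissions (kg CO2e) per cost center."""
    report = defaultdict(lambda: {"cost_usd": 0.0, "kg_co2e": 0.0})
    for item in items:
        factor = factors[item.region]  # a KeyError flags a region with no factor
        report[item.cost_center]["cost_usd"] += item.cost_usd
        report[item.cost_center]["kg_co2e"] += item.kwh * factor / 1000.0
    return dict(report)

items = [
    LineItem("i-001", "region-a", "video", 120.0, 400.0),
    LineItem("i-002", "region-b", "video", 80.0, 300.0),
    LineItem("i-003", "region-b", "search", 60.0, 100.0),
]
print(cost_and_carbon_by_cost_center(items, EMISSION_FACTORS))
# {'video': {'cost_usd': 200.0, 'kg_co2e': 230.0}, 'search': {'cost_usd': 60.0, 'kg_co2e': 70.0}}
```

Failing loudly on a missing factor is a deliberate choice here: a silently skipped region would understate the totals an auditor later checks.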

In short, most cost-saving actions are also carbon-saving actions, which makes finance teams powerful allies in the sustainability push.

How Smarter Workload Tuning Cut Cloud Costs by 18% and Emissions by 22%

A US media company tuned its video transcoding jobs. Dropping unnecessary 4K previews reduced EC2 costs by 18% and cut emissions by 22% because the workload ran in a coal-heavy region.

Building a carbon-aware pipeline

A DevOps pipeline that surfaces cost and carbon signals at every stage allows engineers to correct waste before code reaches production.

Key ingredients:

- Observation: export usage data from cloud APIs and on-prem meters into a time-series store
- Conversion: multiply kWh by regional emission factors from trusted databases such as the European Environment Agency
- Feedback: expose a pull-request comment or Slack alert showing projected cost and CO₂ impact
- Guardrails: deny deployments that exceed a budget or carbon threshold
- Continuous review: retrospective meetings that compare planned versus actual numbers
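The conversion, feedback, and guardrail stages above can be sketched as one small CI check. This is an illustration under stated assumptions: the observation stage is stubbed by a hypothetical `Projection` value, and the factor table, budgets, and message format are placeholders rather than any specific tool's behavior.

```python
from dataclasses import dataclass

@dataclass
class Projection:
    monthly_cost_usd: float
    monthly_kwh: float  # assumed to come from the observation stage's time-series store
    region: str

# Hypothetical factors (grams CO2e per kWh) and budgets; not real published values.
EMISSION_FACTORS_G_PER_KWH = {"region-a": 50.0, "region-b": 700.0}
BUDGET_USD = 1000.0
CARBON_BUDGET_KG = 150.0

def evaluate(p):
    """Conversion + feedback: return (passed, message) for a proposed deployment."""
    kg = p.monthly_kwh * EMISSION_FACTORS_G_PER_KWH[p.region] / 1000.0
    passed = p.monthly_cost_usd <= BUDGET_USD and kg <= CARBON_BUDGET_KG
    status = "PASS" if passed else "FAIL"
    message = (
        f"[{status}] projected cost ${p.monthly_cost_usd:,.2f}/month "
        f"(budget ${BUDGET_USD:,.2f}); projected emissions {kg:.1f} kg CO2e/month "
        f"(budget {CARBON_BUDGET_KG:.1f} kg)"
    )
    return passed, message

def gate(p):
    """Guardrail: print the feedback text and return a CI-style exit code (0 pass, 1 fail)."""
    passed, message = evaluate(p)
    print(message)
    return 0 if passed else 1

exit_code = gate(Projection(800.0, 300.0, "region-b"))
# Prints "[FAIL] projected cost $800.00/month (budget $1,000.00); projected emissions 210.0 kg CO2e/month (budget 150.0 kg)"
```

In a real pipeline, the printed message would be posted as the pull-request comment or Slack alert, and the job would end with `sys.exit(exit_code)` so a nonzero code blocks the merge. Here the deployment passes the cost budget but fails the carbon budget, which is exactly the case a cost-only gate would miss.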

Tools matter but culture matters more. Developers must see carbon metrics as first-class quality gates, similar to unit tests or CVE scans.

Embedding carbon checks into CI/CD turns sustainability into an everyday engineering concern rather than an annual compliance scramble. For actionable steps and workflow ideas, see Tech-Driven DevOps: How Automation is Changing Deployment.

Where cloud security and cloud compliance meet GreenOps

Security, compliance, and sustainability share a single truth: you cannot manage what you cannot measure. Cloud breaches also carry an energy price. IBM put the global average cost of a data breach at $4.88 million in 2024, and remediation can include urgent re-imaging of thousands of virtual machines, adding unplanned compute hours and emissions.

A fragmented toolset makes both security and carbon accounting harder. IDC found that organizations juggle 10 different cloud security tools on average and that 97% want to consolidate. Unified platforms cut alert fatigue, reduce redundant scans, and therefore lower energy use. For comprehensive approaches, including cloud managed security strategies, check out Cloud Managed Security: Unified Security Strategy for Cloud and Hybrid Environments.

Integrating cloud security events with FinOps data can surface hidden costs:

- Extra encryption cycles from poorly tuned IAM policies
- Redundant backups triggered by false-positive compliance alerts
- Over-provisioned bastion hosts kept alive for audits

FinOps dashboards already ingest billing APIs; adding security-driven resource spikes is a small leap. The result is clearer insight for both CFOs and CSOs.
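As a rough illustration of that leap, the sketch below assumes resources carry a hypothetical `trigger` tag recording why they were spun up, and reports how much metered energy traces back to security or compliance activity. The tag convention, trigger names, and kWh values are assumptions for illustration, not a provider feature.

```python
from collections import Counter

# Hypothetical usage records exported alongside billing data: (resource_id, trigger tag, kWh).
# The "trigger" tag is an assumed convention, not a field any provider supplies by default.
records = [
    ("vm-1", "product", 40.0),
    ("vm-2", "security-remediation", 16.0),
    ("bk-1", "compliance-backup", 4.0),
    ("bastion-1", "audit-hold", 20.0),
]
SECURITY_TRIGGERS = {"security-remediation", "compliance-backup", "audit-hold"}

def security_driven_energy(records, triggers):
    """Return (share of total kWh from security/compliance triggers, kWh per such trigger)."""
    kwh_by_trigger = Counter()
    for _resource_id, trigger, kwh in records:
        kwh_by_trigger[trigger] += kwh
    total = sum(kwh_by_trigger.values())
    breakdown = {t: kwh for t, kwh in kwh_by_trigger.items() if t in triggers}
    share = sum(breakdown.values()) / total if total else 0.0
    return share, breakdown

share, breakdown = security_driven_energy(records, SECURITY_TRIGGERS)
print(f"{share:.0%} of metered energy is security- or compliance-driven")
print(breakdown)
```

The same breakdown, multiplied by regional emission factors, gives CSOs and CFOs a shared view of which controls cost the most energy.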

A pragmatic roadmap for CTOs and architects

Moving from intention to execution involves staged milestones that fit within typical budget cycles.

- Next 3 months: establish a cross-functional GreenOps squad, audit current telemetry, pick one emission factor library
- 6 months: integrate carbon metrics into FinOps reports, tag 80% of resources with environment and owner labels
- 12 months: embed carbon gates in CI/CD, pilot workload shifting to low-carbon regions
- 18 months: publish internal carbon dashboards, include emission OKRs in team scorecards
- 24 months: produce auditor-ready scope 3 reports, align with CSRD and SEC formats
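For the 12-month pilot of workload shifting, the core decision can be sketched as picking the lowest-carbon region that still meets latency and data-residency constraints. The `Region` type, the constraint fields, and all numbers below are hypothetical placeholders; emission factors would come from whichever library the squad chose in the first milestone.

```python
from dataclasses import dataclass

@dataclass
class Region:
    name: str
    grams_co2_per_kwh: float  # from the chosen emission factor library
    latency_ms: float         # measured from the primary user base
    data_residency_ok: bool   # compliance constraint from the data protection team

def pick_low_carbon_region(regions, max_latency_ms):
    """Return the lowest-carbon region meeting latency and residency constraints, or None."""
    eligible = [
        r for r in regions
        if r.data_residency_ok and r.latency_ms <= max_latency_ms
    ]
    return min(eligible, key=lambda r: r.grams_co2_per_kwh, default=None)

# Hypothetical candidates; names and numbers are placeholders, not real provider data.
candidates = [
    Region("region-a", 30.0, 140.0, True),
    Region("region-b", 350.0, 40.0, True),
    Region("region-c", 120.0, 70.0, True),
    Region("region-d", 20.0, 60.0, False),
]
best = pick_low_carbon_region(candidates, max_latency_ms=100.0)
print(best.name if best else "no eligible region")  # region-c
```

Filtering on compliance and latency before ranking by carbon keeps the pilot from trading an emissions win for a residency violation or a degraded user experience.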

A leading provider of managed IT services can accelerate this roadmap by supplying managed collectors, security consolidation, and expert guidance, letting in-house engineers focus on product features rather than plumbing. See how such partnerships drive ongoing growth in How Managed IT Services Empower Business Growth. The earlier you start, the less painful the 2026 reporting season will be.

How FinOps Tagging Automation Cut Project Time by 60%

One SaaS vendor hired a managed services partner to retrofit tagging scripts across Azure and GCP. The project wrapped in four weeks, compared with an estimated ten weeks had it relied solely on internal staff.
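A tagging retrofit usually starts with an audit of what is missing. The sketch below assumes resources have already been exported from each provider's inventory into plain dictionaries, and it checks for the `environment` and `owner` labels named in the roadmap, matching keys case-insensitively because naming conventions differ across providers. The inventory entries are hypothetical.

```python
REQUIRED = ("environment", "owner")

def tag_coverage(resources, required=REQUIRED):
    """Return (fraction of fully tagged resources, list of (resource_id, missing keys))."""
    gaps = []
    for res in resources:
        # Treat empty values as missing; compare keys case-insensitively.
        keys = {k.lower() for k, v in res["labels"].items() if v}
        missing = [k for k in required if k not in keys]
        if missing:
            gaps.append((res["id"], missing))
    covered = len(resources) - len(gaps)
    coverage = covered / len(resources) if resources else 1.0
    return coverage, gaps

# Hypothetical inventory exported from Azure and GCP.
inventory = [
    {"id": "azure-vm-1", "labels": {"Environment": "prod", "Owner": "video-team"}},
    {"id": "gcp-vm-7", "labels": {"environment": "dev"}},
    {"id": "gcp-bucket-3", "labels": {"environment": "prod", "owner": ""}},
    {"id": "azure-sql-2", "labels": {"environment": "prod", "owner": "billing"}},
]
coverage, gaps = tag_coverage(inventory)
print(f"{coverage:.0%} tagged")  # 50% tagged
for resource_id, missing in gaps:
    print(resource_id, "missing", ", ".join(missing))
```

Running the audit before and after each batch of tagging scripts gives a simple progress metric against the 80% target.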

Carbon-aware DevOps in one minute

Carbon-aware DevOps is the practice of measuring energy use and related emissions for every cloud and on-prem workload, feeding those numbers back into the software delivery pipeline, and gating releases on both cost and carbon thresholds. It reuses FinOps telemetry, adds regional emission factors, and creates a single report that satisfies finance, security, and sustainability auditors.

Conclusion

Carbon reporting is no longer a voluntary ESG narrative; it is a regulated requirement arriving in 2026. The smartest way to prepare is to extend existing FinOps muscle into GreenOps, embed carbon metrics inside DevOps workflows, and link cloud usage and cloud security events (including data protection controls and incident responses) to unified cost-and-carbon dashboards. Start early, automate heavily, and the next audit will read like a routine sprint review rather than a crisis meeting.