Phase I: The Tactical Cleanup and Immediate Waste Reduction

The first step in any efficiency journey is stopping the hemorrhage of funds caused by zombie resources. These are assets that you pay for but do not use. This phase is reactive but necessary. It provides the initial budget relief required to fund longer-term strategic changes.

Visibility is the primary hurdle here. You cannot fix what you cannot see. Many teams launch instances for testing and forget to terminate them, leading to "sprawl." Recent data highlights the severity of this issue, showing that 84% of organizations identify managing cloud spend as their top challenge. Without a clear audit, these hidden costs compound monthly.

To begin this phase, focus on these tactical actions:

- Identify Idle Resources: Look for virtual machines (VMs) with CPU utilization below 5% for the last 30 days.
- Delete Unattached Storage: Remove storage volumes that persist after their parent instances have been terminated.
- Release Unused IP Addresses: Elastic IPs that are not attached to a running instance often incur hourly charges.
- Audit Snapshots: Old backups often sit in storage for years; implement a lifecycle policy to archive or delete them.
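The checks in this list can be sketched as simple filters over an inventory export. This is a minimal illustration, not a provider API: the `VM`, `Volume`, and `Snapshot` records and their field names are hypothetical, and the 365-day snapshot age limit is an assumed policy value. Only the 5% CPU threshold over 30 days comes from the text above.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional


@dataclass
class VM:
    name: str
    avg_cpu_percent_30d: float  # assumed to come from your monitoring export


@dataclass
class Volume:
    volume_id: str
    attached_to: Optional[str]  # None means the parent instance is gone


@dataclass
class Snapshot:
    snapshot_id: str
    created: date


def find_idle_vms(vms: List[VM], cpu_threshold: float = 5.0) -> List[str]:
    """Names of VMs whose 30-day average CPU is below the threshold."""
    return [vm.name for vm in vms if vm.avg_cpu_percent_30d < cpu_threshold]


def find_unattached_volumes(volumes: List[Volume]) -> List[str]:
    """IDs of volumes with no parent instance."""
    return [v.volume_id for v in volumes if v.attached_to is None]


def find_stale_snapshots(
    snapshots: List[Snapshot], today: date, max_age_days: int = 365
) -> List[str]:
    """IDs of snapshots older than max_age_days (assumed policy value)."""
    cutoff = today - timedelta(days=max_age_days)
    return [s.snapshot_id for s in snapshots if s.created < cutoff]


vms = [VM("web-1", 42.0), VM("test-7", 1.3), VM("batch-2", 4.9)]
print(find_idle_vms(vms))  # ['test-7', 'batch-2']
```

In practice the results would feed a review queue rather than an automatic delete, since a low-CPU VM may still be doing useful work (for example, a memory-bound cache).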

This waste is not negligible. In fact, 31% of IT leaders say over 50% of their cloud spending is wasted. By picking this low-hanging fruit first, you lower your monthly bill without touching your production architecture.

For a practical, step-by-step blueprint on rapid rightsizing, idle resource audits, and how simple scheduling can capture quick savings, review Cloud Cost Optimization: How to Cut Costs and Improve Cloud Performance.

Eliminating Zombie Environments

A mid-sized fintech company noticed their cloud bill rising by 15% month-over-month despite steady traffic. Upon audit, they discovered over 400 development environments running on weekends and holidays. By implementing a simple script to shut down non-production environments at 7:00 PM on Fridays and spin them back up at 7:00 AM on Mondays, they reduced their compute spend by nearly 30% immediately.
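The scheduling rule in this example can be sketched as a small predicate that a scheduled job could call before starting or stopping environments. The function names are hypothetical, and the sketch assumes the timestamp is already in the team's local time zone; the actual start and stop calls depend on your provider and are left out.

```python
from datetime import datetime, timedelta


def should_be_running(now: datetime) -> bool:
    """True if non-production environments should be up at `now` (local time)."""
    weekday = now.weekday()  # Monday is 0, Sunday is 6
    if weekday in (5, 6):  # Saturday and Sunday: always off
        return False
    if weekday == 4 and now.hour >= 19:  # Friday from 7:00 PM: off
        return False
    if weekday == 0 and now.hour < 7:  # Monday before 7:00 AM: off
        return False
    return True


def weekly_off_hours() -> int:
    """Count the hours per week during which environments are shut down."""
    monday = datetime(2025, 1, 6)  # any Monday at midnight works as a start
    hours = (monday + timedelta(hours=h) for h in range(168))
    return sum(1 for t in hours if not should_be_running(t))


print(weekly_off_hours())  # 60
```

The shutdown window covers 60 of the 168 hours in a week, or roughly 36% of the hours those environments would otherwise run. The share of the total compute bill this recovers depends on how much of the spend is non-production.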

Once you have stopped the bleeding, you must ensure it does not return. This requires moving from manual cleanup to automated governance.

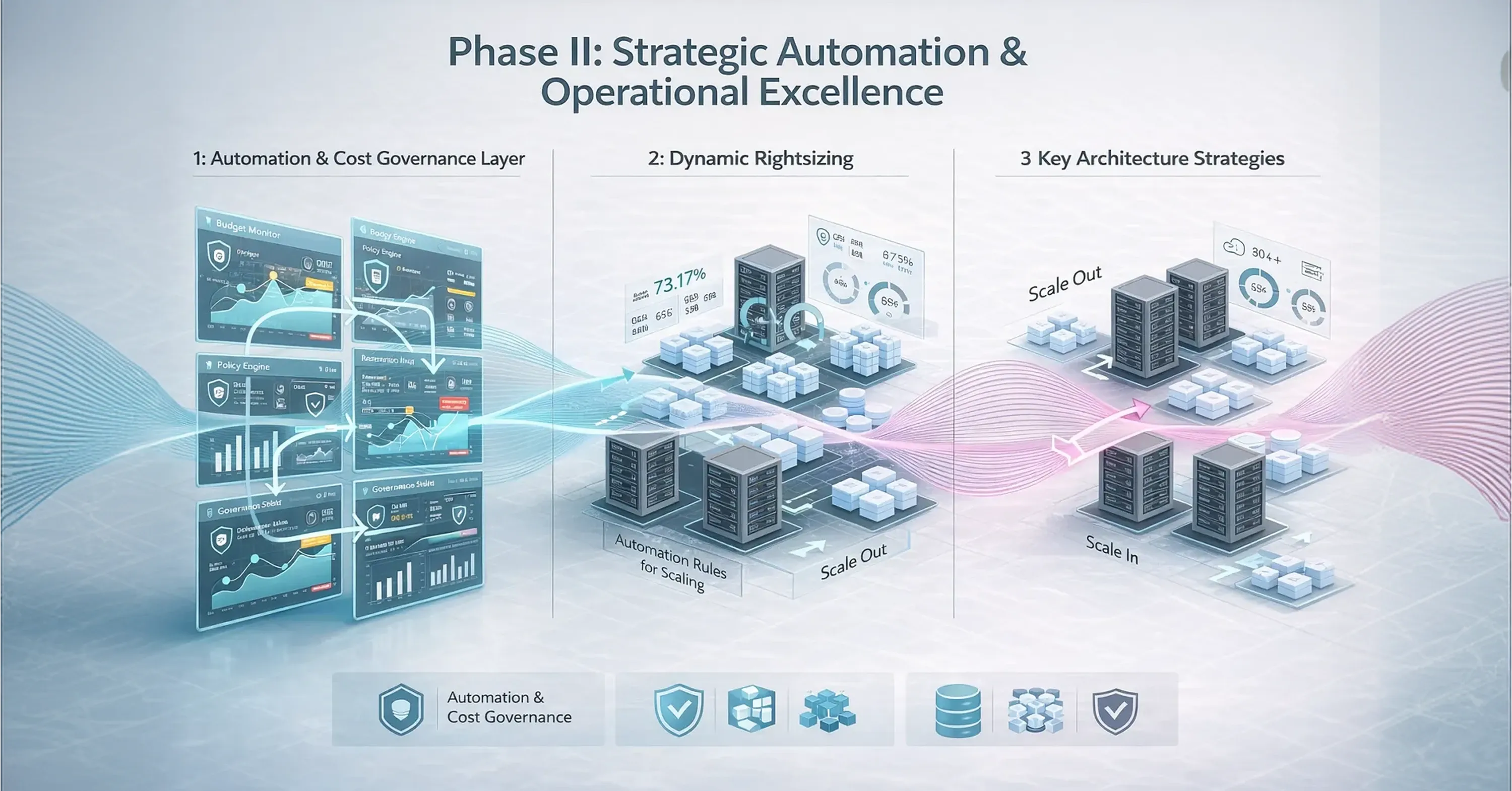

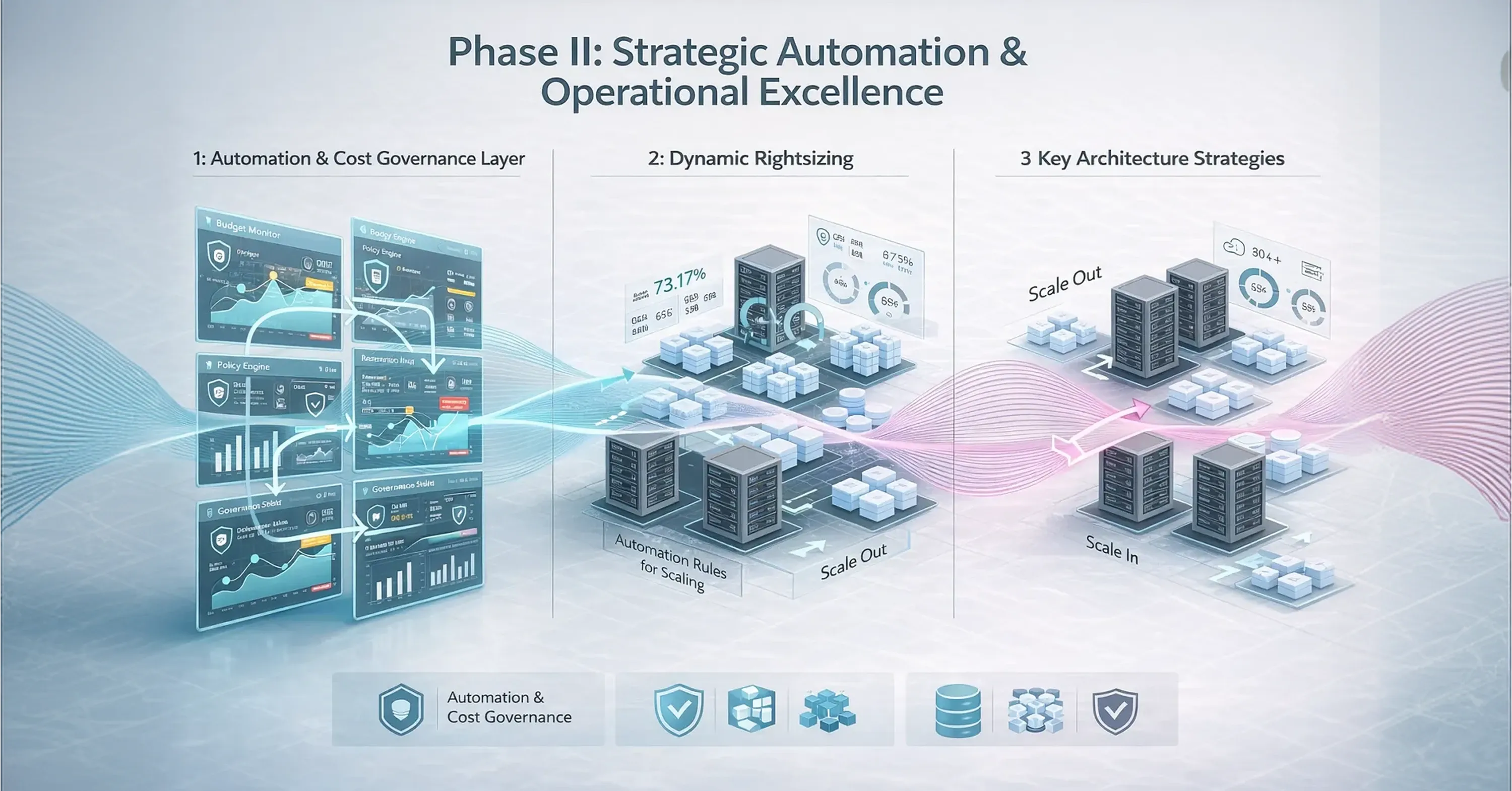

Phase II: Strategic Automation and Operational Excellence

Manual intervention is not scalable. As your infrastructure grows, the human effort required to manage costs becomes too high. Phase II focuses on proactive management using cloud cost management tools and automation. This is where you shift from asking "what can we delete?" to "how can we run this more efficiently?"

Operational excellence in the cloud means paying only for what you need, exactly when you need it. This involves rightsizing, which is the process of matching instance types to workload performance requirements. However, in 2026, rightsizing must be dynamic. Workloads fluctuate, and your infrastructure should breathe in and out with demand.

Key strategies for this phase include:

- Implement Auto-Scaling: Ensure groups of servers expand during peak traffic and shrink during lulls.
- Leverage Spot Instances: Use spare cloud capacity for fault-tolerant workloads (like batch processing) at steep discounts of up to 90%.
- Commitment-Based Discounts: Purchase Reserved Instances or Savings Plans for steady-state workloads, typically saving 30-50% over on-demand rates.
- Infrastructure as Code (IaC): Define all resources in code (e.g., Terraform) to prevent unauthorized "click-ops" deployments that bypass budget checks.
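To see how the pricing models in this list combine, the arithmetic can be sketched as a blended-cost estimate. The function name, the hourly rate, and the default discounts (40% for commitments, 70% for Spot) are illustrative assumptions chosen within the ranges quoted above, not actual provider prices.

```python
def blended_monthly_cost(
    on_demand_rate: float,
    hours: float,
    committed_share: float,
    spot_share: float,
    commit_discount: float = 0.40,  # assumed, within the 30-50% range above
    spot_discount: float = 0.70,    # assumed, below the "up to 90%" ceiling
) -> float:
    """Estimate monthly compute cost for a mix of purchase options."""
    if committed_share < 0 or spot_share < 0 or committed_share + spot_share > 1:
        raise ValueError("shares must be non-negative and sum to at most 1")
    on_demand_share = 1 - committed_share - spot_share
    committed = hours * committed_share * on_demand_rate * (1 - commit_discount)
    spot = hours * spot_share * on_demand_rate * (1 - spot_discount)
    on_demand = hours * on_demand_share * on_demand_rate
    return committed + spot + on_demand


baseline = blended_monthly_cost(0.10, 10_000, committed_share=0.0, spot_share=0.0)
mixed = blended_monthly_cost(0.10, 10_000, committed_share=0.6, spot_share=0.2)
print(f"baseline ${baseline:.2f}, mixed ${mixed:.2f}")
```

With these assumed inputs, covering 60% of hours with commitments and 20% with Spot brings a $1,000 on-demand bill down to about $620. The estimate ignores Spot interruptions and unused commitment, both of which reduce real savings.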

The market acknowledges this shift toward automation, as the global cloud cost management tools market was valued at $9.8 billion in 2024. These tools provide the necessary governance to prevent cost overruns before they happen.

For a broader blueprint of what a next-gen cloud platform looks like and how continuous automation and cost control converge, refer to Be Cloud: The Next-Gen Platform for Scalable Business.

Dynamic Scaling for E-Commerce

An online retailer faced massive server costs to support Black Friday traffic but used only 10% of that capacity during regular months. They moved to a containerized architecture managed by Kubernetes with aggressive auto-scaling policies, and ran their image processing queues on Spot instances. This strategy allowed them to absorb a projected 28% increase in cloud spending without exceeding their budget, because they paid for peak capacity only while it was actually needed.
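The scaling decision behind a setup like this can be sketched with the replica formula documented for the Kubernetes Horizontal Pod Autoscaler: desired replicas equal the current replica count multiplied by the ratio of the observed metric to the target metric, rounded up. The sketch below is a simplification that omits the autoscaler's tolerance band and stabilization behavior; the function name and the `min_replicas` and `max_replicas` defaults are hypothetical.

```python
import math


def desired_replicas(
    current_replicas: int,
    current_metric: float,
    target_metric: float,
    min_replicas: int = 1,
    max_replicas: int = 50,
) -> int:
    """Proportional scaling: ceil(current * observed / target), then clamp."""
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")
    raw = math.ceil(current_replicas * current_metric / target_metric)
    return max(min_replicas, min(max_replicas, raw))


# Peak traffic: 4 pods at 90% average CPU against a 60% target
print(desired_replicas(4, 90, 60))  # 6

# Quiet period: 10 pods at 10% CPU against a 50% target, floor of 3 pods
print(desired_replicas(10, 10, 50, min_replicas=3))  # 3
```

The floor and ceiling matter for cost control: the ceiling caps spend during a traffic spike, and the floor keeps enough capacity warm to avoid cold-start latency.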

With automation in place, the final step is to align your engineering architecture directly with your business model.

Phase III: Structural Modernization and FinOps Culture

The most mature stage of cloud optimization is structural. This is where you stop treating the cloud like a data center and start using cloud-native patterns. It involves a cultural shift toward FinOps, where engineering, finance, and product teams collaborate on spending decisions. The goal here is to reduce cloud costs per unit of value delivered.

For insight into how FinOps reframes cloud cost management, shifting from simple cost cutting to a focus on unit economics and continuous, feedback-driven improvement, explore The Cloud Cost Paradox: Why Migration Spikes Your Budget - And How a FinOps Solutions System Fixes It.

In this phase, you focus on Unit Economics. Instead of looking at total spend, you measure "cost per transaction" or "cost per customer." If your cloud bill goes up, it should be because your revenue went up. If your bill rises while revenue stays flat, you have an architectural problem.
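The unit-economics check described here can be sketched in a few lines. The function names and the sample figures are hypothetical; the rule itself (a bill growing faster than revenue signals a problem) is the one stated above.

```python
def cost_per_transaction(cloud_cost: float, transactions: int) -> float:
    """Unit cost: total cloud spend divided by transactions served."""
    if transactions <= 0:
        raise ValueError("transactions must be positive")
    return cloud_cost / transactions


def growth(previous: float, current: float) -> float:
    """Fractional period-over-period change, e.g. 0.2 for +20%."""
    return (current - previous) / previous


def cost_outpaces_revenue(
    prev_cost: float, cost: float, prev_revenue: float, revenue: float
) -> bool:
    """True when the cloud bill grew faster than revenue in the same period."""
    return growth(prev_cost, cost) > growth(prev_revenue, revenue)


# Bill up 20% while revenue is flat: worth an architectural review
print(cost_outpaces_revenue(10_000, 12_000, 100_000, 100_000))  # True

# Bill up 20% while revenue is up 30%: spend is tracking the business
print(cost_outpaces_revenue(10_000, 12_000, 100_000, 130_000))  # False
```

Tracking `cost_per_transaction` alongside this check shows whether efficiency is improving even when total spend rises.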

Modernization strategies for long-term value include:

- Serverless Adoption: Move to event-driven architectures built on Function-as-a-Service (FaaS) platforms such as Lambda, where you pay only for the milliseconds your code runs, eliminating idle time entirely.
- Managed Services: Replace self-hosted databases with managed versions to reduce operational overhead.
- Multi-Tier Storage: Automatically move rarely accessed data to "cold" storage classes that cost pennies per gigabyte.
- Tagging Strategy: Enforce strict tagging policies to allocate every dollar to a specific team, product, or feature.
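The tagging strategy in this list lends itself to a short sketch: reject resources that lack required tags, then roll up costs by owner. The required tag names (`team`, `product`), the billing line-item shape, and the function names are hypothetical; real billing exports differ by provider.

```python
from collections import defaultdict
from typing import Dict, List

REQUIRED_TAGS = ("team", "product")  # hypothetical tagging policy


def missing_tags(resource_tags: Dict[str, str]) -> List[str]:
    """Required tags that are absent or empty on a resource."""
    return [tag for tag in REQUIRED_TAGS if not resource_tags.get(tag)]


def allocate_costs(line_items: List[dict], key: str = "team") -> Dict[str, float]:
    """Sum cost per tag value; untagged spend lands in 'unallocated'."""
    totals: Dict[str, float] = defaultdict(float)
    for item in line_items:
        owner = item["tags"].get(key) or "unallocated"
        totals[owner] += item["cost"]
    return dict(totals)


bill = [
    {"cost": 120.0, "tags": {"team": "payments", "product": "checkout"}},
    {"cost": 80.0, "tags": {"team": "search", "product": "catalog"}},
    {"cost": 50.0, "tags": {"product": "catalog"}},  # no team tag
]
print(allocate_costs(bill))
print(missing_tags(bill[2]["tags"]))  # ['team']
```

Watching the size of the "unallocated" bucket over time is a simple way to measure how well the tagging policy is being enforced.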

For more on cloud-native modernization, orchestration, and leveraging containers and serverless to accelerate transformation, consult Top Cloud Sources Every Business Should Know.

This structural shift is critical as AI and heavy compute loads grow. Analysts predict that worldwide end-user spending on public cloud services will total $723.4 billion in 2025. To survive this growth, companies must ensure their architecture is cost-efficient by design. A leading provider of managed IT services can often assist in navigating these complex architectural migrations, ensuring security and efficiency are built in from the start.

AI Workloads: The New Cloud Cost Frontier

One of the fastest-growing drivers of cloud spend in 2026 is artificial intelligence infrastructure. Training and running large models requires specialized GPU clusters that can cost dozens of times more than traditional compute. Unlike web workloads, AI systems often run continuously, making idle capacity extremely expensive.

Organizations must shift from thinking about “cost per server” to “cost per inference” or “cost per model output.” Techniques such as model distillation, batching requests, caching frequent responses, and scheduling training jobs during off-peak pricing windows can dramatically reduce expenses without affecting user experience.
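A rough sketch of "cost per inference" shows why batching and caching matter. This is a deliberately simplified model under loud assumptions: the GPU completes a fixed number of batches per hour regardless of batch size, cache hits cost nothing to serve, and every name and number here is hypothetical rather than a measured figure.

```python
def cost_per_inference(
    gpu_hourly_cost: float,
    batches_per_hour: float,
    batch_size: int,
    cache_hit_rate: float = 0.0,
) -> float:
    """Average cost per user request under the simplifying assumptions above."""
    if batches_per_hour <= 0 or batch_size <= 0:
        raise ValueError("batches_per_hour and batch_size must be positive")
    if not 0.0 <= cache_hit_rate <= 1.0:
        raise ValueError("cache_hit_rate must be between 0 and 1")
    # Cost of one request that actually reaches the model
    cost_per_model_call = gpu_hourly_cost / (batches_per_hour * batch_size)
    # Only cache misses reach the model; hits are assumed to be free
    return cost_per_model_call * (1.0 - cache_hit_rate)


def is_loss_leader(unit_cost: float, revenue_per_interaction: float) -> bool:
    """True when each interaction costs more to serve than it earns."""
    return unit_cost > revenue_per_interaction


unbatched = cost_per_inference(4.0, 3600, batch_size=1)
optimized = cost_per_inference(4.0, 3600, batch_size=8, cache_hit_rate=0.5)
print(is_loss_leader(unbatched, 0.001), is_loss_leader(optimized, 0.001))
```

In this model, a batch size of 8 divides the unit cost by 8 and a 50% cache hit rate halves it again. Real throughput rarely scales linearly with batch size, so measured numbers should replace these assumptions before they inform pricing.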

Without architectural optimization, AI features can quickly become loss leaders, where each user interaction costs more to serve than it generates in revenue. Treating AI as a unit-economic problem, not just a technical one, is essential for sustainable adoption.

Refactoring for Unit Economics

A SaaS data platform realized their "cost per query" was higher than the price they charged customers on their basic tier. Their monolithic architecture required spinning up a massive server for even tiny user requests. By refactoring their application into microservices and using serverless functions for small queries, they aligned their infrastructure cost with the request size. This reduced their cost per transaction by 65%, turning a loss-leading product into a profitable one.

Addressing these structural issues helps organizations avoid the common pitfall of exceeding their cloud budgets by an average of 17%.

The Cloud Maturity Matrix

To understand where you stand, consider the Cloud Maturity Matrix. This helps you identify if you are merely reacting to bills or actively driving value.

1. Reactive (The "Bill Shock" Stage): You act only when the bill is too high. You lack visibility into who is spending what. Your primary metric is "Total Monthly Spend."

2. Proactive (The Automation Stage): You use auto-scaling and hold regular review meetings. You have purchased Savings Plans. Your primary metric is "Resource Utilization" (e.g., CPU %).

3. Optimized (The Value Stage): Your architecture scales to zero when not in use. Engineering teams see cost impact in real-time. Your primary metric is "Unit Cost" (e.g., Cost per API Call).

To implement these pillars and unify governance across multi-cloud environments, find expert guidance at Managed IT Services.

Moving to the "Optimized" stage usually requires external expertise or a dedicated internal team. A leading provider of managed IT services can accelerate this journey by deploying proven frameworks and proprietary tools that bridge the gap between engineering and finance.

Conclusion

Optimizing the cloud is not a one-time project; it is a continuous operational discipline. By following the three-phase approach (cleaning up waste, automating operations, and modernizing architecture), you can regain control of your budget. As the market grows and cloud spending is projected to increase by 28% over the next year, the companies that thrive will be those that treat cloud efficiency as a core engineering feature, not just a financial necessity.

To dive deeper into continuous improvement, unit economics, and the feedback loops that keep cloud costs aligned with business value, see Cloud Cost Optimization: How to Cut Costs and Improve Cloud Performance.

Start with visibility, move to automation, and aim for a culture where every engineer understands the value of the resources they consume.