Overview

First, you will learn why visibility is the foundation of any cloud cost program. Next, you will walk through five tightly connected steps: automated tagging and showback, workload right-sizing, spinning non-production environments to zero, modernizing architecture, and eradicating silent storage waste. Each step includes guardrails you can automate, plus a short real-world example so the concepts stick. By the end, you will know how to embed continuous cloud optimization into daily engineering workflows and make every dollar work harder.

Make Cost Visible With Tagging and Showback Dashboards

In most organizations cloud invoices arrive as giant CSV files no one understands. Before you can optimize anything, you need a clear map of who spent what, where, and why.

Start by mandating tags on every resource the moment it is created.

Tags should identify at least:

- Application or service name
- Environment (dev, test, prod)
- Owner (team or individual)
- Cost center or business unit

Use your cloud provider’s policies or third-party IaC hooks so untagged resources fail deployment. Pair the tags with showback dashboards - simple reports that surface spend per team each morning inside Slack or Microsoft Teams. The data lands directly in front of the engineers generating the cost, turning an abstract finance issue into a tangible engineering metric.
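As a rough illustration of the "untagged resources fail deployment" rule, here is a minimal Python sketch of the check a deployment hook could run. The tag keys, the `tag_violations` function name, and the allowed environment values are assumptions made for this example; your provider's policy engine or IaC tool will have its own syntax.

```python
# Hypothetical tag keys mirroring the four tags listed above.
REQUIRED_TAGS = ("application", "environment", "owner", "cost_center")
ALLOWED_ENVIRONMENTS = {"dev", "test", "prod"}


def tag_violations(tags):
    """Return a list of problems; an empty list means the resource may deploy."""
    problems = []
    for key in REQUIRED_TAGS:
        # A tag that is absent or blank counts as missing.
        if not tags.get(key, "").strip():
            problems.append(f"missing tag: {key}")
    environment = tags.get("environment", "").strip()
    if environment and environment not in ALLOWED_ENVIRONMENTS:
        problems.append(f"unknown environment: {environment}")
    return problems


if __name__ == "__main__":
    # Example resource that is missing two tags and uses an unknown environment.
    for problem in tag_violations({"application": "checkout", "environment": "qa"}):
        print(problem)
```

A hook like this would block the deployment whenever the returned list is non-empty and show the messages to the engineer.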

Blocking oversized instance types in development and staging is another quick win. A rule in AWS Service Control Policies or Google Cloud Organization Policy can prevent anyone from launching 64-core monsters where a t3.small is enough.
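The logic behind such a guardrail is small. The sketch below is not AWS or Google Cloud policy syntax; it is a plain Python illustration of an allow-list rule, and the environment names and permitted instance types are hypothetical values chosen for the example.

```python
# Hypothetical non-production environments and the instance types they may use.
NON_PROD_ENVIRONMENTS = {"dev", "test", "staging"}
ALLOWED_NON_PROD_TYPES = {"t3.micro", "t3.small", "t3.medium"}


def launch_allowed(environment, instance_type):
    """Allow any type in production; restrict non-production to the allow-list."""
    if environment not in NON_PROD_ENVIRONMENTS:
        return True
    return instance_type in ALLOWED_NON_PROD_TYPES
```

An allow-list is used here instead of a deny-list so that newly released large instance types are blocked by default.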

When teams see their numbers and bump up against guardrails, they fix issues early, long before Finance uncovers them during the quarter-end scramble.

For practical pros and cons of automated tagging and near-real-time dashboards - including why 80% of organizations still struggle with data integration between clouds and finance - see The Cloud Cost Paradox: Why Migration Spikes Your Budget - And How a FinOps Solutions System Fixes It.

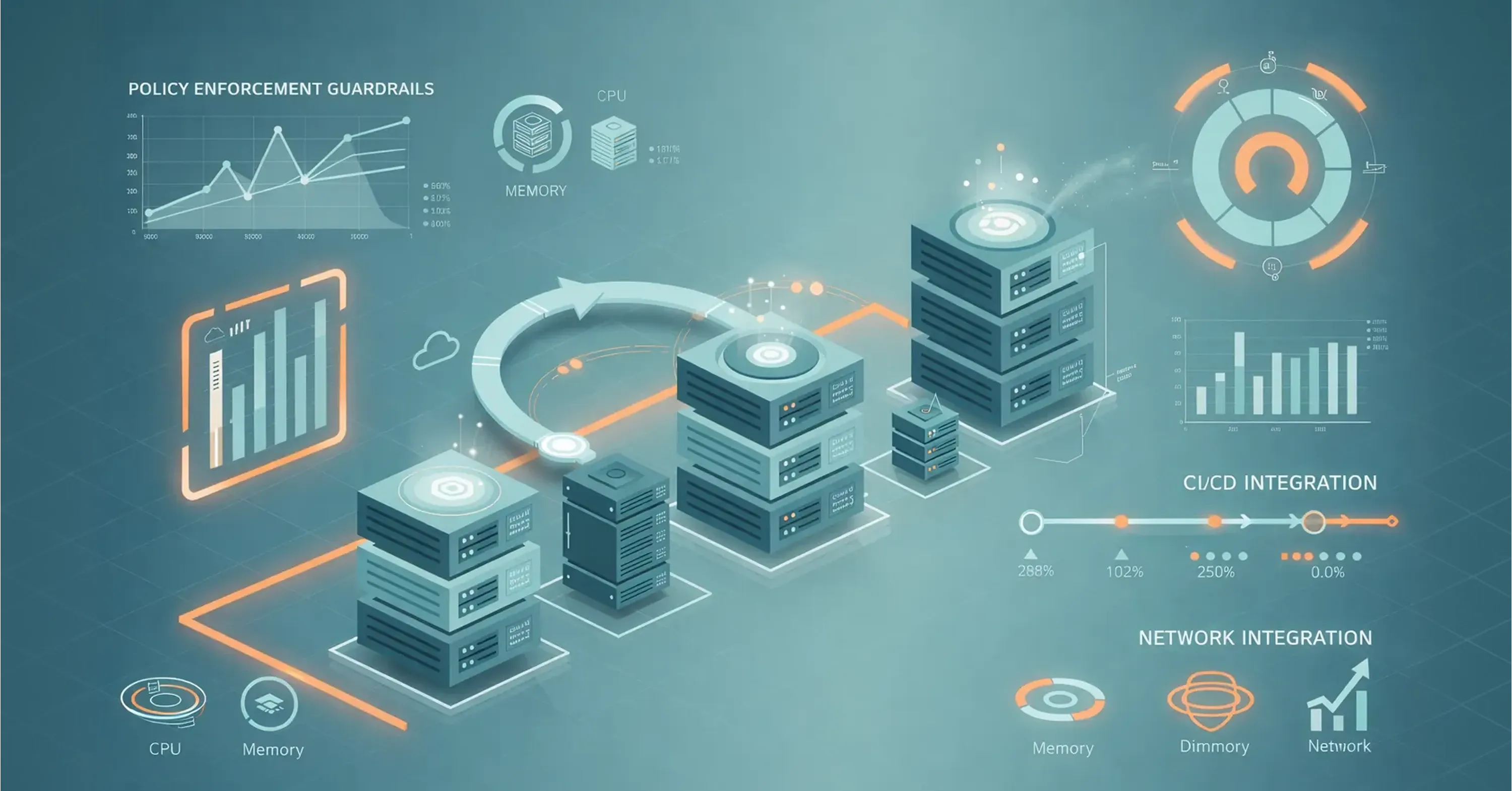

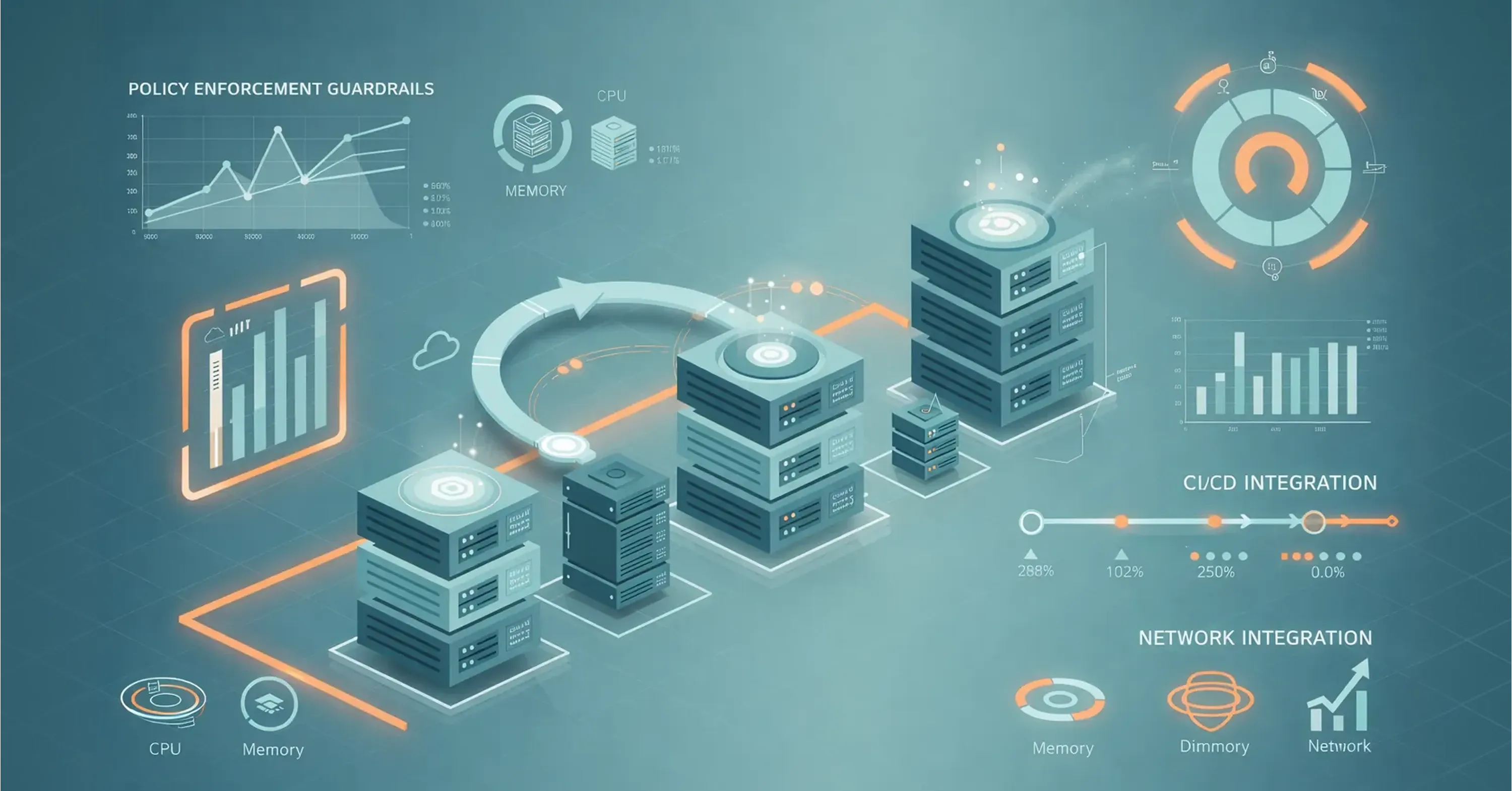

Right-Size Workloads and Enforce Automatic Guardrails

Once visibility is in place, the next leap in cloud cost optimization is simply running your workloads on smaller, cheaper instances that still meet performance goals. It sounds obvious, yet enterprises still pay 35% more than they need to, as highlighted by KPMG research.

Begin by analyzing CPU, memory, and network utilization over at least 30 days. Look for sustained usage below 40%; that often signals an instance is at least one size too large.
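One way to turn that rule of thumb into code is sketched below. It assumes you have already exported one peak utilization percentage per day for each metric; the function name, the input shape, and the reading of "sustained" as "every daily peak stays under the threshold" are assumptions for this example.

```python
def rightsizing_candidate(metrics, threshold_pct=40.0, min_days=30):
    """Return True when every metric stayed under the threshold for the whole window.

    metrics maps a metric name (for example "cpu") to a list of daily peak
    utilization percentages, one value per day.
    """
    for daily_peaks in metrics.values():
        if len(daily_peaks) < min_days:
            return False  # not enough history to judge safely
        if max(daily_peaks) >= threshold_pct:
            return False  # at least one day needed the headroom
    return bool(metrics)  # an instance with no metrics is never a candidate
```

Using daily peaks instead of averages is deliberately conservative: a single busy day keeps the instance off the candidate list.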

Then automate:

- Scheduled rightsizing recommendations piped to pull requests so engineers approve changes alongside code.
- Rebalancing to modern instance families that cost less per vCPU.
- Policies that prevent launching anything larger than a certain size in dev, test, or sandbox accounts.

Finish with performance tests that confirm latency has not regressed. Keeping these steps in your CI pipeline means they run every sprint, not once a year.

For a blueprint on rapid rightsizing, idle resource audits, and how scheduling alone can capture 20–30% cost savings, study The Cloud Cost Paradox: Why Migration Spikes Your Budget - And How a FinOps Solutions System Fixes It.

Rightsizing in the Real World

An e-commerce retailer configured AWS Compute Optimizer to send rightsizing recommendations to Jira. Developers accepted 80% of the suggestions during backlog grooming, shrinking average instance size from m5.large to t4g.medium and saving $240k annually without a single customer report of slower page loads.

With hot paths right-sized, we can tackle the next major lever: turning things completely off when no one is using them.

Spin Non-Production Environments Down to Zero

Development and staging clusters often run 24/7 even though no engineer works at 3 a.m. The fix is aggressively simple: shut them down when idle. Teams that automate this slash development cloud spend by up to 70%.

Start by:

- Defining working hours, for example 7 a.m.–7 p.m. local time, Monday to Friday.
- Using serverless schedules or managed services like AWS Instance Scheduler to stop compute at night and start it again before the next workday.
- Parking entire Kubernetes namespaces with scripts that save manifests, delete pods, and redeploy on demand.

Include “on-demand override” buttons so night-shift engineers can extend runtime with a single click. That small convenience removes the last excuse for leaving clusters on.
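The decision at the heart of such a schedule fits in a few lines. The sketch below assumes the 7 a.m.–7 p.m., Monday-to-Friday window from the example above and models the override as an optional expiry time; the function and parameter names are hypothetical, and `now` is expected to already be in the team's local time.

```python
from datetime import datetime, time

WORK_START = time(7, 0)   # 7 a.m. local time
WORK_END = time(19, 0)    # 7 p.m. local time


def should_be_running(now, override_until=None):
    """Decide whether a non-production environment should be up at `now`."""
    # An active override keeps the environment running outside working hours.
    if override_until is not None and now < override_until:
        return True
    is_weekday = now.weekday() < 5  # Monday is 0, Friday is 4
    return is_weekday and WORK_START <= now.time() < WORK_END
```

A scheduler would call this every few minutes and start or stop compute whenever the answer changes.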

Teams looking to cut waste quickly often pair schedule automation with Cloud Services and DevOps - achieving faster results and reinvesting the savings in innovation.

Modernize: From Lift-and-Shift to Microservices and Containers

Many migrations copy on-prem bloat straight into the cloud. Monolithic stacks keep resources pegged even when traffic is quiet, undoing the very elasticity you are paying for. Microservices, containers, and serverless functions sidestep that trap because they natively scale to usage.

Kick off modernization by:

- Containerizing stateless parts of the monolith first; Kubernetes or managed ECS/EKS offers autoscaling out of the box.
- Breaking the application into discrete services that can scale independently.
- Moving infrequently used endpoints to event-driven serverless functions where you pay per invocation.

Measure success by tracking cost per thousand requests. If the number flat-lines while traffic climbs, you have achieved smart scaling.
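The metric itself is simple arithmetic. Here is a minimal sketch, with made-up monthly figures used purely for illustration:

```python
def cost_per_thousand_requests(total_cost, request_count):
    """Unit cost: total spend divided by requests served, scaled to 1,000 requests."""
    if request_count <= 0:
        raise ValueError("request_count must be positive")
    return total_cost / request_count * 1000


if __name__ == "__main__":
    # Hypothetical months: (total monthly cost in dollars, requests served).
    months = [(12_000.0, 40_000_000), (18_000.0, 60_000_000)]
    for cost, requests in months:
        print(round(cost_per_thousand_requests(cost, requests), 4))
```

In this invented example traffic grows by half and the bill grows by half, so the unit cost stays flat, which is the pattern to look for.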

For more context on cloud-native modernization, orchestration, and leveraging containers and serverless for velocity, see Top Cloud Sources Every Business Should Know.

Serverless Cost Optimization in Practice

A ticketing platform shifted its user authentication module from a VM to AWS Lambda. The module received bursts during ticket drops and almost zero traffic otherwise. The move cut monthly compute for that component from $18k to $1.2k, with end-to-end latency unchanged.

Microservices help, yet two levers remain: the rate you pay for each unit of compute, and the hidden storage leftovers that will still drain money unless you actively hunt them.

Pay Less per Unit: Spot Instances, Reserved Capacity, and Cost Metrics

Cloud cost optimization is not only about using fewer resources, but also about paying a lower rate for the resources you still need. Once workloads are modernized and right-sized, pricing models become a powerful next lever.

Spot Instances (Spot VMs or Preemptible VMs in GCP) allow teams to run interruptible workloads - such as CI pipelines, batch processing, analytics, or ML training - at discounts of up to 90% compared to on-demand pricing, depending on provider, region, and instance type.

For implementation patterns and common pitfalls, see The Cloud Cost Paradox: Why Migration Spikes Your Budget - And How a FinOps Solutions System Fixes It.

For predictable, always-on workloads, Reserved Instances and Savings Plans enable long-term discounts of up to 72% in exchange for one- or three-year commitments. Mature FinOps teams commit only to baseline demand while keeping variable traffic flexible. This layered approach is commonly applied within modern cloud operating models described in Cloud Services and DevOps.
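To see why committing only to baseline demand matters, consider this simplified cost model. It assumes committed capacity is billed whether or not it is used and that anything above the commitment runs at the on-demand rate; the function name and the numbers in the usage note are hypothetical and do not reflect any provider's actual pricing.

```python
def layered_hourly_cost(demand_units, committed_units, on_demand_rate, commitment_discount):
    """Cost of one hour under a baseline commitment plus on-demand overflow."""
    if not 0 <= commitment_discount < 1:
        raise ValueError("commitment_discount must be in [0, 1)")
    # Committed capacity is paid for even when demand falls below it.
    committed_cost = committed_units * on_demand_rate * (1 - commitment_discount)
    # Demand above the commitment is billed at the full on-demand rate.
    overflow_units = max(0, demand_units - committed_units)
    return committed_cost + overflow_units * on_demand_rate
```

With a rate of 1.0 per unit and a 50% discount, committing 6 units costs 3.0 per hour even in a quiet hour that needs only 4 units, so over-committing above the true baseline erodes the discount.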

At this stage, leading organizations shift the conversation with Finance from total cloud spend to cost per unit. Instead of asking “Why are we spending more?”, they track metrics such as Cost per Transaction (fintech) or Cost per Active User (SaaS). This unit-economics framing is a core principle behind scalable cloud platforms outlined in Be Cloud: The Next-Gen Platform for Scalable Business.

Eliminate Silent Storage Waste: Snapshots, Volumes, Expensive Tiers

Snapshots, unattached volumes, and objects sitting in premium storage classes are the unseen leeches of cloud bills. CIO Magazine reports 31% of IT leaders say over half their cloud money goes to waste, and silent storage plays a starring role.

Create an automated cleanup routine:

- List unattached EBS volumes older than 14 days; email owners, then delete if unclaimed after a grace period.
- Set lifecycle policies that migrate S3 objects to infrequent access after 30 days and to Glacier after 90.
- Purge obsolete AMIs along with their snapshots.
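The selection rules in the first two steps can be sketched as plain Python before wiring them to a real cloud API. The volume records below are hypothetical dictionaries with `id`, `attached`, and `created` fields, and volume age is measured from creation time as a simplification; a production routine would read these from your provider's inventory and still notify owners before deleting anything.

```python
from datetime import datetime, timedelta


def stale_unattached_volumes(volumes, now, max_age_days=14):
    """Return IDs of volumes that are unattached and older than the cutoff."""
    cutoff = now - timedelta(days=max_age_days)
    return [v["id"] for v in volumes if not v["attached"] and v["created"] < cutoff]


def storage_class_for_age(age_days):
    """Map object age to a storage tier using the 30-day and 90-day rules."""
    if age_days >= 90:
        return "glacier"
    if age_days >= 30:
        return "infrequent_access"
    return "standard"
```

The first function only produces a candidate list, which keeps the destructive delete step separate and easy to gate behind the grace period.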

Logs are the next candidate. Retain recent ones in hot storage for debugging, and archive the rest to cold storage priced at cents per gigabyte.

For a down-to-earth checklist on audit, retention, and scheduling to cut storage waste (plus the basics of rightsizing and smart scaling), refer to The Cloud Cost Paradox: Why Migration Spikes Your Budget - And How a FinOps Solutions System Fixes It.

Storage Waste in the Real World

A fintech company found 7 TB of orphaned snapshots costing $6k per month. A one-time cleanup plus a lifecycle rule now saves $72k annually, enough to cover their SOC 2 audit.

With compute, architecture, and storage optimized, the last puzzle piece is embedding continuous FinOps automation so the gains stick.

Build Continuous FinOps and Automation Loops

Optimization is not a project; it is a feedback loop embedded into every deployment.

Combine financial insight with engineering speed:

- Integrate cost metrics into CI/CD checks so a pull request fails if projected monthly cost jumps more than 15%.
- Hold monthly FinOps reviews where teams explain spikes shown on dashboards. The meeting should last 30 minutes and focus on actions, not blame.
- Forward cost anomalies from monitoring tools directly into the incident management workflow so the same rigor used for uptime applies to overspend.
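The CI/CD check from the first item reduces to a percentage comparison. The sketch below assumes some cost-estimation step has already produced the current and projected monthly figures; the function name and the handling of a zero baseline are assumptions for this example.

```python
def cost_check_passes(current_monthly, projected_monthly, max_increase=0.15):
    """Return True when the projected cost stays within the allowed increase."""
    if current_monthly <= 0:
        # No baseline to compare against: pass only if nothing new is being spent.
        return projected_monthly <= 0
    increase = (projected_monthly - current_monthly) / current_monthly
    return increase <= max_increase
```

A pipeline step would fail the pull request when this returns False and print both figures so the author can see what drove the jump.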

Organizations looking to embed FinOps discipline into daily workflows can get deeper tactical guidance by reviewing Be Cloud: The Next-Gen Platform for Scalable Business.

How Continuous FinOps Works in the Real World

A gaming studio built an internal Slack bot that watches AWS Cost Explorer. When daily spend exceeds a dynamic threshold, it tags the owner and opens a Jira ticket. Since launch, variance between forecast and actual spend has stayed within ±5%, down from ±22%.
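The example does not say how the studio computes its dynamic threshold. One common approach, shown here purely as an assumption, is to flag a day whose spend sits several standard deviations above the recent average:

```python
from statistics import mean, stdev


def is_spend_anomaly(history, today, sigmas=3.0):
    """Flag today's spend when it exceeds mean + sigmas * stdev of recent days."""
    if len(history) < 2:
        return False  # stdev needs at least two data points
    threshold = mean(history) + sigmas * stdev(history)
    return today > threshold
```

Because the threshold is derived from recent history, it rises and falls with normal spend instead of relying on a fixed dollar limit.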

Continuous FinOps completes the circle, turning the earlier steps from one-off wins into sustained savings and predictable budgets.

What Is Cloud Cost Optimization?

Cloud cost optimization is the continuous practice of analyzing, right-sizing, and automating cloud resources - compute, storage, and networking - so workloads meet performance targets at the lowest possible cost, without slowing developer delivery.

It is not a one-time cleanup but an ongoing discipline that combines visibility, ownership, and automation. Through mandatory tagging, showback dashboards, and automated guardrails, teams detect and correct overspend early while maintaining cloud elasticity and innovation.

At its core, cloud cost optimization is about spending smart, not simply spending less.

Conclusion

Cloud cost optimization is not a one-off initiative, but a continuous discipline embedded in how teams build, deploy, and operate software. It begins with clear cost visibility, advances through rightsizing and automated shutdowns, and matures with cloud-native architectures that scale to real demand rather than peak assumptions. Along the way, eliminating silent storage waste prevents savings from quietly leaking away.

When these practices are reinforced by continuous FinOps loops, cost control becomes proactive instead of reactive. Engineering leaders gain predictable budgets without sacrificing performance, reliability, or delivery speed. The outcome is not simply lower cloud bills, but a smarter, more resilient cloud operating model that supports sustainable growth.