Overview

Modern businesses need to deploy applications quickly, reliably, and at scale - something traditional infrastructure struggles to deliver. Containerization packages software so it runs consistently across environments, while orchestration platforms like Kubernetes automate deployment, scaling, and recovery. This article explains how these technologies reduce environment-specific failures, enable zero-downtime releases, and support business growth. It also explores the broader Kubernetes ecosystem and the role of managed service providers in helping organizations adopt cloud-native infrastructure without building large in-house DevOps teams.

The Legacy Trap: Why Monolithic Deployments Fail

For decades, applications were built as massive, monolithic structures where every component was tightly interwoven. If you needed to update the billing system, you had to redeploy the entire application. This created a high-stakes environment where a single error could take down the whole system. To make matters worse, scaling was a manual nightmare. If traffic spiked, IT administrators had to physically provision new servers or manually configure virtual machines, a process that was often too slow to capture the surge in demand.

The fragility of these legacy systems is the primary driver behind the shift to containerized systems. In a traditional setup, the application relies heavily on the specific configuration of the host server. If that server is updated or changed, the application can break. This dependency creates a bottleneck for innovation because teams become afraid to touch the infrastructure. They prioritize stability over speed, which leaves the business unable to react quickly to market changes.

This operational drag is significant. Companies that stick to manual, monolithic deployments face:

- Inconsistent environments where bugs appear only in production.
- Slow release cycles caused by fear of breaking the monolith.
- Resource waste from over-provisioning servers just to be safe.

To break this cycle, engineering leaders needed a way to decouple the application from the underlying hardware. They needed a standard unit of software that would run exactly the same way, regardless of where it was deployed.

When Traffic Spikes Become Business Risks

A mid-sized retail company used to host its e-commerce platform on a traditional monolithic server setup. During a Black Friday sale, traffic surged by 300%. The team tried to manually spin up additional virtual machines, but the configuration process took 45 minutes. By the time the new capacity was online, the site had already crashed, and thousands of customers had abandoned their carts. The lack of automated scaling and the heavy reliance on manual server management cost them substantial revenue.

The Solution: Docker Packaging and Kubernetes Orchestration

The industry response to these challenges came in two waves: first came the container, and then came the tool to manage it. Docker revolutionized software packaging by allowing developers to bundle an application with all its dependencies - libraries, configuration files, and runtimes - into a single, lightweight image. Each running instance of that image is a "container" that behaves the same way on a developer’s MacBook, a testing server, or a cloud instance. The "works on my machine" problem largely disappears because the application no longer depends on what happens to be installed on the host.

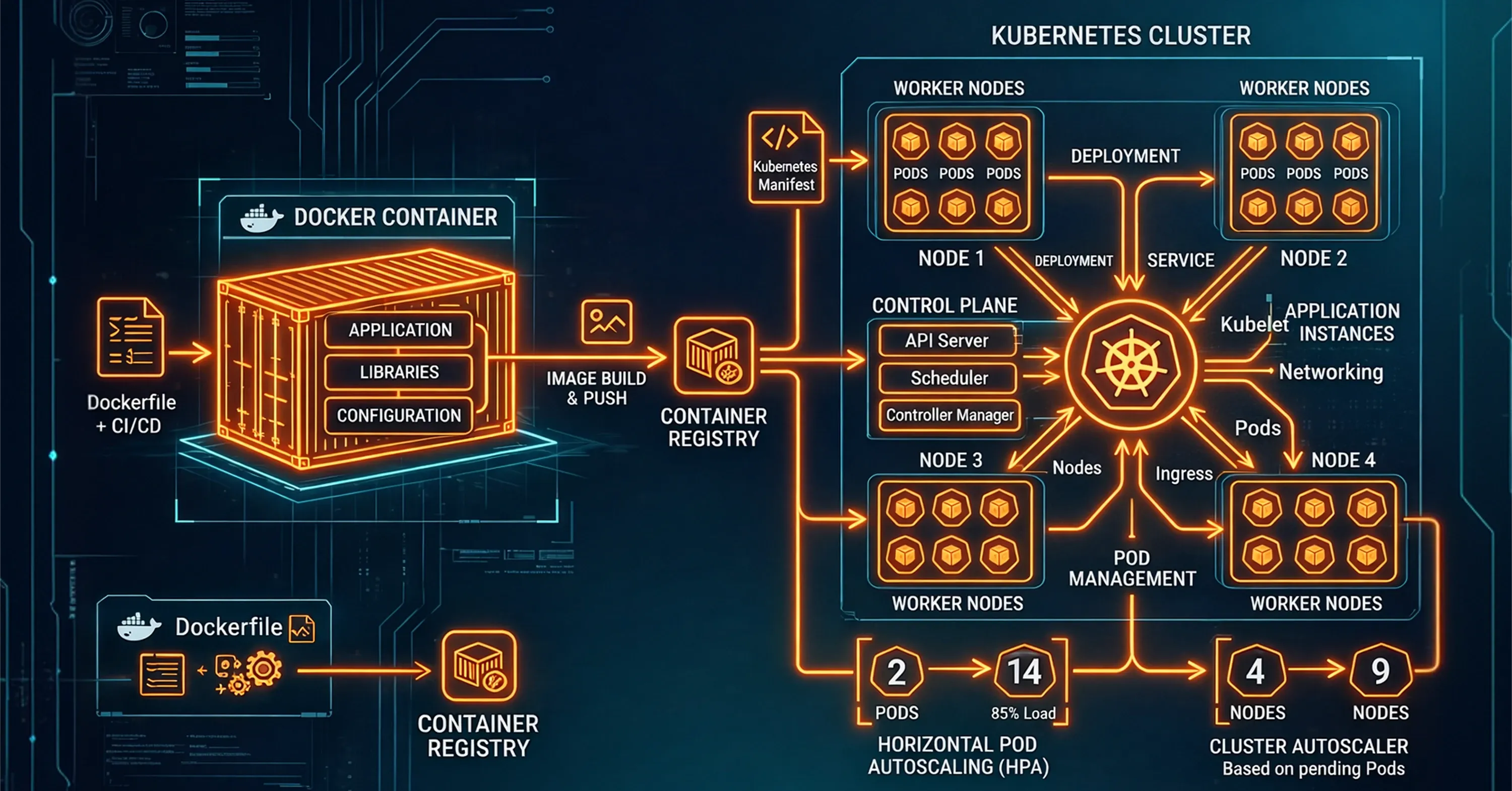

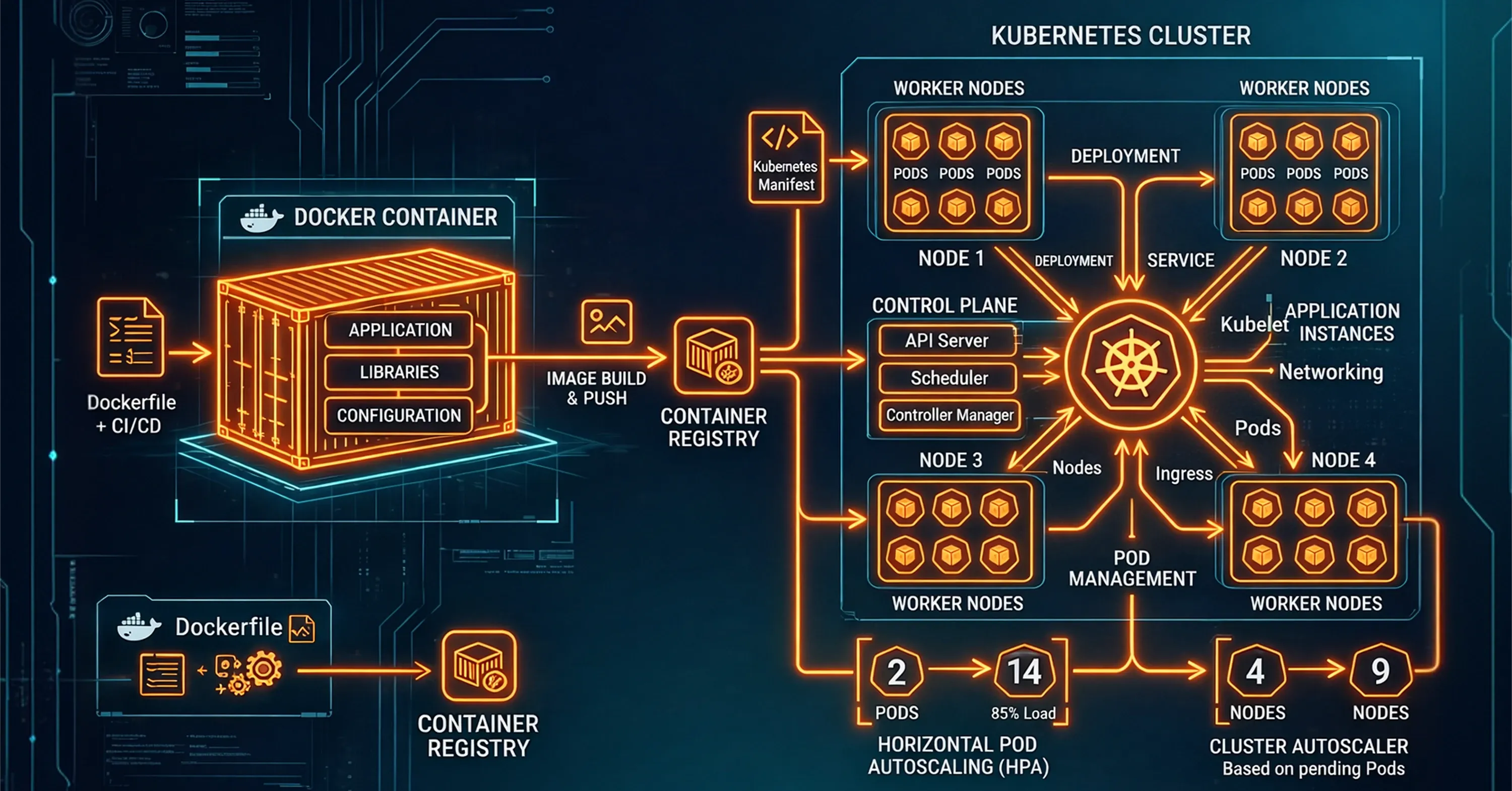

However, running a few containers is easy; managing thousands by hand is impractical. This is where containerization and orchestration tools work together. While Docker creates the package, Kubernetes (often abbreviated as K8s) manages the delivery. Think of Docker as the shipping container and Kubernetes as the crane and logistics system at the port. Kubernetes automates the deployment, scaling, and management of these containers. It continuously compares the state you declared (for example, "three copies of this service") with what is actually running, and automatically restarts or replaces any container that fails, so the system recovers from many failures without human intervention.
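The self-healing behavior described above comes down to a loop that compares desired state with actual state and corrects the difference. The following is a minimal sketch of that idea in plain Python. `ToyCluster`, `Container`, and the `web-N` names are hypothetical stand-ins invented for illustration; they are not part of any Kubernetes API, and a real cluster handles health checks, restarts, and scheduling in far more detail.

```python
from dataclasses import dataclass, field
from itertools import count


@dataclass
class Container:
    name: str
    healthy: bool = True


@dataclass
class ToyCluster:
    """Hypothetical in-memory stand-in for a cluster (not a Kubernetes API)."""
    desired_replicas: int
    containers: list = field(default_factory=list)
    _ids: count = field(default_factory=count, init=False, repr=False)

    def reconcile(self) -> int:
        """Move actual state toward desired state; return how many containers were started."""
        # Drop containers that are no longer healthy.
        self.containers = [c for c in self.containers if c.healthy]
        # Start replacements until the desired count is met again.
        started = 0
        while len(self.containers) < self.desired_replicas:
            self.containers.append(Container(name=f"web-{next(self._ids)}"))
            started += 1
        # Remove surplus containers if the desired count was lowered.
        del self.containers[self.desired_replicas:]
        return started


cluster = ToyCluster(desired_replicas=3)
cluster.reconcile()                    # initial rollout: starts 3 containers
cluster.containers[1].healthy = False  # simulate a crash
replaced = cluster.reconcile()         # the loop notices and starts 1 replacement
print(replaced, [c.name for c in cluster.containers])
# prints: 1 ['web-0', 'web-2', 'web-3']
```

The operator only declares how many replicas should exist; the loop, not a person, decides what to start or stop to get there.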

The adoption of these tools is no longer experimental. Recent data shows that 98% of surveyed organizations reported they have adopted cloud native techniques to modernize their stack. Furthermore, Kubernetes has solidified its position as the market leader, with 82% of container users now running Kubernetes in production. This combination provides the robust infrastructure needed to support rapid development and high availability.

For a deeper look at how container orchestration and managed platforms can accelerate transformation, check out Top Cloud Sources Every Business Should Know.

From Monthly Releases to Daily Deployments

A regional fintech firm struggled with monthly release cycles that required weekend downtime. By migrating to Docker for packaging and Kubernetes for orchestration, they moved to a microservices architecture. This allowed them to update specific features, like their mobile check deposit service, independently of the core banking ledger. They now deploy updates daily with zero downtime, using Kubernetes to gradually roll out changes to a small subset of users before a full release.
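Rolling a change out to a small subset of users first is often called a canary release. In practice, the traffic split is usually configured in an ingress controller or service mesh rather than written by hand. The sketch below only illustrates the underlying idea, assuming users are identified by a string ID; the `bucket` and `pick_version` helpers and the `user-N` IDs are hypothetical.

```python
import hashlib


def bucket(user_id: str) -> int:
    """Map a user ID to a stable bucket in the range 0-99."""
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % 100


def pick_version(user_id: str, canary_percent: int) -> str:
    """Send the user to the canary if their bucket falls inside the rollout percentage."""
    return "canary" if bucket(user_id) < canary_percent else "stable"


users = [f"user-{n}" for n in range(1000)]
for percent in (0, 5, 50, 100):
    share = sum(pick_version(u, percent) == "canary" for u in users)
    print(f"{percent:>3}% rollout -> {share} of {len(users)} users on canary")
```

Because the bucket is derived from a hash of the user ID, each user consistently sees the same version, and anyone included at 5% stays included as the percentage is raised toward a full release.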

Unpacking the Benefits of Containerization and Orchestration

When organizations successfully implement containerization and orchestration, the benefits extend far beyond the IT department. The primary advantage is speed. Developers can code and test locally in containers that mirror production, drastically reducing the time between writing a feature and getting it in front of customers. This agility is backed by data, as 94% of organizations report clear benefits from cloud-native applications or containers.

Beyond speed, these systems offer strong scalability and portability. Applications can scale up quickly during peak demand and scale down when traffic subsides, optimizing cloud costs. Additionally, containers are portable across different cloud providers and on-premise data centers. This flexibility is crucial for future-proofing, as analysts project that 75% of all AI/ML deployments will use container technology by 2027. The ecosystem surrounding these tools is thriving, with the global container orchestration market projected to reach $1.02 billion in 2025.
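To make the scale-up and scale-down behavior concrete, the Kubernetes Horizontal Pod Autoscaler documentation describes its core calculation as `desiredReplicas = ceil(currentReplicas * currentMetricValue / desiredMetricValue)`, with small deviations ignored within a tolerance. The sketch below applies that formula in Python. The function name, the replica bounds, and the sample CPU figures are illustrative assumptions, and the real autoscaler adds further safeguards such as stabilization windows.

```python
import math


def desired_replicas(current_replicas: int, current_metric: float, target_metric: float,
                     min_replicas: int = 1, max_replicas: int = 10,
                     tolerance: float = 0.1) -> int:
    """Apply the documented HPA ratio formula, clamped to assumed replica bounds."""
    ratio = current_metric / target_metric
    # Skip scaling when the metric is already close enough to the target.
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas
    wanted = math.ceil(current_replicas * current_metric / target_metric)
    return max(min_replicas, min(max_replicas, wanted))


# Metric values below are average CPU utilization in percent; the target is 60.
print(desired_replicas(4, 90, 60))   # 6: load above target, scale up
print(desired_replicas(6, 20, 60))   # 2: load below target, scale down
print(desired_replicas(5, 63, 60))   # 5: within tolerance, no change
print(desired_replicas(8, 300, 60))  # 10: capped at max_replicas
```

The upper bound is what keeps a traffic spike from turning into an unbounded cloud bill, while the lower bound preserves a baseline of capacity when traffic is quiet.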

To explore how elasticity and autoscaling support business growth, see Be Cloud: The Next-Gen Platform for Scalable Business.

Key benefits include:

- Portability: Write code once and run it anywhere, from AWS to a private data center.
- Efficiency: Containers share the host OS kernel, making them much lighter and faster to start than virtual machines.
- Resilience: Orchestration tools automatically replace failed containers, maintaining service availability.

For practical advice on building resilient, scalable environments and the fundamentals of cloud infrastructure, explore What Is Cloud Infrastructure? A Beginner’s Guide to Cloud Computing.

The strategic value of this approach is echoed by industry leaders. Lee Caswell, a senior vice president at Nutanix, observed that 90% of organizations report some of their applications are containerized, highlighting how universal this shift has become.

The Kubernetes Ecosystem: Beyond Orchestration

Kubernetes provides powerful container orchestration, but production environments rely on a broader ecosystem of supporting tools. These components enhance deployment, networking, security, and monitoring, turning Kubernetes into a complete application platform.

Common additions include Helm for simplified deployments, service meshes like Istio or Linkerd for secure service communication, container registries for image storage, managed Kubernetes services such as EKS, AKS, or GKE, and observability tools like Prometheus or Grafana. Together, they enable reliable, scalable, enterprise-grade operations, but they also add complexity that often requires specialized expertise to manage.

The MSP Value Add: Avoiding the DevOps Talent War

While the technology is powerful, mastering it is difficult. Kubernetes is notoriously complex to configure and secure. It requires deep expertise in networking, storage, and security policies. For many companies, building an in-house team to manage this infrastructure is prohibitively expensive. The demand for skilled professionals is intense, even as the ecosystem grows to 15.6 million cloud-native developers globally.

This is where a Managed Service Provider (MSP) becomes a strategic asset. By partnering with a leading provider of managed IT services, organizations gain immediate access to a team of certified Kubernetes experts without the overhead of recruiting and retaining full-time staff. An MSP provides 24/7 monitoring, security patching, and architectural guidance, ensuring that the container environment is robust and compliant.

For more perspective on managed cloud operations, seamless scaling, and consolidating your infrastructure, see Breaking the Infrastructure Bottleneck: The Cloud Solution Behind a Unified Approach.

Hiring an MSP solves several critical problems:

- Cost Efficiency: You avoid the high salaries and recruitment fees associated with senior DevOps engineers.
- Continuous Operations: MSPs offer round-the-clock support, which is difficult for a small in-house team to sustain.
- Best Practices: Experts bring knowledge from hundreds of deployments, avoiding common pitfalls in security and scaling.

For companies that are modernizing legacy systems, an MSP acts as a bridge. They handle the "plumbing" of the infrastructure so the internal engineering team can focus entirely on building the product.

Scaling Without the Headcount

A logistics software company wanted to move their tracking platform to the cloud to handle holiday shipping volumes. They estimated they needed three senior DevOps engineers to build and maintain the Kubernetes cluster, which would cost over $450,000 annually. Instead, they hired a specialized MSP. The MSP migrated their legacy app to a containerized environment in three months and managed the infrastructure for a fraction of the cost of an internal team. The logistics firm successfully handled record volumes in December with zero downtime.

Containerization vs. Orchestration

Containerization acts like a standardized shipping box for software, wrapping the code and all its necessary files into a single lightweight package (like a Docker container) so it runs reliably on any computer. Orchestration is the automated traffic control system (like Kubernetes) that manages these boxes - scheduling where they go, restarting them if they crash, and scaling the number of boxes up or down based on customer demand.

Conclusion

The shift from fragile monolithic servers to robust containerized systems is not just a technical upgrade; it is a fundamental change in how businesses deliver value. By adopting containerization and orchestration tools, companies gain the ability to deploy faster, scale effortlessly, and reduce the risk of downtime. While the technology landscape is complex, you do not have to navigate it alone. Leveraging the expertise of a managed service provider allows you to harness the full power of cloud-native infrastructure while keeping your internal team focused on innovation.

Looking to unify your cloud tooling or gain expert support for rapid modernization? See how Managed IT Services can help your team accelerate transformation and reduce operational headaches.