What This Article Covers

This article will help you decide whether multi-cloud DevOps is right for your organization. You will learn what drives teams to distribute workloads across providers, how multi-cloud improves deployment frequency and resilience when done deliberately, what tools and practices separate mature execution from surface-level adoption, and where the complexity is real enough to demand careful planning and operational discipline.

What is multi-cloud DevOps?

Multi-cloud DevOps is the practice of distributing software delivery workloads across two or more cloud providers to improve deployment frequency, reduce release bottlenecks, strengthen failover readiness, and give teams more control over where specific workloads run best. It relies on automation, infrastructure as code, standardized CI/CD pipelines, and unified observability to maintain consistency and control across environments.

Why DevOps Teams Have Moved Beyond Single-Cloud Models

The shift toward multi-cloud is no longer theoretical. According to the Flexera 2025 State of the Cloud Report, 70% of organizations now embrace hybrid cloud strategies, using at least one public and one private cloud. That figure reflects something deeper than vendor diversification. DevOps teams are learning that different clouds do different things well, and matching workloads to the right environment can meaningfully improve both delivery speed and operational outcomes.

The reasons behind this shift tend to be practical rather than abstractly strategic:

- One cloud may offer better machine learning tooling for a data-intensive microservice.
- Another might provide lower-latency networking in a region critical to a customer base.
- A third could be the only provider with a compliance certification required for a regulated workload.

When teams limit themselves to a single platform, they often end up building workarounds for gaps that another provider has already solved. Multi-cloud removes that constraint and gives DevOps engineers the freedom to choose the most effective tool for each stage of the delivery pipeline.

This does not mean teams adopt multi-cloud for the sake of variety. It means they treat cloud providers like components of a broader system, each chosen for a specific operational reason. That mindset is what separates intentional multi-cloud strategies from accidental sprawl.

To dive deeper into the topic, see Multi-Cloud Strategy: Building a Winning Cloud Strategy for 2026 and Beyond.

Matching Workloads to the Right Cloud

A mid-sized financial services company runs its CI/CD pipelines on AWS because of deep integration with its existing container orchestration setup. At the same time, it deploys its customer-facing analytics dashboard on Google Cloud, where BigQuery offers faster query performance at lower cost. Neither workload would perform as well if forced onto the other platform, and the team manages both through a shared infrastructure-as-code framework.

How Multi-Cloud Accelerates Software Delivery

Speed is often the first benefit DevOps teams notice when they start distributing workloads across providers. But the gains are not automatic. They come from deliberate architectural choices that align each piece of the delivery pipeline with the cloud environment where it runs most efficiently.

For example, a team might provision ephemeral testing environments, meaning short-lived environments created on demand and destroyed after use, on whichever provider offers the fastest spin-up time in a given region. That alone can shave minutes off every CI/CD cycle. Multiply that by dozens of deployments per day, and the cumulative time savings become significant.
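To make the arithmetic concrete, here is a minimal Python sketch of picking the provider with the fastest measured spin-up time in a region and estimating the daily time saved. The provider labels, timings, and run counts are invented for illustration, and the helper names (`fastest_provider`, `daily_minutes_saved`) are hypothetical rather than part of any tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SpinUpSample:
    provider: str   # hypothetical provider label
    region: str
    seconds: float  # measured time until a test environment is ready


def fastest_provider(samples: list[SpinUpSample], region: str) -> str:
    """Return the provider with the lowest measured spin-up time in a region."""
    candidates = [s for s in samples if s.region == region]
    if not candidates:
        raise ValueError(f"no spin-up samples for region {region!r}")
    return min(candidates, key=lambda s: s.seconds).provider


def daily_minutes_saved(seconds_saved_per_run: float, runs_per_day: int) -> float:
    """Convert a per-run saving into minutes saved per day."""
    return seconds_saved_per_run * runs_per_day / 60


# Illustrative measurements only; real numbers would come from pipeline metrics.
samples = [
    SpinUpSample("provider-a", "eu-west", 210.0),
    SpinUpSample("provider-b", "eu-west", 90.0),
    SpinUpSample("provider-a", "us-east", 75.0),
]

choice = fastest_provider(samples, "eu-west")
saved = daily_minutes_saved(210.0 - 90.0, runs_per_day=40)
print(choice, saved)
```

With these assumed numbers, a two-minute saving per run across 40 runs adds up to 80 minutes per day. The selection only makes sense if the samples are refreshed regularly, since spin-up times vary over time.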

Multi-cloud also supports delivery speed through:

- Environment specialization: Staging on one cloud, production on another, each tuned for its purpose.
- Parallel deployment pipelines: Pushing updates to multiple regions simultaneously using different providers.
- Reduced bottlenecks: Avoiding single-provider rate limits or capacity constraints during peak deployment windows.

The key enabler here is automation. Without infrastructure as code (IaC), standardized CI/CD templates, and automated provisioning, managing multiple clouds would slow teams down rather than speed them up. Tools like Terraform, Pulumi, and cloud-agnostic Kubernetes configurations allow teams to define infrastructure once and deploy it consistently across providers.
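The "define once, deploy consistently" idea can be sketched in plain Python, independent of any particular IaC tool. The example below is an illustration under stated assumptions, not how Terraform or Pulumi work internally: `ServiceSpec`, `SIZE_CATALOG`, `render`, and the instance size names are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ServiceSpec:
    """Provider-neutral description of a service, written once."""
    name: str
    cpu: int
    memory_gb: int
    replicas: int


# Hypothetical size catalogs; real instance type names differ per provider.
SIZE_CATALOG = {
    "cloud-a": {(2, 4): "a.small", (4, 8): "a.medium"},
    "cloud-b": {(2, 4): "b-standard-2", (4, 8): "b-standard-4"},
}


def render(spec: ServiceSpec, provider: str) -> dict:
    """Translate the neutral spec into a provider-specific deployment plan."""
    try:
        size = SIZE_CATALOG[provider][(spec.cpu, spec.memory_gb)]
    except KeyError as exc:
        raise ValueError(f"no size mapping for {spec.name} on {provider}") from exc
    return {
        "provider": provider,
        "service": spec.name,
        "instance_size": size,
        "count": spec.replicas,
    }


spec = ServiceSpec(name="checkout-api", cpu=2, memory_gb=4, replicas=3)
plans = [render(spec, provider) for provider in ("cloud-a", "cloud-b")]
for plan in plans:
    print(plan)
```

The design choice worth noting is that provider differences live in one lookup table, so the spec itself never mentions a provider. Failing loudly on a missing mapping is deliberate: a silent fallback would let environments drift apart.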

Nearly half of all workloads and data now reside in the public cloud, which means the pipeline itself, not just the application, increasingly lives in multi-cloud territory. Teams that automate aggressively across those environments are the ones delivering faster.

For a step-by-step breakdown of building automated cloud pipelines, tech stacks, and hybrid DevOps workflows, see Tech-Driven DevOps: How Automation is Changing Deployment.

Multi-Cloud Deployment in Practice

A SaaS company serving both U.S. and European markets runs its production deployments on Azure in Europe for data residency compliance, while using AWS in North America for faster content delivery. Both environments are provisioned through the same Terraform modules, which means a single merge to the main branch triggers parallel, provider-specific deployments without manual intervention.
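A rough sketch of that fan-out, assuming a CI job that receives the merged commit and calls one deployment function per target. The two deploy functions are placeholders that only return a status string; in a real pipeline they would invoke whatever provisioning step the team uses.

```python
from concurrent.futures import ThreadPoolExecutor


def deploy_azure_europe(commit: str) -> str:
    # Placeholder for the provider-specific deployment step (hypothetical).
    return f"azure-europe: deployed {commit}"


def deploy_aws_north_america(commit: str) -> str:
    # Placeholder for the provider-specific deployment step (hypothetical).
    return f"aws-north-america: deployed {commit}"


# One entry per deployment target; adding a region means adding one line here.
TARGETS = {
    "azure-europe": deploy_azure_europe,
    "aws-north-america": deploy_aws_north_america,
}


def deploy_all(commit: str) -> dict[str, str]:
    """Run every target's deployment in parallel and collect the results."""
    with ThreadPoolExecutor(max_workers=len(TARGETS)) as pool:
        futures = {name: pool.submit(fn, commit) for name, fn in TARGETS.items()}
        # result() re-raises any exception from a worker, so a failed
        # deployment fails the whole job instead of passing silently.
        return {name: future.result() for name, future in futures.items()}


results = deploy_all("abc1234")
print(results)
```

Threads fit here because deployment steps mostly wait on network calls. Whether a failure in one region should block or roll back the other is a policy decision this sketch leaves open.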

Building Resilience Through Distributed Cloud Operations

Speed matters, but so does reliability. One of the strongest arguments for multi-cloud in a DevOps context is resilience. When your entire operation depends on a single provider, any outage, whether regional or service-specific, can halt deployments and affect production traffic.

Distributing workloads across providers creates natural failover paths. If one cloud experiences degraded performance, traffic can shift to another without requiring a full disaster recovery event. This is not just theoretical. Teams that architect for multi-cloud resilience treat it as a core part of their deployment strategy, not an afterthought.

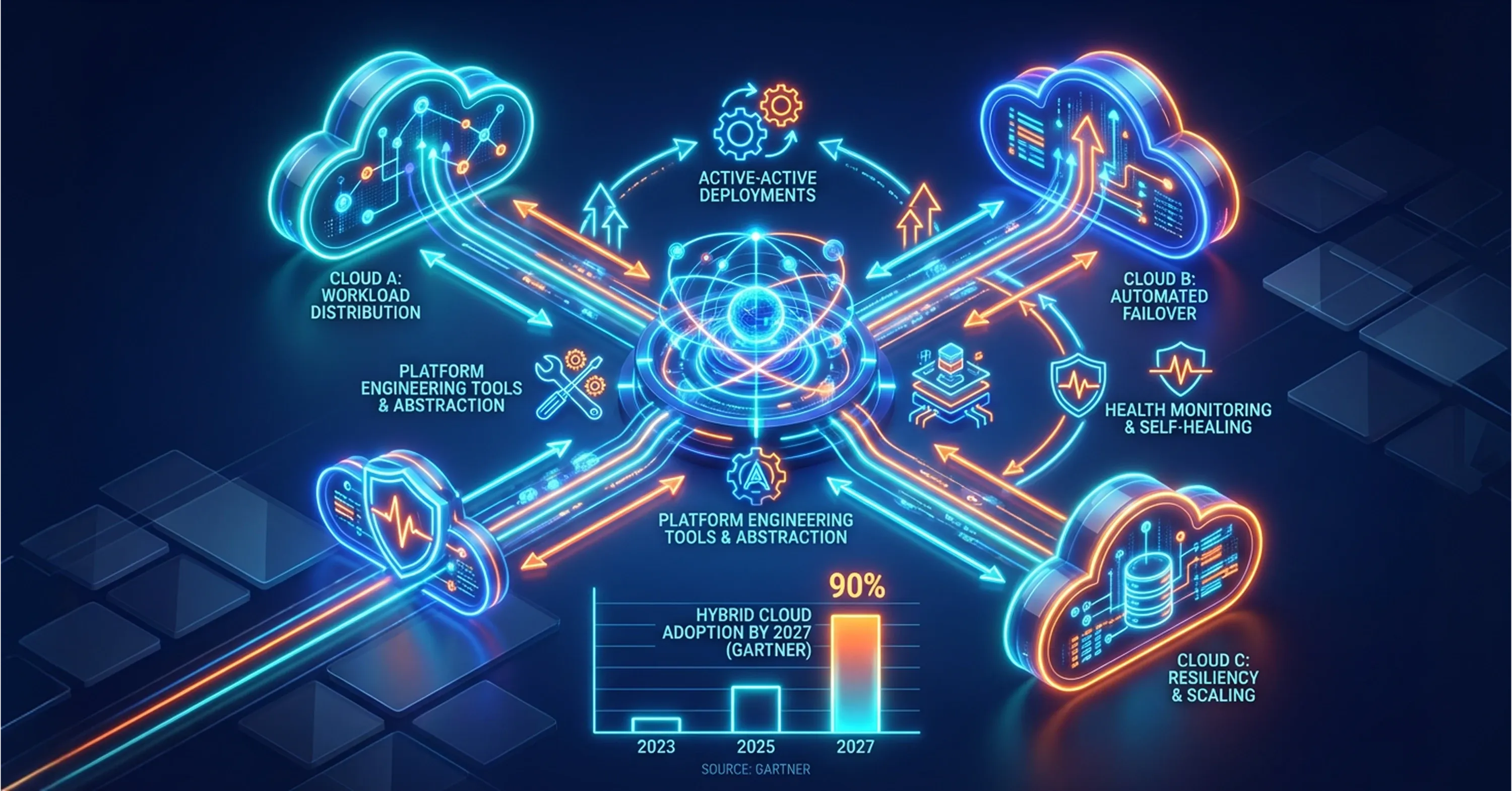

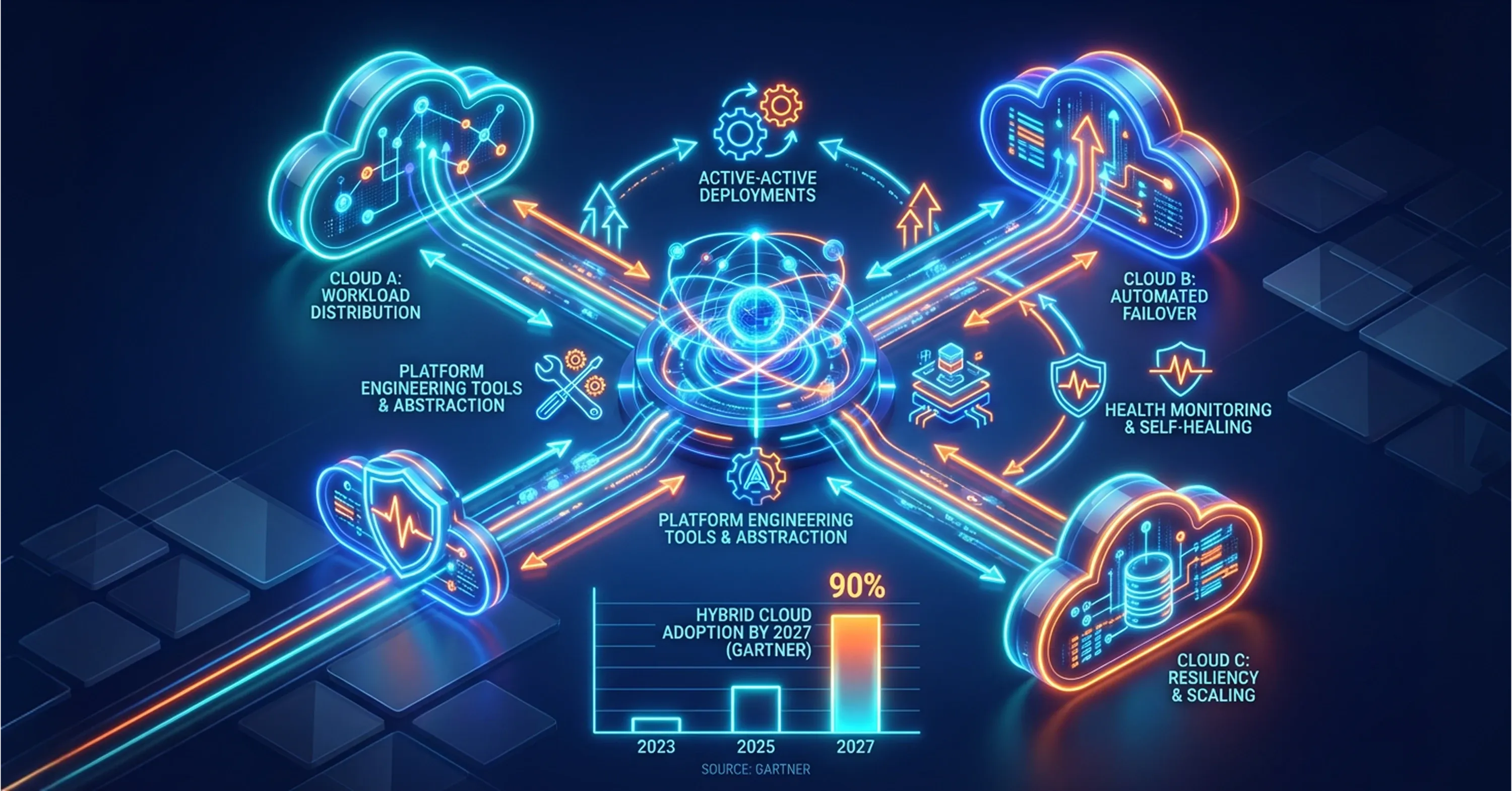

Effective resilience in a multi-cloud setup depends on several practices:

- Active-active deployments: Running the same service on two or more clouds so traffic can be rerouted instantly.
- Cross-cloud health monitoring: Using observability platforms that provide a unified view of performance across providers.
- Automated failover logic: Defining policies that detect failures and redirect workloads without human intervention.
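As one possible shape for automated failover logic, the sketch below marks a provider unavailable after a number of consecutive failed health checks and routes to the next preferred one. The `FailoverRouter` class, the threshold of three, and the provider labels are assumptions made for illustration. Production setups usually delegate this to DNS or a global load balancer.

```python
from dataclasses import dataclass, field


@dataclass
class FailoverRouter:
    providers: list[str]        # ordered by preference
    failure_threshold: int = 3  # consecutive failed checks before skipping
    _failures: dict[str, int] = field(default_factory=dict)

    def record_check(self, provider: str, healthy: bool) -> None:
        """Reset the counter on success, increment it on failure."""
        if healthy:
            self._failures[provider] = 0
        else:
            self._failures[provider] = self._failures.get(provider, 0) + 1

    def is_available(self, provider: str) -> bool:
        return self._failures.get(provider, 0) < self.failure_threshold

    def active_provider(self) -> str:
        """Return the most preferred provider that is still available."""
        for provider in self.providers:
            if self.is_available(provider):
                return provider
        raise RuntimeError("no healthy provider available")


router = FailoverRouter(providers=["cloud-a", "cloud-b"])
before = router.active_provider()

for _ in range(3):  # three consecutive failed checks on the primary
    router.record_check("cloud-a", healthy=False)
during = router.active_provider()

router.record_check("cloud-a", healthy=True)  # primary recovers
after = router.active_provider()
print(before, during, after)
```

Requiring several consecutive failures avoids flapping on a single slow response. Failing back immediately on the first healthy check, as done here, is a simplification that real policies often soften with a recovery window.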

Gartner forecasts that 90% of organizations will adopt a hybrid cloud approach through 2027, and resilience is a major driver behind that trajectory. While hybrid cloud combines private and public infrastructure, multi-cloud goes further, distributing workloads across two or more public cloud providers to eliminate single points of failure and maximize deployment flexibility. For DevOps teams specifically, resilience is not just about uptime. It is about maintaining the ability to deploy continuously, even when parts of the infrastructure experience issues.

This is also where the relationship between DevOps and platform engineering becomes important. Platform teams increasingly build internal developer platforms that abstract provider-specific details, giving DevOps engineers a consistent interface for deploying to any cloud. That abstraction layer makes resilience patterns easier to implement and maintain.

The Real Complexity Behind Multi-Cloud DevOps

Multi-cloud is not a free upgrade. Every additional provider adds operational surface area, and without disciplined management, the complexity can erode the very benefits teams set out to gain. This is the part of the conversation that often gets underplayed, but it matters enormously for long-term success.

The most common challenges include:

- Fragmented visibility: Monitoring tools that work well on one cloud may not cover another, creating blind spots.
- Inconsistent security posture: Identity management, access controls, and encryption standards can vary across providers, increasing risk.
- Governance drift: Policies defined for one environment may not translate cleanly to another, leading to configuration inconsistencies.
- Cost management difficulty: 84% of surveyed organizations say managing cloud spend is their top cloud challenge, and multi-cloud only makes that harder without centralized cost tracking.
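Centralized cost tracking can start small. The sketch below assumes billing data from each provider has already been exported and normalized into rows with `provider`, `workload`, and `usd` fields. That row format, the workload names, and the amounts are all hypothetical; real billing exports differ per provider and need their own normalization step.

```python
from collections import defaultdict

# Hypothetical billing rows after export and normalization.
billing_rows = [
    {"provider": "cloud-a", "workload": "checkout-api", "usd": 1200.0},
    {"provider": "cloud-b", "workload": "checkout-api", "usd": 450.0},
    {"provider": "cloud-b", "workload": "analytics", "usd": 800.0},
    {"provider": "cloud-a", "workload": None, "usd": 150.0},  # missing tag
]


def cost_by_workload(rows: list[dict]) -> dict[str, float]:
    """Sum spend per workload across all providers."""
    totals: dict[str, float] = defaultdict(float)
    for row in rows:
        # Untagged spend is kept visible rather than dropped.
        totals[row["workload"] or "untagged"] += row["usd"]
    return dict(totals)


totals = cost_by_workload(billing_rows)
for workload, usd in sorted(totals.items()):
    print(f"{workload}: ${usd:,.2f}")
```

Grouping by workload rather than by account shows what a service costs in total, even when it runs on two providers. The size of the "untagged" bucket is a useful signal of how much tagging discipline is still missing.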

Tooling fragmentation is particularly painful for DevOps teams. A CI/CD pipeline that runs smoothly on one provider may require significant adaptation for another. Container runtimes, networking configurations, and secret management all behave differently across clouds. Without standardization at the tooling and process level, teams spend more time adapting to environments than delivering software.

For a blueprint on rapid rightsizing, idle resource audits, and continuous cost control across multi-cloud environments, review Cloud Cost Optimization: How to Cut Costs and Improve Cloud Performance.

This is precisely why 60% of organizations now use managed service providers to manage cloud spending. Partners like ABS, which specialize in infrastructure management, cloud computing, and cybersecurity, help organizations maintain operational control across complex multi-cloud environments without overburdening internal teams. Similarly, 59% of organizations use or plan to use a dedicated FinOps team to bring financial discipline to cloud operations, an approach that is essentially required when budgets span multiple providers.

The takeaway is straightforward: multi-cloud improves delivery speed and resilience only when it is paired with architectural discipline, automation maturity, and clear operational ownership. Without those foundations, it introduces more problems than it solves.

What Separates Mature Multi-Cloud DevOps from Surface-Level Adoption

The difference between teams that succeed with multi-cloud and those that struggle comes down to a few core principles. Mature teams treat multi-cloud as an engineering discipline, not a procurement decision.

Mature multi-cloud DevOps practices share these characteristics:

- Infrastructure is defined in code and version-controlled, regardless of the target cloud. Explore more in Infrastructure as Code (IaC): How Infrastructure as Code Automates Cloud Deployments.
- CI/CD pipelines are provider-agnostic at the orchestration layer, with provider-specific steps isolated and well-documented.
- Observability spans all environments through a unified platform, giving teams a single view of deployments, errors, and performance. For guidance on robust monitoring and observability, see CI/CD Monitoring: Continuous Monitoring for Performance, Security, and Compliance.
- Security policies are codified and enforced automatically, not applied manually per environment.
- Cost allocation is tracked at the workload level, not just the account level, enabling accurate chargeback and optimization.

Teams that check these boxes tend to treat each cloud as a deployment target within a broader system, not as a separate operational silo. That architectural consistency is what allows them to move fast without losing control.
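To illustrate what codified, automatically enforced security policies can look like, here is a minimal sketch that runs the same checks against resources from any provider. The resource fields (`encrypted`, `public`), the rule names, and the resource list are assumptions for the example. Dedicated policy engines offer far more than this.

```python
from typing import Callable

Resource = dict
Policy = Callable[[Resource], bool]

# Each policy returns True when the resource complies.
POLICIES: dict[str, Policy] = {
    "encryption-at-rest": lambda r: r.get("encrypted") is True,
    "no-public-access": lambda r: r.get("public") is False,
}


def violations(resources: list[Resource]) -> list[tuple[str, str]]:
    """Return (resource name, violated rule) pairs across all providers."""
    return [
        (resource["name"], rule)
        for resource in resources
        for rule, check in POLICIES.items()
        if not check(resource)
    ]


# Hypothetical inventory, normalized from two providers.
resources = [
    {"name": "eu-db", "provider": "cloud-a", "encrypted": True, "public": False},
    {"name": "us-bucket", "provider": "cloud-b", "encrypted": False, "public": True},
]

found = violations(resources)
for name, rule in found:
    print(f"{name} violates {rule}")
```

Run as a pipeline step, a non-empty result would fail the build before anything is deployed. Because the rules are written against a normalized resource shape, the same policy set applies regardless of the target cloud.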

Organizations that lack this maturity often end up with "multi-cloud in name only," meaning they have workloads on multiple providers but manage each one independently, with separate tools, separate processes, and separate teams. That fragmentation negates most of the speed and resilience advantages that motivated the multi-cloud move in the first place.

Conclusion

The way DevOps teams use the cloud has fundamentally changed. What once was a hosting decision is now a delivery strategy, with teams deliberately distributing workloads across providers to gain speed, resilience, and operational flexibility. But multi-cloud success is not about how many providers you use. It is about how consistently and deliberately you manage them. The teams that get this right treat automation, observability, and governance as non-negotiable foundations, not optional extras. Those that skip the discipline end up with complexity that slows them down rather than cloud infrastructure that propels them forward.