Overview

If your organization is already using multiple clouds but still lacks cost control, governance consistency, and workload portability, this is the operating model you need next.

You will see:

- Why multi-provider environments dominate and why they are not going away
- The pillars of a fiscally precise, resilient, and agile architecture
- How a “cloud economist and orchestrator” role aligns technology choice with financial discipline
- Real-world examples from companies that refined sprawling estates into flexible portfolios

By the end, CIOs, CTOs, and FinOps leaders will have a clear blueprint for steering complex estates toward long-term architectural freedom.

In practice, many organizations discover that multi-cloud introduces operational complexity faster than expected. Cloud bills rise unpredictably, tooling is duplicated across AWS, Azure, and GCP, and identity and security policies diverge between environments. Teams often lack unified visibility across providers, and workloads stay where they were first deployed rather than where they fit best. Cross-cloud disaster recovery is frequently weak, and already overloaded platform teams are left to absorb the growing operational burden.

From Migration to Maturation: The New Cloud Strategy Conversation

First, consider the market signals. Public cloud spend is still soaring, projected to hit $723.4 billion in 2025. Yet modernization pressure is intense: 82% of buyers say their environment needs an overhaul. Those numbers confirm what enterprise architects feel daily. Standing up workloads is no longer enough.

A mature multi-cloud posture recognizes three big shifts:

Together these shifts push strategy beyond migration tasks to recurring economic and architectural decisions. Multi-cloud is increasingly an operating challenge, not just an architecture choice. Many organizations arrive at multi-cloud accidentally, as different teams adopt different providers for AI, analytics, or regional workloads. The result is often fragmented governance, inconsistent security policies, and limited cost visibility. Successful strategies therefore treat multi-cloud as an operating model - defining clear governance, financial control, and orchestration across providers.

This distinction highlights the difference between accidental and intentional multi-cloud.

Accidental environments emerge organically as teams adopt different providers independently, while intentional multi-cloud strategies are designed from the start with clear governance, cost control, and workload placement policies.

Without this strategic approach, multi-cloud initiatives commonly fail due to fragmented tooling, inconsistent IAM policies, limited visibility, and weak cross-cloud disaster recovery. For this reason, many enterprises rely on managed multi-cloud services that provide centralized governance, financial optimization, and orchestration across providers.

A mature approach therefore targets continuous rightsizing, governance, and provider neutrality rather than one-time moves. For an in-depth exploration of how multi-cloud models offer both flexibility and fresh challenges - such as inconsistent IAM, cost tracking, and compliance - see Balancing Cloud Computing and Cloud Security: Best Practices.

Pillar 1: Modular Architecture for Resilience and Choice

A modular architecture slices applications into logical domains (for example: data, stateless services, AI inference) that can map independently to the cloud with the best fit.

Key components include:

- Service abstractions: container orchestration, service mesh, and API gateways hide provider specifics.
- Data portability: platform-agnostic formats (Parquet, Avro) and replication pipelines reduce storage hostage risk.
- Decoupled identity: centralized IAM that federates to each provider prevents platform-specific privilege trees.
- Automated infrastructure as code (IaC): Terraform, Pulumi, or Crossplane templates create repeatable builds across vendors.
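To make the idea of a service abstraction concrete, here is a minimal Python sketch. The `BlobStore` interface, the `InMemoryStore` backend, and the provider names are all hypothetical and exist only for illustration; a real adapter would wrap one provider's SDK behind the same two methods.

```python
from typing import Protocol


class BlobStore(Protocol):
    """Provider-neutral storage interface (hypothetical, not a real SDK)."""

    def put(self, key: str, data: bytes) -> None: ...
    def get(self, key: str) -> bytes: ...


class InMemoryStore:
    """Stand-in backend; a real adapter would wrap one provider's SDK."""

    def __init__(self, provider: str) -> None:
        self.provider = provider
        self._objects: dict[str, bytes] = {}

    def put(self, key: str, data: bytes) -> None:
        self._objects[key] = data

    def get(self, key: str) -> bytes:
        return self._objects[key]


def replicate(key: str, source: BlobStore, target: BlobStore) -> None:
    """Copy one object between backends without naming either provider."""
    target.put(key, source.get(key))


# Illustrative usage with two placeholder providers.
primary = InMemoryStore("provider-a")
secondary = InMemoryStore("provider-b")
primary.put("orders/2025-01.parquet", b"example bytes")
replicate("orders/2025-01.parquet", primary, secondary)
```

Because `replicate` depends only on the interface, swapping a backend should not require changes to the calling code, which is the property the component list above is after.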

Benefits surface quickly:

- Faster adoption of emerging features without full re-platforming
- Boundary-level failover rather than full-application outages
- Negotiating leverage with each hyperscaler

Once modules can move, resilience patterns add confidence:

- Active-active data clusters across two regions or clouds
- Pilot-light disaster recovery on a lower-cost provider
- Real-time observability that treats every cloud as a node in one fabric

Together they let teams shift workloads for cost, latency, or regulatory demands in hours, not quarters.

If you want to see how elastic infrastructure, robust connectivity, and unified observability drive the next generation of cloud architectures, review Be Cloud: The Next-Gen Platform for Scalable Business.

Ending thought: Modularity supplies the mechanical freedom needed for financial agility, our next focus.

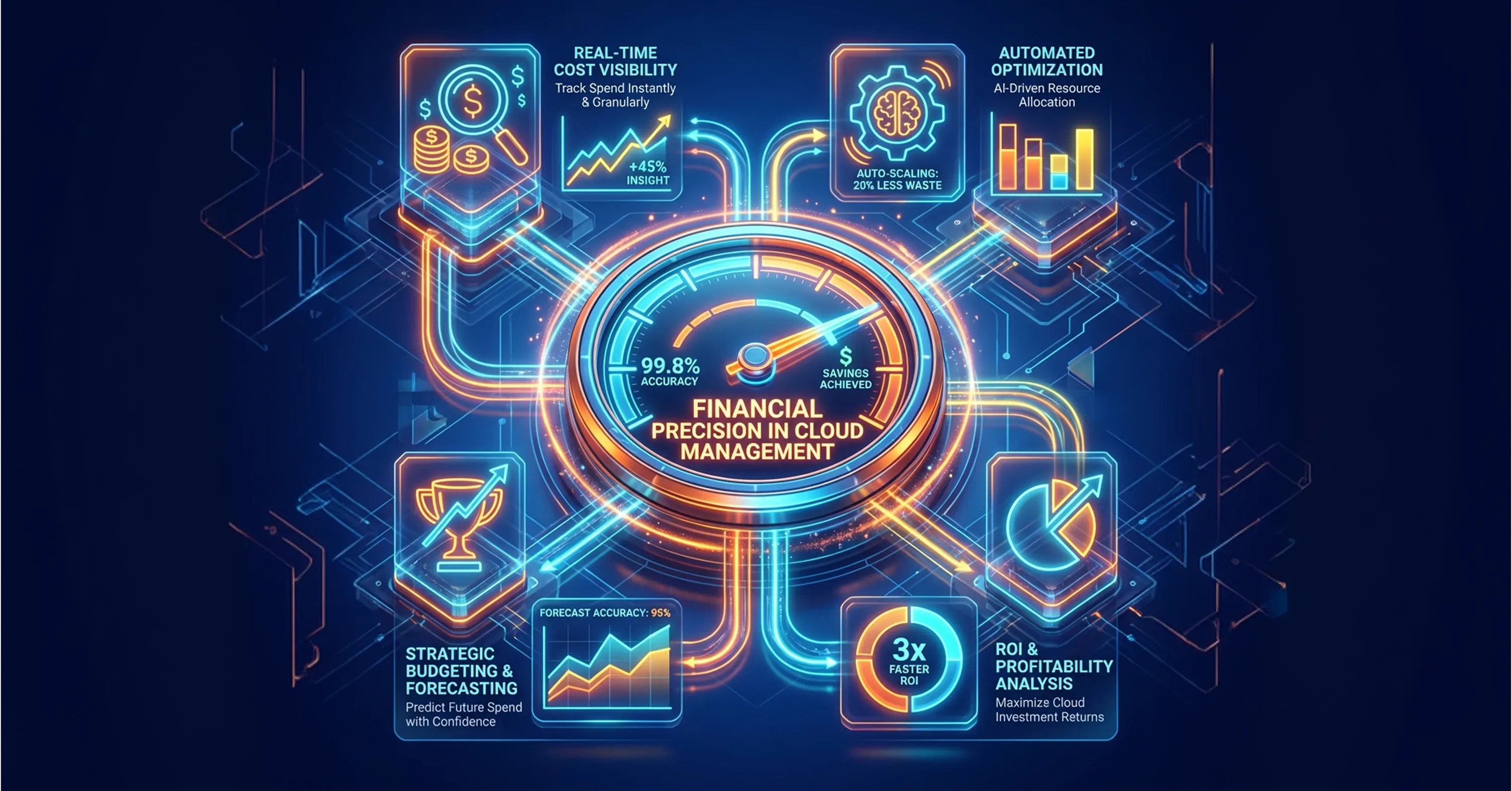

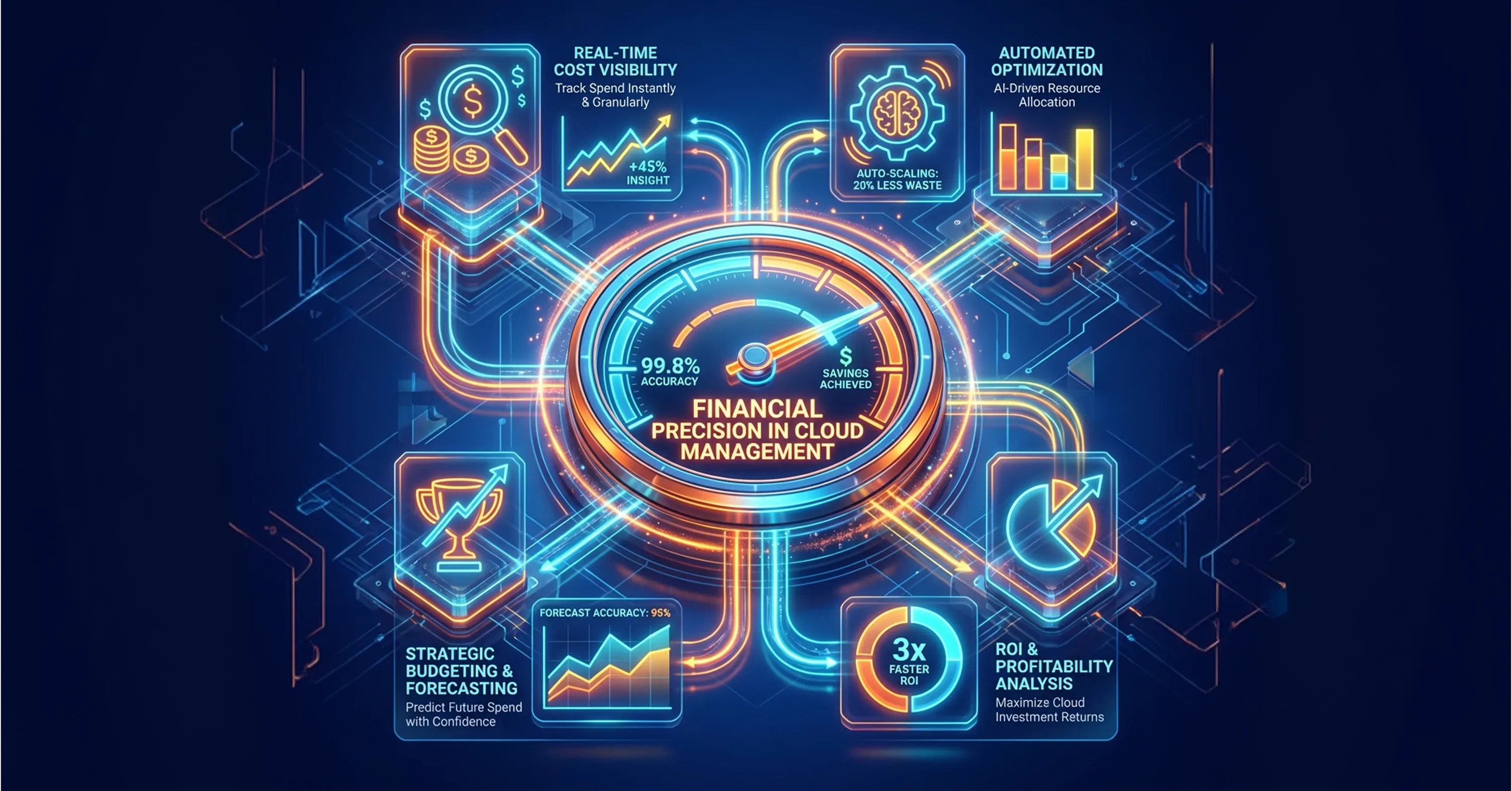

Pillar 2: Financial Precision - Acting as Your Cloud Economist

Modularity unlocks movement, but movement must be profitable. FinOps, the practice of aligning cloud spending with business value, anchors this discipline.

A “cloud economist and orchestrator” steward owns three mandates:

- Measure: unified cost telemetry across providers, normalized to common tags
- Model: scenario analysis for usage spikes, commitment purchases, and reserved capacity
- Act: automate rightsizing, schedule shutdowns, commit purchases, or migrate when savings exceed switching friction
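The "Measure" mandate can be sketched in a few lines of Python. This is an illustration under stated assumptions, not a billing integration: the record layout, the tag keys in `TAG_ALIASES`, and the sample rows are all invented, and real provider exports have their own schemas.

```python
from collections import defaultdict

# Hypothetical mapping from each provider's tag key to one common key.
TAG_ALIASES = {
    "aws": {"CostCenter": "cost_center"},
    "azure": {"costcenter": "cost_center"},
    "gcp": {"cost-center": "cost_center"},
}


def normalize(record: dict) -> dict:
    """Return a copy of the record whose tags use the common key names."""
    aliases = TAG_ALIASES[record["provider"]]
    tags = {aliases.get(key, key): value for key, value in record["tags"].items()}
    return {"provider": record["provider"], "usd": record["usd"], "tags": tags}


def spend_by_cost_center(records: list[dict]) -> dict[str, float]:
    """Sum spend per cost center; untagged spend stays visible."""
    totals: dict[str, float] = defaultdict(float)
    for record in map(normalize, records):
        totals[record["tags"].get("cost_center", "untagged")] += record["usd"]
    return dict(totals)


# Made-up billing rows for illustration only.
rows = [
    {"provider": "aws", "usd": 120.0, "tags": {"CostCenter": "checkout"}},
    {"provider": "azure", "usd": 80.0, "tags": {"costcenter": "checkout"}},
    {"provider": "gcp", "usd": 50.0, "tags": {"cost-center": "search"}},
    {"provider": "gcp", "usd": 10.0, "tags": {}},
]
totals = spend_by_cost_center(rows)
```

Keeping an explicit "untagged" bucket is a deliberate choice in this sketch: spend that cannot be attributed is usually the first thing a FinOps review wants to see.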

Why is this crucial?

Practical levers the economist pulls:

- Purchase optimization
  - Match long-lived workloads to savings plans or reserved instances.
  - Hedge compute portfolios: reserve 60% on your primary provider, keep 40% flexible across clouds.
- Workload placement
- Showback and chargeback
  - Tag costs to products, then expose dashboards to business unit leaders weekly.
  - Gamify savings goals: teams keep 30% of every dollar they save to fund innovation features.
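The 60/40 hedge can be reasoned about with simple arithmetic. The Python sketch below is a simplified model, not a pricing calculator: the function name, the single on-demand rate, and the 30% commitment discount in the usage note are assumptions, and only the 60% committed share comes from the text above.

```python
def blended_hourly_cost(
    baseline_units: float,
    actual_units: float,
    on_demand_rate: float,
    committed_discount: float,
    committed_share: float = 0.60,
) -> float:
    """Hourly cost when a share of baseline capacity is pre-committed.

    Committed units are paid for whether or not they are used; anything
    above the commitment is billed at the on-demand rate.
    """
    committed_units = baseline_units * committed_share
    committed_cost = committed_units * on_demand_rate * (1 - committed_discount)
    overflow_units = max(0.0, actual_units - committed_units)
    return committed_cost + overflow_units * on_demand_rate
```

With a baseline of 100 units at a rate of 1.00 and an assumed 30% discount, running at the full 100 units costs roughly 82 per hour instead of 100. If demand drops to 40 units, the commitment alone costs roughly 42, more than the 40 that pure on-demand would have cost. That downside is why the flexible 40% exists.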

For hands-on strategies to connect engineering and finance, optimize daily cloud spend, and embed automation into your FinOps feedback loops, see Cloud Cost Optimization: How to Cut Costs and Improve Cloud Performance.

Concluding link: Fiscal precision feeds decision loops that drive orchestration, covered next.

Eliminating Idle Cloud Spend Through FinOps Automation

A North American retailer built a FinOps dashboard consolidating Azure, AWS, and Google Cloud invoices. The FinOps lead discovered idle dev/test environments accounting for 22% of monthly spend. Automated sleep schedules trimmed $3.4 million annually, money the CTO reallocated to customer-facing AI pilots.
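A rough way to estimate what a sleep schedule is worth is to compare scheduled running hours with the 168 hours in a week. The sketch below is illustrative only: the function names and the schedule in the usage note are assumptions, the case study's own schedule is not stated, and the model assumes cost scales linearly with running hours (storage and other always-on charges typically do not).

```python
HOURS_PER_WEEK = 7 * 24


def idle_fraction(active_hours_per_day: float, active_days_per_week: int) -> float:
    """Share of the week an environment can sleep under a fixed schedule."""
    active_hours = active_hours_per_day * active_days_per_week
    return 1 - active_hours / HOURS_PER_WEEK


def scheduled_savings(
    monthly_cost: float,
    active_hours_per_day: float,
    active_days_per_week: int,
) -> float:
    """Estimated monthly savings, assuming cost is proportional to uptime."""
    return monthly_cost * idle_fraction(active_hours_per_day, active_days_per_week)
```

For example, an environment that only needs to run 12 hours a day on 5 weekdays is active for 60 of 168 hours, so `idle_fraction(12, 5)` comes out at about 0.64.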

Pillar 3: Cloud Orchestration for Continuous Optimization

Financial governance flags the “why”; orchestration tools handle the “how” at runtime.

Core orchestration layers:

- Deployment orchestrators: Kubernetes, Nomad, or ECS schedule workloads across clusters.
- Policy engines: Open Policy Agent or HashiCorp Sentinel enforce guardrails at commit time.
- Workflow automation: GitOps pipelines or event-driven functions trigger builds, tests, and deploys as code changes.
- Cross-cloud traffic steering: DNS-based routing, global load balancers, or layer-7 mesh direct users to the closest or healthiest replica.

Decision signals - latency, cost, carbon footprint, compliance rules - feed into placement algorithms. For example:

- Redirect GPU inference to the lowest-cost spot pool under 40 ms latency.
- Failover to a secondary provider during regional outages.
- Shift EU consumer data to EU-only regions to satisfy GDPR policy.
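The three rules above can be combined into one small placement function. This Python sketch is a simplification under stated assumptions: the `Pool` fields, pool names, prices, and latencies are invented, and treating data residency as a single region-group filter is far cruder than a real GDPR policy.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Pool:
    name: str
    region_group: str   # e.g. "eu" or "us" (hypothetical grouping)
    hourly_usd: float
    latency_ms: float
    healthy: bool = True


def place(
    pools: list[Pool],
    max_latency_ms: float = 40.0,
    required_region_group: Optional[str] = None,
) -> Optional[Pool]:
    """Pick the cheapest healthy pool that meets latency and residency rules."""
    eligible = [
        pool for pool in pools
        if pool.healthy
        and pool.latency_ms < max_latency_ms
        and (required_region_group is None
             or pool.region_group == required_region_group)
    ]
    # Returns None when no pool qualifies, so callers can raise an exception.
    return min(eligible, key=lambda pool: pool.hourly_usd, default=None)


# Made-up candidates for illustration only.
pools = [
    Pool("a-us-spot", "us", 1.10, 35.0),
    Pool("b-eu-spot", "eu", 1.40, 28.0),
    Pool("b-us-spot", "us", 0.90, 55.0),                  # cheapest, but too slow
    Pool("a-eu-spot", "eu", 1.25, 31.0, healthy=False),   # simulated outage
]
```

With these sample values, `place(pools)` selects `a-us-spot`, since the cheaper `b-us-spot` misses the latency bound. `place(pools, required_region_group="eu")` selects `b-eu-spot`, because the cheaper EU pool is marked unhealthy. An empty or fully ineligible list returns `None`.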

The orchestrator must surface exceptions quickly. Observability stacks aggregate metrics, logs, and traces from every cloud into a single pane.

For practical advice on consolidating cloud platforms, unifying controls across vendors, and enabling seamless policy and automation, investigate Breaking the Infrastructure Bottleneck: The Cloud Solution Behind a Unified Approach.

Finally, human process sits above tools:

- Weekly triage of anomalies
- Monthly architecture councils to approve new cloud services
- Quarterly business reviews aligning spend to revenue targets

Takeaway: orchestration operationalizes both agility and economics.

Holiday Surge Resilience Through Automated Cross-Cloud Failover

During the 2024 holiday surge, an e-commerce platform ran into GPU shortages on its primary provider. Its orchestrator policy shifted real-time recommendation engines to a secondary cloud’s GPUs within 12 minutes, keeping page-load times under 200 ms and protecting $28 million in projected revenue.

What Is a Mature Multi-Cloud Strategy?

A mature multi-cloud strategy is a continuous management approach in which workloads, data, and identities are broken into modular components, orchestrated across two or more cloud providers, and governed by real-time financial controls. The goal is to maximize resilience and innovation while maintaining vendor independence and predictable cost.

The MSP as Your Cloud Economist and Orchestrator

Designing a mature multi-cloud architecture is only the first step. Operating it continuously - across providers, regions, services, pricing models, and regulatory constraints - requires specialized expertise and constant attention. Few organizations maintain the in-house talent, tooling, and governance maturity to perform this role at scale.

This is where a Managed Service Provider acting as a cloud economist and orchestrator becomes essential.

Rather than managing infrastructure provider by provider, the MSP operates the estate as a single economic and technical system. Its mandate is not just uptime, but sustained architectural agility and financial discipline.

Key responsibilities include:

- Continuous cost optimization across providers, commitments, and spot markets
- Independent workload placement decisions based on performance, price, and compliance
- Cross-cloud governance and policy enforcement
- Vendor-neutral architecture guidance that prevents lock-in
- Rapid adoption of new hyperscaler capabilities without destabilizing core systems

An effective orchestrator also strengthens negotiating leverage. With portable workloads and real-time visibility into alternatives, enterprises are not forced into unfavorable pricing or long-term technical dependencies.

Equally important is operational continuity. Multi-cloud environments generate massive telemetry streams, policy events, and configuration drift. A dedicated orchestrator monitors these signals, resolves anomalies, and continuously tunes placement decisions so the estate evolves with business needs.

The result is a cloud platform that behaves less like a collection of vendor contracts and more like a managed portfolio - resilient, adaptable, and economically aligned with organizational goals.

As a cloud economist and orchestrator, the MSP empowers organizations to maintain total architectural freedom while systematically harvesting the best-of-breed capabilities unique to each hyperscaler.

Conclusion

Cloud migration solved the first challenge: getting to the cloud. The next decade will be defined by how effectively organizations operate within it. A mature multi-cloud strategy shifts the focus from one-time adoption to continuous optimization, enabling enterprises to balance innovation, cost control, and architectural flexibility.

By combining modular design, disciplined cloud economics, and automated orchestration, organizations can transform fragmented environments into a resilient, adaptable platform for long-term growth. This approach allows teams to adopt new technologies without disruptive replatforming, respond quickly to regulatory demands, and redirect spending toward strategic initiatives.

Ultimately, a well-executed multi-cloud strategy turns the cloud from a collection of vendors into a dynamic portfolio of capabilities, one that evolves alongside business priorities and positions the enterprise to capture future opportunities with confidence.

If your organization is navigating the complexity of multi-cloud environments and looking to improve cost control, governance, and workload portability, consider discussing your architecture with an experienced cloud strategy team. Book a call to explore how a structured multi-cloud operating model can help you build a more resilient and cost-efficient cloud environment.