Overview

This article explains why DevOps solutions have become the operating framework behind modern product development and continuous innovation. You'll learn how DevOps accelerates delivery through CI/CD pipelines, removes friction between teams, supports automation across the software lifecycle, and creates the conditions for ongoing experimentation. More importantly, you'll see what actually changes when organizations adopt these practices, what goes wrong, and what the trade-offs look like in practice.

How DevOps Creates the Foundation for Faster Innovation

Innovation depends on speed, but not reckless speed. It requires the ability to test hypotheses, ship changes, gather feedback, and iterate, all without destabilizing production systems. DevOps solutions provide this foundation by connecting development, testing, infrastructure, and deployment into a single integrated workflow.

Before DevOps, most organizations relied on siloed teams and sequential handoffs. Developers wrote code, then passed it to operations for deployment, often weeks or months later. This fragmented model made experimentation slow and expensive. DevOps replaces that fragmentation with shared ownership, automated pipelines, and continuous feedback loops.

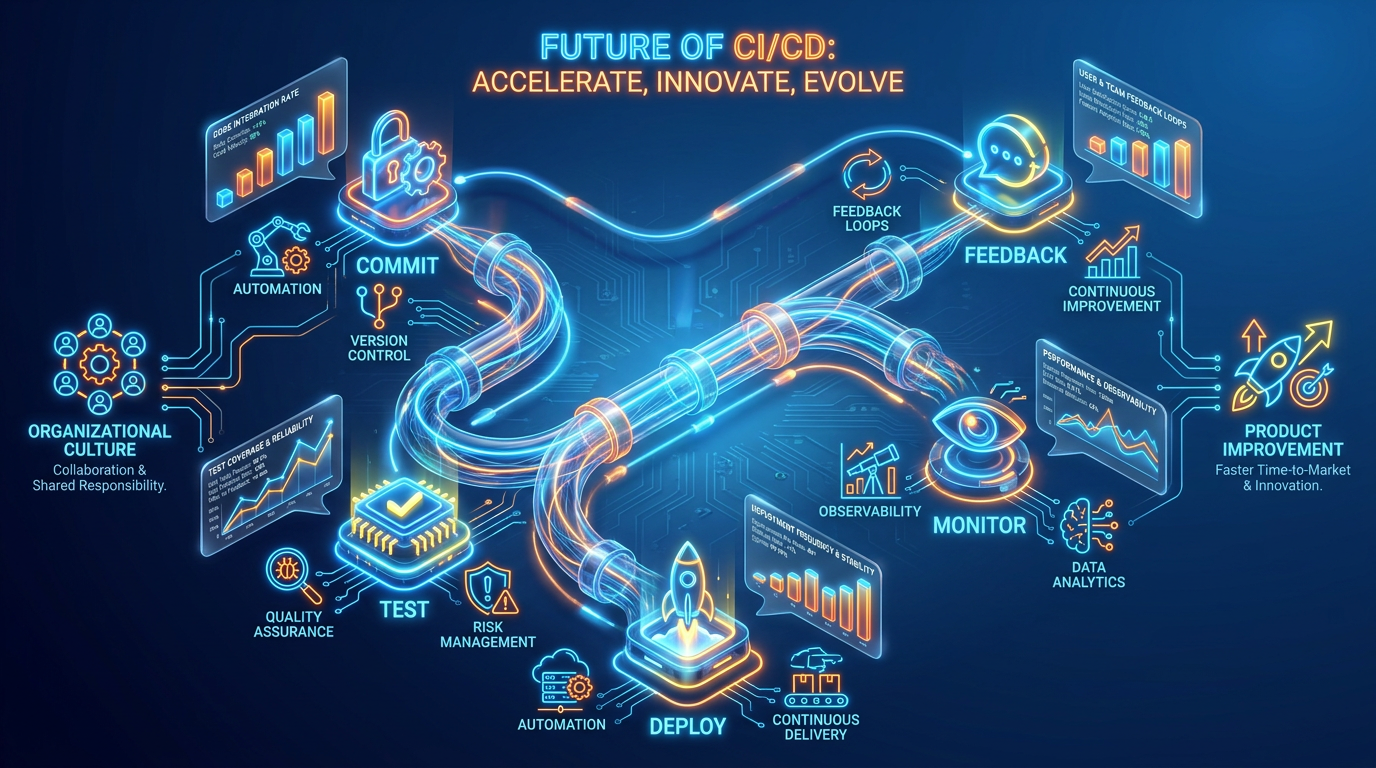

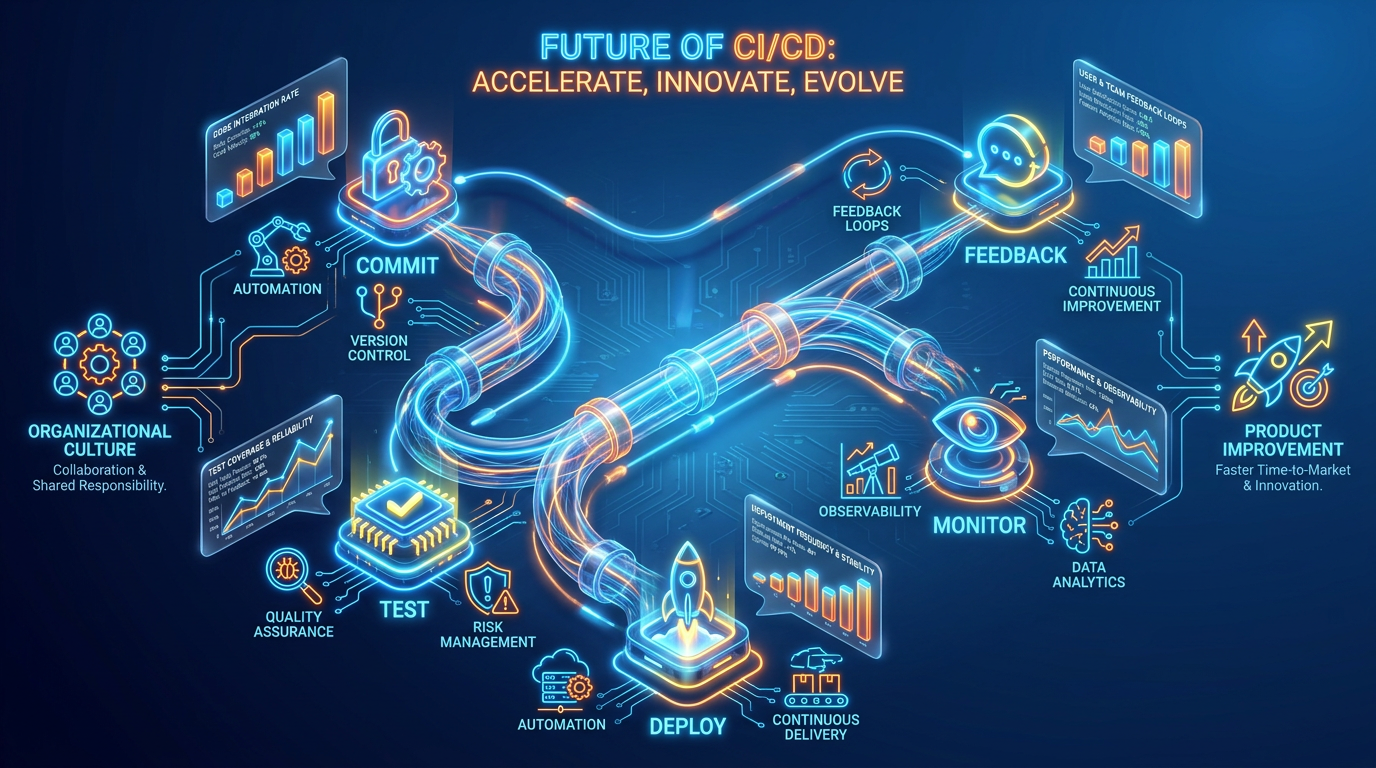

The core capabilities that make this possible include:

- Continuous Integration/Continuous Delivery (CI/CD): Automated pipelines that build, test, and deploy code changes multiple times per day. Mature teams track this through deployment frequency and change failure rate. In DORA's benchmarks, top-performing teams deploy several times per day with a change failure rate below 15%. For a deeper dive into how automation drives these benefits, see CI/CD Automation: How CI/CD Pipeline Automation Powers Modern Software Delivery.

- Infrastructure as Code (IaC): Managing servers and environments through version-controlled configuration files rather than manual setup. This also enables drift detection: when running infrastructure diverges from its declared state, teams catch it before it causes incidents. To see how automation, drift remediation, and governance come together, read Infrastructure as Code (IaC): How Infrastructure as Code Automates Cloud Deployments.

- Automated testing and monitoring: Catching defects early and tracking system health in real time so teams can respond to issues before users notice them.

- Cross-functional collaboration: Breaking down walls between development, operations, security, and quality assurance teams.

Together, these capabilities reduce the cost of change. When change is cheap and safe, teams experiment more freely.
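To make the two CI/CD metrics mentioned above concrete, here is a minimal sketch of how deployment frequency and change failure rate could be computed from a deployment log. The `Deployment` record and its fields are hypothetical; a real pipeline would pull this data from its own CI/CD or incident tooling.

```python
from dataclasses import dataclass
from datetime import date


@dataclass(frozen=True)
class Deployment:
    """One production deployment record (hypothetical schema)."""
    day: date
    caused_failure: bool  # True if the change degraded service and needed remediation


def deployment_frequency(deployments: list[Deployment], days_in_window: int) -> float:
    """Average number of deployments per day over the reporting window."""
    if days_in_window <= 0:
        raise ValueError("days_in_window must be positive")
    return len(deployments) / days_in_window


def change_failure_rate(deployments: list[Deployment]) -> float:
    """Fraction of deployments that caused a failure in production."""
    if not deployments:
        return 0.0
    failures = sum(1 for d in deployments if d.caused_failure)
    return failures / len(deployments)


if __name__ == "__main__":
    # Illustrative data only: 20 deployments over a 5-day window, 2 of which failed.
    history = [
        Deployment(day=date(2025, 1, 6 + i % 5), caused_failure=(i in (3, 11)))
        for i in range(20)
    ]
    print(f"deploys/day: {deployment_frequency(history, days_in_window=5):.1f}")
    print(f"change failure rate: {change_failure_rate(history):.0%}")
```

What counts as a "failure" is a definition each team has to agree on up front; the numbers are only comparable over time if that definition stays stable.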

What organizations typically underestimate is how much this shifts ownership. Developers become responsible for operability, not just functionality. Operations engineers start reviewing code. Security teams must engage earlier in the pipeline rather than gatekeeping at the end. These role shifts create real friction during adoption. Teams accustomed to clean handoff boundaries suddenly share accountability for uptime, and not everyone adjusts smoothly. The gain is speed and shared context. The cost is organizational discomfort and a longer ramp-up period than most roadmaps account for.

How DevOps Translates into Measurable Business Impact

Nationwide, the financial services company, illustrates this well. After implementing DevOps practices, the organization achieved a 70% reduction in system downtime and a 50% improvement in code quality. Those results didn't come from a single tool purchase. They came from rethinking how teams collaborate, automate, and deliver software across the entire lifecycle.

This connection between delivery speed and product quality is precisely why DevOps has moved beyond engineering teams and into strategic planning conversations.

Why Continuous Delivery Is a Strategic Advantage

Shipping faster matters, but what really drives innovation is the ability to ship continuously and learn from each release. DevOps solutions make this possible by shortening the distance between an idea and its real-world impact.

Consider what happens in a traditional release cycle. Teams bundle dozens of features into quarterly releases, making each deployment high-risk and difficult to troubleshoot. If something breaks, isolating the cause is painful. If users don't like a feature, feedback arrives months too late.

DevOps flips this model. With CI/CD, organizations can deploy small, incremental changes multiple times a day. Each release carries less risk, and each one generates faster feedback. This creates a rhythm of continuous improvement rather than slow, batched change.

The strategic outcomes are measurable:

- Faster time to market: New features and fixes reach users in hours or days, not months. Elite performers, per DORA metrics, report a lead time for changes of under one hour.

- Higher deployment confidence: Automated testing and rollback capabilities reduce fear of failure. Teams should track rollback rate explicitly. If more than 5-10% of deployments require rollback, the pipeline has gaps.

- Tighter feedback loops: Real-time monitoring and observability tools let teams see how changes perform immediately, though telemetry governance matters here. Raw data volume is not insight, and the cost of storing and processing telemetry has become a line item worth managing.

- Greater responsiveness: When market conditions shift or customers report issues, teams can act quickly. MTTR (mean time to recovery) is the metric that captures this. Elite teams recover in under one hour; most organizations should aim to get below four hours.
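Rollback rate and MTTR from the list above are simple to compute once the underlying events are recorded. The sketch below assumes you already have a deployment count, a rollback count, and a list of incident detection and resolution timestamps; the function names and the sample numbers are illustrative, not taken from any particular tool.

```python
from datetime import datetime, timedelta


def rollback_rate(total_deployments: int, rollbacks: int) -> float:
    """Fraction of deployments that had to be rolled back."""
    if total_deployments <= 0:
        raise ValueError("total_deployments must be positive")
    return rollbacks / total_deployments


def mean_time_to_recovery(incidents: list[tuple[datetime, datetime]]) -> timedelta:
    """Average of (resolved - detected) across incidents."""
    if not incidents:
        raise ValueError("at least one incident is required")
    total = sum((resolved - detected for detected, resolved in incidents), timedelta())
    return total / len(incidents)


if __name__ == "__main__":
    # Illustrative numbers only.
    rate = rollback_rate(total_deployments=80, rollbacks=6)
    start = datetime(2025, 1, 6, 9, 0)
    incidents = [
        (start, start + timedelta(minutes=30)),
        (start, start + timedelta(minutes=90)),
    ]
    mttr = mean_time_to_recovery(incidents)

    print(f"rollback rate: {rate:.1%}")
    print(f"MTTR: {mttr}")
    # The 5% line is the lower edge of the 5-10% range discussed above.
    if rate > 0.05:
        print("rollback rate is in the range that suggests pipeline gaps")
```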

Organizations that combine DevOps with standardized and fully virtualized infrastructure have seen IT costs drop by as much as 25%, freeing up budget that can be reinvested in product development and experimentation. For practical insight into how these platforms enable continuous delivery and cost management, discover From Pipelines to Platforms: How Cloud Fuels DevOps Innovation.

From High-Frequency Releases to Continuous Learning Systems

Streaming platforms like Netflix and Spotify are widely reported to deploy code thousands of times per day. This isn't recklessness. It's disciplined delivery supported by automated pipelines, feature flags, and canary deployments. Each release is small, testable, and reversible. The result is a product that evolves continuously based on real user behavior, not assumptions made in a planning meeting six months ago.
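One common way to make a release small and reversible is a percentage-based feature flag: a stable hash of the user ID decides who sees the new behavior, and the percentage can be raised or dropped to zero without a redeploy. The sketch below is a generic illustration of that idea, not the mechanism any named company uses; `in_rollout` and the flag name are hypothetical.

```python
import hashlib


def in_rollout(flag_name: str, user_id: str, rollout_percent: float) -> bool:
    """Deterministically decide whether a user is inside a percentage rollout.

    The same (flag, user) pair always lands in the same bucket, so raising the
    percentage only adds users and never flips existing ones back and forth.
    """
    if not 0 <= rollout_percent <= 100:
        raise ValueError("rollout_percent must be between 0 and 100")
    digest = hashlib.sha256(f"{flag_name}:{user_id}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 10_000  # bucket in 0..9999
    return bucket < rollout_percent * 100


if __name__ == "__main__":
    users = [f"user-{i}" for i in range(10_000)]
    exposed = sum(in_rollout("new-checkout", u, 5) for u in users)
    print(f"{exposed} of {len(users)} users see the canary at a 5% rollout")
```

Setting the percentage to 0 acts as the rollback path: the code stays deployed, but no user reaches it.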

This kind of delivery cadence is increasingly accessible to mid-size organizations that invest in the right combination of culture, process, and automation. But getting there requires something most tool-selection conversations skip entirely: changing how teams work together.

One warning sign that's easy to miss is a cloud delivery model that outpaces your security model. This happens gradually. Deployment frequency increases and new services spin up faster, but security reviews, threat modeling, and vulnerability remediation cycles don't scale at the same rate. The gap shows up as findings that sit unresolved for weeks, SBOMs (Software Bills of Materials) that are generated but never reviewed, and incident post-mortems that keep surfacing the same class of misconfiguration. If your mean time to remediate a critical vulnerability is longer than your mean deployment interval, your delivery speed has exceeded your security capacity. That's the point where moving fast stops being a competitive advantage and starts being a liability exposure.
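The comparison in that last test is easy to automate. The sketch below assumes you can export two lists of durations: time-to-remediate for critical vulnerabilities and the gaps between consecutive production deployments. The function names and sample values are hypothetical.

```python
from datetime import timedelta
from statistics import mean


def mean_interval(durations: list[timedelta]) -> timedelta:
    """Arithmetic mean of a list of durations."""
    if not durations:
        raise ValueError("at least one duration is required")
    return timedelta(seconds=mean(d.total_seconds() for d in durations))


def security_is_outpaced(
    remediation_times: list[timedelta],
    deploy_intervals: list[timedelta],
) -> bool:
    """True when critical fixes take longer, on average, than the gap between deploys."""
    return mean_interval(remediation_times) > mean_interval(deploy_intervals)


if __name__ == "__main__":
    # Illustrative values: fixes take 10-20 days, deploys happen every 6-18 hours.
    remediation = [timedelta(days=10), timedelta(days=20)]
    deploys = [timedelta(hours=6), timedelta(hours=18)]
    print("mean remediation time:", mean_interval(remediation))
    print("mean deploy interval:", mean_interval(deploys))
    print("security outpaced:", security_is_outpaced(remediation, deploys))
```

A mean hides outliers, so a team relying on this check may also want to look at the slowest remediation, not just the average.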

Beyond Tooling: Culture, Process, and Shared Ownership

It's tempting to treat DevOps as a tooling decision, a matter of selecting the right CI/CD platform or container orchestration system. The organizations that extract the most value from DevOps understand that tools are only one layer. The combination of automation, process discipline, shared ownership, and a delivery culture built around continuous improvement is what produces results.

In practice, this means:

- Development and operations teams share responsibility for system reliability, not just feature delivery.

- Incident response becomes a learning process, with blameless post-mortems that improve future resilience.

- Automation eliminates repetitive manual work, freeing engineers to focus on creative problem-solving.

- Security is integrated early in the pipeline ("shift-left"), rather than applied as a last-minute gate. This includes generating and maintaining a Software Bill of Materials (SBOM) so teams know what's in their software and where it came from. Supply chain attacks are no longer theoretical; they're a recurring reality.

For pragmatic guidance on breaking down silos, building shared platforms, and creating golden paths for developers, explore Tech DevOps: The Core Engine Behind Agile Businesses.

What commonly goes wrong here: teams adopt the tooling layer of DevOps without changing incentive structures or team boundaries. A CI/CD pipeline that still requires three manual approvals and a change advisory board meeting isn't continuous delivery. It's the old process with newer tools. Similarly, organizations often "shift left" on security by adding a scanning step to the pipeline but never assigning clear ownership of remediation. Scanners generate findings. Findings sit in a backlog. Nothing changes.

The trade-off is real. Shared ownership means slower initial decision-making because more people are involved. It means on-call rotations for developers who previously handed off pager duty. It means security engineers embedded in product teams, which dilutes the depth of a centralized security function. These costs are worth paying, but they should be budgeted for explicitly.

Automation Guardrails and AI-Generated Code

As organizations increase automation, guardrails become essential. Policy-as-code frameworks (Open Policy Agent, Sentinel, Kyverno) allow teams to define what's allowed in infrastructure and deployments as version-controlled rules rather than tribal knowledge or manual checklists. Drift detection tools flag when running environments diverge from declared state. Automated rollback triggers revert deployments when error rates or latency exceed defined thresholds.
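Two of these guardrails, drift detection and automated rollback triggers, reduce to small comparisons once the inputs are available. The following is a minimal sketch under stated assumptions: declared and actual state are flat dictionaries, and the error-rate and latency thresholds are placeholder values chosen for illustration. Real tools work against provider APIs and far richer state.

```python
from typing import Any

ABSENT = "<absent>"  # marker for a setting that exists on only one side


def detect_drift(
    declared: dict[str, Any], actual: dict[str, Any]
) -> dict[str, tuple[Any, Any]]:
    """Return {setting: (declared_value, actual_value)} for every difference."""
    drift: dict[str, tuple[Any, Any]] = {}
    for key in sorted(declared.keys() | actual.keys()):
        want = declared.get(key, ABSENT)
        have = actual.get(key, ABSENT)
        if want != have:
            drift[key] = (want, have)
    return drift


def should_roll_back(
    error_rate: float,
    p95_latency_ms: float,
    *,
    max_error_rate: float = 0.02,       # assumed threshold: 2% of requests failing
    max_p95_latency_ms: float = 500.0,  # assumed threshold: 500 ms at the 95th percentile
) -> bool:
    """True when either post-deploy signal exceeds its threshold."""
    return error_rate > max_error_rate or p95_latency_ms > max_p95_latency_ms


if __name__ == "__main__":
    declared = {"instance_type": "t3.small", "min_replicas": 2, "tls": True}
    actual = {"instance_type": "t3.large", "min_replicas": 2, "debug_port": 9229}
    for setting, (want, have) in detect_drift(declared, actual).items():
        print(f"drift in {setting}: declared={want!r} actual={have!r}")
    print("roll back:", should_roll_back(error_rate=0.05, p95_latency_ms=200.0))
```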

If you're looking for hands-on examples of embedding DevSecOps and compliance automation directly into your pipelines, check out DevSecOps Explained: How to Build Security into Every Stage of Development.

This matters more now than it did two years ago because AI-generated code is entering pipelines at scale. Engineers use LLM-based tools to write infrastructure configurations, application logic, and even security policies. The productivity gain is real, but so is the risk. AI-generated code can introduce subtle vulnerabilities, outdated dependency references, or configurations that pass static analysis but behave unpredictably at runtime. Teams need scanning and review processes for LLM-generated code that treat it with the same rigor as third-party dependencies. Runtime application self-protection (RASP) adds another layer by catching exploitation attempts in production that pre-deploy scanning missed entirely.

Providers like ABS, which deliver managed IT services spanning infrastructure management, cloud computing, and cybersecurity, understand this well. Scalable DevOps operations require more than pipeline configuration. They require integrated delivery models that balance speed with long-term resilience and visibility. To see how managed IT services support this journey, visit Managed IT Services.

From Manual Deployments to Scalable DevOps: How Mid-Size Teams Unlock Continuous Delivery

A mid-size e-commerce company migrating from manual deployments to a DevOps model might start by automating its build and test processes, then gradually introduce infrastructure as code, then implement monitoring dashboards that give both developers and operations staff a shared view of system health. Each step removes a bottleneck. Over twelve months, such a company could move from monthly releases to daily deployments, with fewer production incidents and faster recovery times.

The teams that succeed at this typically designate a platform engineering group to maintain golden paths: pre-approved, well-documented templates for common tasks like provisioning a new service, setting up a CI/CD pipeline, or configuring monitoring. Without golden paths, every team reinvents the wheel and accumulates configuration drift.

DevOps solutions are integrated practices, tools, and cultural principles that unify software development and IT operations, enabling organizations to deliver products faster, more reliably, and with continuous feedback loops that drive ongoing innovation.

What Good Looks Like in Practice

A mature DevOps environment isn't defined by the tools it runs. It's defined by the outcomes it produces consistently.

Here's what that looks like across six dimensions:

- Secure-by-default deployments. Security controls are baked into pipeline templates, not added manually per project. Every new service starts with least-privilege access, encrypted secrets management, and dependency scanning enabled out of the box.

- Reusable IaC templates. Teams provision infrastructure from a shared library of tested, policy-compliant modules. No team writes infrastructure from scratch. Drift between environments is caught automatically, not discovered during incidents.

- Automated policy checks in CI/CD. Policy-as-code frameworks (OPA, Sentinel, Kyverno) enforce compliance requirements at pipeline runtime. Violations block deployment. Exceptions require documented approval, not a workaround.

- Centralized telemetry with cost controls. Logs, metrics, and traces flow into a single observability platform. Retention policies and sampling rules keep costs manageable. Engineers can answer "what broke and why" without jumping between five dashboards.

- Joint DevOps/security metrics. Security and engineering teams track shared KPIs: mean time to remediate vulnerabilities, SBOM coverage, percentage of pipelines with active RASP instrumentation. Separate scorecards mean separate incentives, and separate blind spots.

- Clear ownership of exceptions and incidents. Every runbook names a responsible team. Every open exception has an owner and a resolution deadline. Blameless post-mortems produce documented action items, not just conversation.

If more than two of these are missing or inconsistent across your teams, your DevOps practice is still in the tooling phase, not the operating model phase.
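The "violations block deployment, exceptions require documented approval" rule from the policy-check item above can be sketched in a few lines. This is a plain Python illustration of the gating logic only; it is not the API of OPA, Sentinel, or Kyverno, and the rule names, resource fields, and exception format are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Violation:
    rule: str
    resource: str


def check_policies(resources: list[dict]) -> list[Violation]:
    """Evaluate two example rules against each declared resource."""
    violations: list[Violation] = []
    for res in resources:
        name = res.get("name", "<unnamed>")
        if res.get("public_access", False):
            violations.append(Violation("no-public-access", name))
        if not res.get("encrypted", False):
            violations.append(Violation("encryption-required", name))
    return violations


def deployment_allowed(
    violations: list[Violation],
    approved_exceptions: set[tuple[str, str]],
) -> bool:
    """Allow the deploy only if every violation has a documented exception."""
    return all((v.rule, v.resource) in approved_exceptions for v in violations)


if __name__ == "__main__":
    resources = [
        {"name": "logs-bucket", "public_access": True, "encrypted": True},
        {"name": "orders-db", "encrypted": True},
    ]
    found = check_policies(resources)
    print("violations:", found)
    print("allowed without exception:", deployment_allowed(found, set()))
    print(
        "allowed with exception:",
        deployment_allowed(found, {("no-public-access", "logs-bucket")}),
    )
```

Keeping the exception list in version control alongside the rules is what turns an exception into a documented approval rather than a workaround.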

What to Do Next

If your organization already runs CI/CD pipelines but still treats DevOps as a tooling layer, the highest-value next step is an honest assessment of ownership and process gaps. Ask these questions:

- Who owns remediation when a security scan finds a vulnerability? If the answer is unclear, your shift-left effort is incomplete.

- What is your change failure rate, and do you track it? If you can't answer, you're flying blind on deployment quality.

- Do you have golden paths for common workflows, or does every team build its own? If the latter, you're accumulating drift and duplicated effort across the organization.

- How are you governing AI-generated code entering your pipelines? If there's no review process distinct from human-authored code, you have an unmanaged risk surface.

The organizations that get the most from DevOps in 2025 and beyond are not the ones with the most sophisticated toolchains. They are the ones that have clear ownership models, automated guardrails with policy-as-code, and platform engineering teams that reduce cognitive load for every developer shipping code. Prioritize those three investments. The measurable payoff is fewer failed deployments, faster MTTR, lower cloud waste, and audit readiness that doesn't require a fire drill.

Conclusion

DevOps is no longer a technical upgrade. It's an operating model for how modern companies build, ship, and improve products under real market pressure. The organizations that win are not simply moving faster; they are reducing the cost and risk of change so dramatically that continuous innovation becomes a default state, not an exception. What matters is not whether you have a CI/CD pipeline, but whether your system actually supports fast, safe decision-making. That means clear ownership, automated guardrails, measurable performance, and a culture that treats every deployment as a learning loop. Without those elements, DevOps becomes surface-level tooling layered on top of legacy processes.

The shift is uncomfortable, and the trade-offs are real. But the alternative is slower releases, higher failure risk, and teams that spend more time managing friction than delivering value.

The companies pulling ahead today are the ones that made DevOps a business capability, not an engineering initiative.