Why Traditional DevOps Approaches Are Hitting a Ceiling

The growth of cloud spending tells part of the story. Public cloud spending is predicted to exceed 45% of all enterprise IT spending by 2026, up from less than 17% in 2021. As organizations push more workloads into cloud environments, the complexity of managing those environments has grown faster than most DevOps teams can keep up with.

The symptoms are familiar to anyone running cloud infrastructure at scale:

- Too many disconnected tools across CI/CD, monitoring, security scanning, and infrastructure provisioning
- Unclear ownership of services, especially when multiple teams deploy to the same cloud accounts
- Slow incident investigation because telemetry data lives in separate dashboards that don't talk to each other
- Rising observability costs as data volumes increase without clear strategies for what to collect and retain
- Delivery friction caused by poorly standardized deployment workflows that vary team by team

These aren't niche problems. They're the direct result of scaling DevOps without scaling the underlying operating model. When every team builds its own pipeline, chooses its own monitoring stack, and manages its own infrastructure definitions, the organization ends up with dozens of "DevOps implementations" but no coherent system.

The path forward requires consolidation, not more tools. Leading organizations are responding by treating platforms, automation, security, and observability as connected layers of one cloud operating model.

How Platform Engineering Reduces Operational Drag

A mid-sized fintech company running over 200 microservices across three cloud providers found that its engineering teams were spending roughly 30% of their time on deployment configuration and debugging pipeline failures. After consolidating onto an internal developer platform with standardized templates, deployment lead times dropped by 40% and on-call escalations fell significantly within the first quarter.

This kind of operational strain is exactly what's pushing teams toward platform engineering, the trend that's redefining how DevOps scales.

Platform Engineering as the Foundation for Scalable DevOps

Platform engineering is the practice of building internal platforms that abstract away infrastructure complexity and give development teams self-service access to standardized tools, environments, and deployment workflows. Think of it as building an internal product for your own engineers.

The idea isn't new, but its urgency is. As cloud environments become more distributed, the cognitive load on developers has increased dramatically. Engineers who should be writing application code are instead wrestling with Kubernetes configurations, cloud IAM policies, and CI/CD pipeline YAML. Platform engineering addresses this by creating a curated layer between developers and raw infrastructure.

A well-built internal developer platform typically includes:

- Golden paths for deploying services, meaning pre-configured templates that encode organizational standards for security, logging, and scaling
- Self-service provisioning for environments, databases, and other dependencies
- Built-in policy enforcement so governance happens automatically rather than through manual review gates
- Integrated observability so developers can see the health and performance of their services without configuring monitoring from scratch
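As a rough illustration of the golden-path idea, the sketch below merges a team's minimal service request with platform-owned defaults and rejects attempts to override the settings the platform fixes. All names here (`PLATFORM_DEFAULTS`, `OVERRIDABLE`, `render_service`, and the individual fields) are hypothetical and chosen for the example; a real platform would encode its own standards, most likely in its templating or provisioning tool rather than in plain Python.

```python
from typing import Optional

# Hypothetical platform defaults; a real golden path would encode an
# organization's own standards for security, logging, and scaling.
PLATFORM_DEFAULTS = {
    "log_format": "json",
    "encrypt_at_rest": True,
    "min_replicas": 2,
    "max_replicas": 10,
}

# Settings application teams may tune; everything else is fixed by the platform.
OVERRIDABLE = {"min_replicas", "max_replicas"}


def render_service(name: str, owner: str, overrides: Optional[dict] = None) -> dict:
    """Build a full service definition from a minimal self-service request."""
    overrides = overrides or {}
    blocked = set(overrides) - OVERRIDABLE
    if blocked:
        # Teams keep flexibility on scaling, but not on security or logging.
        raise ValueError(f"not overridable on the golden path: {sorted(blocked)}")
    return {"name": name, "owner": owner, **PLATFORM_DEFAULTS, **overrides}


service = render_service("payments-api", "team-payments", {"max_replicas": 20})
```

The design choice worth noting is the explicit allow-list of overridable fields: it keeps the request small for developers while leaving the platform in control of the standards it is responsible for.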

The key principle is reducing friction without removing control. Platform teams don't dictate how developers write code. They standardize how code gets built, tested, deployed, and observed in production. This distinction matters because it preserves developer velocity while ensuring operational consistency.

The metrics that show whether this model works are specific: the four DORA metrics (deployment frequency, lead time for changes, change failure rate, and mean time to recovery, or MTTR), alongside observability cost per workload, policy violation rate, rollback rate, and developer self-service adoption. Organizations that can't measure these have no baseline for improvement.
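To make the four DORA metrics concrete, here is a minimal sketch that computes them from a list of deployment records. The `Deployment` fields and the `dora_metrics` function are assumptions made for this example; real pipelines record this data in different shapes, and teams differ on details such as using the median or the mean for lead time.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import List, Optional


@dataclass
class Deployment:
    # Hypothetical record shape; adapt to whatever your pipeline emits.
    committed_at: datetime                  # first commit in the change
    deployed_at: datetime                   # when it reached production
    failed: bool = False                    # did it cause a production failure?
    restored_at: Optional[datetime] = None  # when service was restored


def dora_metrics(deployments: List[Deployment], window_days: int) -> dict:
    """Compute the four DORA metrics over a fixed observation window."""
    if not deployments or window_days <= 0:
        raise ValueError("need at least one deployment and a positive window")
    failures = [d for d in deployments if d.failed]
    recoveries = [d.restored_at - d.deployed_at for d in failures if d.restored_at]
    lead_times = [d.deployed_at - d.committed_at for d in deployments]
    return {
        "deploys_per_day": len(deployments) / window_days,
        "median_lead_time": median(lead_times),
        "change_failure_rate": len(failures) / len(deployments),
        # None when no failed deployment has a recorded recovery time.
        "mean_time_to_recovery": (
            sum(recoveries, timedelta()) / len(recoveries) if recoveries else None
        ),
    }
```

Capturing these four numbers before a platform rollout gives the baseline against which later changes can be compared.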

Ownership needs to be explicit.

Platform teams own the internal developer platform, golden paths, deployment templates, and observability infrastructure. Application teams own service definitions, what telemetry matters for their services, and how they consume platform capabilities. Security teams own policy-as-code frameworks and supply chain controls, not manual review gates. SRE and operations teams own reliability targets, incident response workflows, and the feedback loop between production signals and platform improvements.

Blurred ownership is one of the most common reasons platform engineering initiatives stall.
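One lightweight way to keep ownership explicit is to check a service catalog for missing fields. The sketch below assumes a hypothetical catalog structure; the service names, the `owner` and `oncall` fields, and the `unowned_services` helper are all illustrative rather than taken from any particular tool.

```python
# Hypothetical service catalog entries; names and fields are illustrative only.
CATALOG = {
    "payments-api": {"owner": "team-payments", "oncall": "payments-oncall"},
    "ledger-worker": {"owner": "", "oncall": "payments-oncall"},
}

# Fields every service must declare before it counts as owned.
REQUIRED_FIELDS = ("owner", "oncall")


def unowned_services(catalog: dict) -> dict:
    """Map each service to the ownership fields it is missing."""
    gaps = {}
    for service, meta in catalog.items():
        missing = [f for f in REQUIRED_FIELDS if not meta.get(f)]
        if missing:
            gaps[service] = missing
    return gaps


gaps = unowned_services(CATALOG)  # here, "ledger-worker" lacks an owner
```

A check like this could run in CI so that a service with no declared owner is caught before it is deployed to a shared account.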

This model also carries real costs. Building an internal developer platform requires sustained investment in engineering time, tooling, and organizational alignment. Rollout friction is common: developers accustomed to custom setups resist standardization. Cultural resistance can slow adoption more than any technical challenge. Over-centralization is also a genuine risk: when platform teams control too much, they become bottlenecks. The architecture has to balance standardization with enough flexibility that teams aren't fighting the platform to ship.

For a deep dive into this evolution, see Tech DevOps: The Core Engine Behind Agile Businesses for real-world approaches to breaking down silos and increasing engineering flow.

Maturity Matters: Where You Are Shapes What to Build Next

Not all cloud-first organizations are at the same stage. Understanding where you are matters before deciding what to build next.

Early stage: Teams manage infrastructure manually, pipelines vary by team, and there are no shared standards for deployment, logging, or security. Governance happens through reviews, not automation.

Mid stage: Some golden paths exist, but adoption is inconsistent. Platform teams are forming but still reactive. Observability is partially unified. Policy enforcement is manual or tool-specific.

Mature stage: A fully governed internal developer platform handles self-service provisioning, standardized deployment, telemetry governance, and AI guardrails. Platform teams operate as product teams with SLAs. Security and compliance are embedded by default.

Most enterprises today sit between early and mid. The gap to mature isn't a tooling problem; it's an operating model problem.

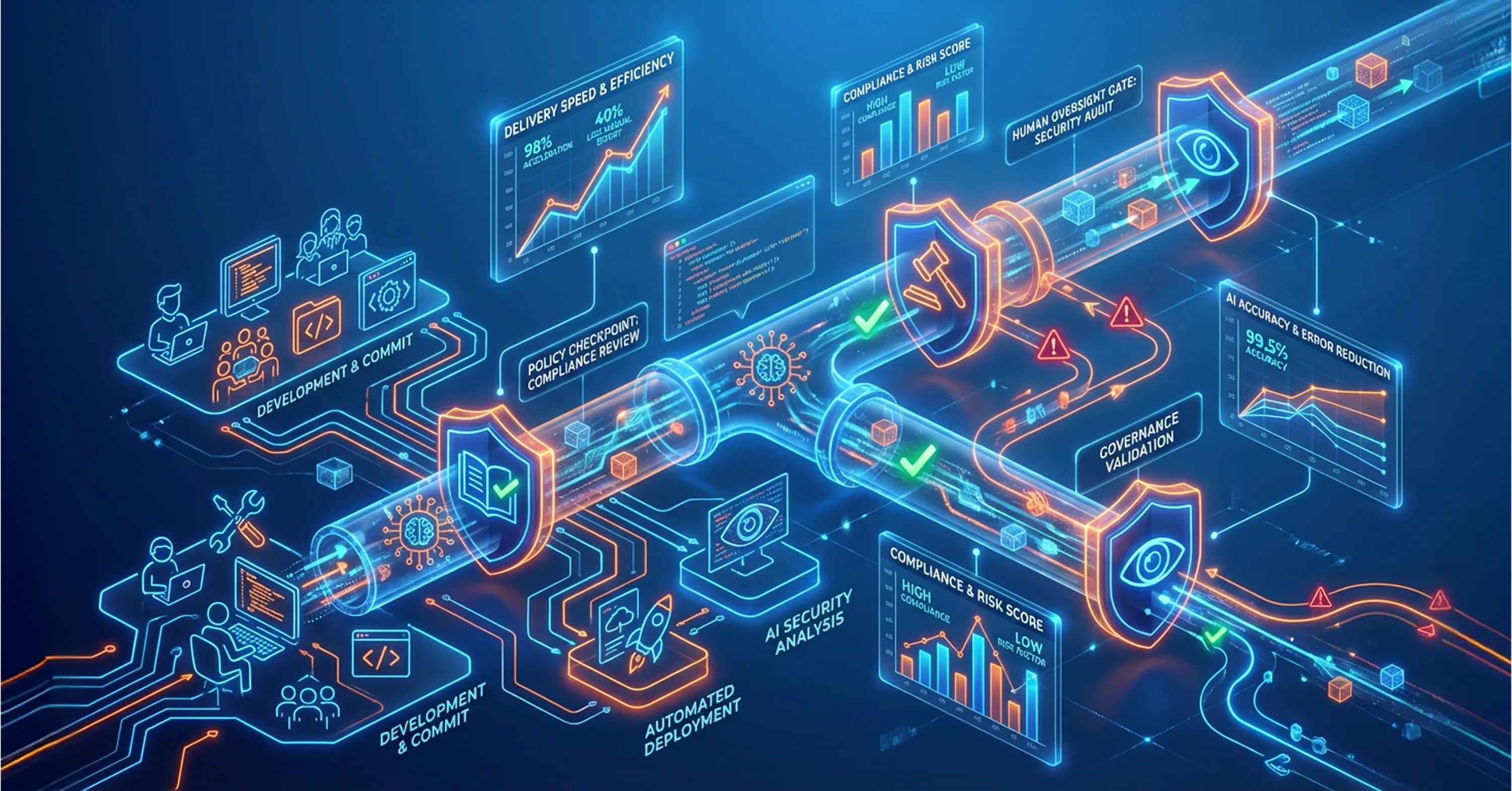

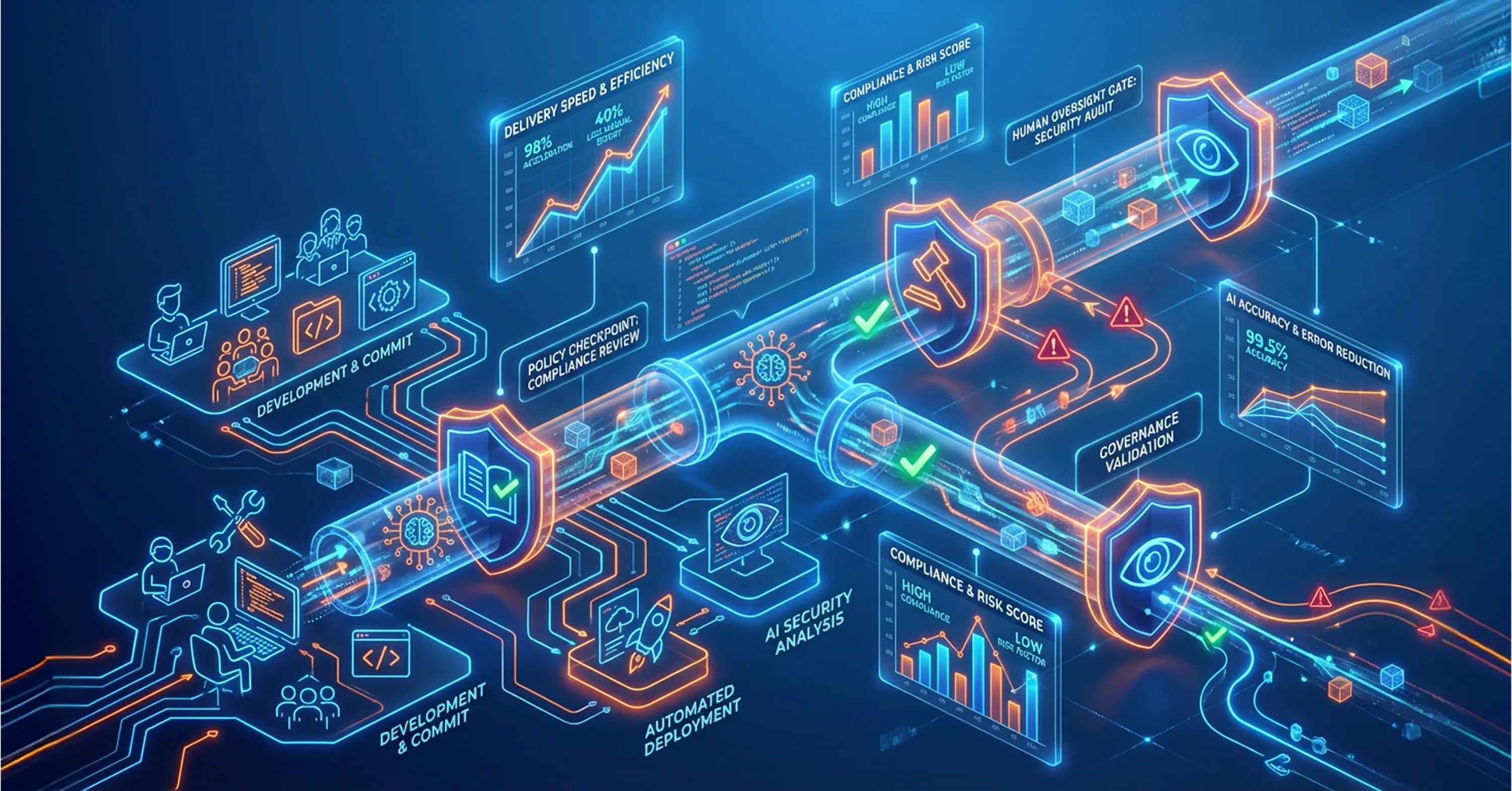

AI in Software Delivery: Opportunity Meets Governance

AI is already changing how software gets written, tested, and deployed. Code assistants generate boilerplate. AI-powered testing tools identify edge cases. Automated analysis helps triage production incidents. But the more important shift is still ahead.

Forecasts suggest that by 2030, 70% of developers will partner with autonomous AI agents, shifting human developers toward planning, design, and orchestration. In parallel, 70% of organizations are expected to embed AI agents in DevOps and DevSecOps pipelines to execute development and security workflows. The scale of investment is massive: agentic AI is projected to account for $1.3 trillion in IT spending by 2029.

This isn't a distant future. Teams are already integrating AI into their delivery pipelines. But speed without governance creates new risks. AI-generated code can introduce subtle vulnerabilities. Automated deployments driven by AI agents need clear boundaries and human oversight.

The organizations getting this right are pairing AI adoption with:

- Explicit policies defining what AI agents can and cannot do in production pipelines
- Human-in-the-loop checkpoints for high-risk changes, such as infrastructure modifications or security policy updates
- Measurement frameworks that track not just delivery speed but also defect rates, rollback frequency, and security findings post-deployment
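The first two practices above can be sketched as a small policy function. The action names, the `ChangeRequest` shape, and the `evaluate` function are assumptions made for illustration; they are not drawn from any specific agent framework or pipeline product.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    NEEDS_HUMAN = "needs_human_approval"
    DENY = "deny"


# Hypothetical policy: these action categories are illustrative, not a standard.
AGENT_ALLOWED = {"open_pull_request", "run_tests", "update_dependency"}
HIGH_RISK = {"modify_infrastructure", "change_security_policy"}


@dataclass(frozen=True)
class ChangeRequest:
    actor: str                       # "agent" or "human"
    action: str
    approved_by_human: bool = False


def evaluate(change: ChangeRequest) -> Decision:
    """Apply the agent policy to a single proposed change."""
    if change.actor != "agent":
        return Decision.ALLOW  # human changes follow the normal review path
    if change.action in HIGH_RISK:
        # High-risk changes pass only through an explicit human checkpoint.
        return Decision.ALLOW if change.approved_by_human else Decision.NEEDS_HUMAN
    if change.action in AGENT_ALLOWED:
        return Decision.ALLOW
    return Decision.DENY  # default-deny anything not explicitly listed
```

Default-deny is the important design choice here: an agent gaining a new capability should require a deliberate policy change, not the absence of a rule.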

The goal is to let AI accelerate routine work while keeping humans in charge of decisions that carry operational or security risk. If you're interested in practical guidance for embedding automation and governance safely into the pipeline, Tech-Driven DevOps: How Automation is Changing Deployment breaks down release automation and deployment patterns, along with strategies for integrating security guardrails.

This balance becomes especially critical because the API management market is expected to grow nearly fivefold by 2032, driven primarily by AI adoption. That growth means more integration points, more automated interactions, and more surface area to govern.

How AI Governance Becomes Essential in Regulated Environments

A healthcare SaaS provider introduced AI-assisted code review into its CI pipeline. While the tool caught common issues faster than manual review, the team discovered it occasionally approved code patterns that violated HIPAA data-handling requirements. They responded by adding a policy-as-code layer that automatically flagged healthcare-specific compliance concerns before any AI-approved change could merge. The result was faster reviews with stronger compliance coverage.

Governing AI-driven delivery is one challenge. But you can't govern what you can't see, which is why unified observability is the next critical layer.

Unified Observability: From Fragmented Dashboards to Connected Telemetry

Observability, the ability to understand what's happening inside your systems by examining their outputs, has long been a pillar of DevOps. But in practice, most organizations still operate with fragmented monitoring: one tool for logs, another for metrics, a third for traces, often from different vendors with different data models.

This fragmentation creates real problems. When an incident occurs, engineers waste time correlating data across tools instead of diagnosing the issue. As data volumes grow, costs spiral because there's no unified strategy for what telemetry to collect, sample, or discard.

The industry is responding with a clear move toward vendor-neutral, standards-based observability. OpenTelemetry, a CNCF project that provides a single set of APIs and libraries for collecting traces, metrics, and logs, has become the leading framework for this shift. CNCF research on observability trends confirms that modern cloud operations increasingly depend on unified, cost-aware telemetry rather than fragmented dashboards.

For comprehensive guidance on operational monitoring, cost optimization, and aligning your observability platform with performance and compliance objectives, see CI/CD Monitoring: Continuous Monitoring for Performance, Security, and Compliance.

Mature observability strategies share several characteristics:

- A single telemetry pipeline that collects traces, metrics, and logs in a consistent format across all services
- Cost controls built into the pipeline, such as intelligent sampling and tiered storage, so observability spending scales predictably
- Correlation capabilities that let engineers move from an alert to the relevant traces, logs, and deployment events without switching tools
- Ownership clarity, where platform teams manage the observability infrastructure and application teams define what matters for their services
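The cost controls mentioned above, sampling and tiered storage, can be sketched in a few lines. This is a simplified stand-in written in plain Python rather than OpenTelemetry's own sampler configuration; `keep_trace`, `storage_tier`, and the age thresholds are hypothetical. Note that keeping every error trace assumes the decision is made after the trace completes (tail-based sampling), since errors are not known when a trace starts.

```python
import hashlib


def keep_trace(trace_id: str, has_error: bool, sample_rate: float) -> bool:
    """Decide whether to retain a completed trace.

    Error traces are always kept. The rest are sampled deterministically,
    so every component makes the same decision for the same trace ID.
    """
    if not 0.0 <= sample_rate <= 1.0:
        raise ValueError("sample_rate must be between 0 and 1")
    if has_error:
        return True
    digest = hashlib.sha256(trace_id.encode("utf-8")).digest()
    # Map the first 8 bytes of the hash to a number in [0, 1).
    bucket = int.from_bytes(digest[:8], "big") / 2**64
    return bucket < sample_rate


def storage_tier(age_days: int) -> str:
    """Hypothetical tiering; thresholds are placeholders, not recommendations."""
    if age_days <= 7:
        return "hot"    # fast, expensive storage for active investigation
    if age_days <= 30:
        return "warm"   # cheaper storage for recent history
    return "cold"       # archival storage for compliance or trend analysis
```

Hashing the trace ID, rather than drawing a random number, keeps a trace either fully retained or fully dropped across services, which preserves the correlation that unified observability depends on.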

For organizations managing complex cloud environments, a managed IT services partner with deep expertise in infrastructure management and cloud operations can help design and maintain these observability systems, especially when internal teams are already stretched thin across multiple priorities.

DevSecOps as a Connected System, Not a Checkbox

DevSecOps, the practice of integrating security into every stage of the software delivery lifecycle, has been a goal for years. But too often it still looks like a security scanning tool inserted into a CI pipeline, generating alerts that developers ignore because they lack context or relevance.

The next evolution of DevSecOps treats security, infrastructure automation, policy enforcement, and software delivery as one system.

This means:

- Infrastructure-as-code definitions that include security policies by default, so a new cloud resource can't be provisioned without encryption, access controls, and logging
- Policy-as-code frameworks (such as Open Policy Agent) that evaluate every deployment against organizational security and compliance rules automatically
- Supply chain security controls, including software bill of materials (SBOM) generation and dependency scanning, integrated directly into the build process
- GitOps workflows where all infrastructure and policy changes flow through version-controlled repositories, creating a complete audit trail
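To show the shape of a policy-as-code check, the sketch below evaluates a resource definition against three rules before provisioning. It is a plain-Python stand-in for illustration only: Open Policy Agent policies are written in Rego, and the resource fields (`encrypted`, `logging_enabled`, `public_access`) and rule names are assumptions, not any cloud provider's schema.

```python
from typing import Callable, List, Optional

Resource = dict
# Each rule returns an error message, or None when the resource complies.
Rule = Callable[[Resource], Optional[str]]


def require_encryption(resource: Resource) -> Optional[str]:
    # A missing field counts as a violation, so resources are secure by default.
    return None if resource.get("encrypted", False) else "encryption must be enabled"


def require_logging(resource: Resource) -> Optional[str]:
    return None if resource.get("logging_enabled", False) else "logging must be enabled"


def forbid_public_access(resource: Resource) -> Optional[str]:
    return "public access is not allowed" if resource.get("public_access", False) else None


# Hypothetical organizational rule set, kept in version control with the code.
RULES: List[Rule] = [require_encryption, require_logging, forbid_public_access]


def violations(resource: Resource) -> List[str]:
    """Return every rule violation for a resource; an empty list means it may deploy."""
    results = [rule(resource) for rule in RULES]
    return [message for message in results if message is not None]


bucket = {"type": "object_store", "encrypted": True, "public_access": True}
problems = violations(bucket)  # logging is missing and public access is on
```

Because the rules live in a repository, a change to policy goes through the same review and audit trail as a change to infrastructure.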

For a thorough exploration of embedding DevSecOps and automating compliance at scale, see DevSecOps Explained: How to Build Security into Every Stage of Development.

IT teams already spend nearly a third of their time building custom integrations between systems. When security is one more disconnected layer, it adds to that integration burden. When it's woven into the platform, it becomes invisible in the best way: always present, never a bottleneck.

The data center and cloud infrastructure market continues to expand rapidly. The data center market alone is projected to exceed $400 billion by 2028, growing nearly 10% annually. As this infrastructure scales, so does the attack surface. Security that depends on manual review gates simply won't keep up.

What Organizations Usually Get Wrong

Even well-funded teams hit the same failure modes.

Too many bespoke pipelines. Every team builds its own CI/CD setup. Standardization never happens because no one owns it. The result is dozens of pipelines with dozens of different failure modes.

AI without guardrails. Teams that adopt AI tooling without policy-as-code controls in the control plane discover the consequences in production.

Observability without cost controls. Without intelligent sampling and tiered storage, telemetry governance breaks down and spending grows faster than the budget can absorb.

DevSecOps as scanners only. A scanning step that generates alerts developers ignore isn't DevSecOps. Security has to be embedded in the platform itself, not bolted onto the pipeline.

Platform teams as bottlenecks. When platform teams become approval gatekeepers instead of capability builders, they recreate the friction they were meant to eliminate.

Conclusion

The future of DevOps in cloud-first enterprises is operational, not theoretical. What's next for DevOps in cloud computing isn't a single technology or trend. It's a shift toward treating platforms, AI-assisted delivery, observability, and security as connected layers of one coherent system.

Organizations that continue to manage these as separate functions will face growing complexity, slower delivery, and higher costs. Those that build integrated cloud operating models, where platform engineering reduces cognitive load, AI accelerates routine work with proper oversight, observability provides unified visibility, and security is embedded by design, will be positioned to deliver software faster and more safely at scale.

To further explore the practical underpinnings and tech stacks powering this evolution, see What Does a DevOps Specialist Do? Roles, Skills, and Responsibilities Explained.

The next frontier isn't about doing more. It's about building the structure that makes everything work together.

For most enterprises, the starting point is sequenced: platform engineering first to reduce delivery fragmentation, then observability consolidation to unify visibility and control costs, then policy automation to embed security and compliance by default, and finally AI governance controls to ensure AI-assisted delivery accelerates work without introducing new operational risk.