Overview

The cloud ecosystem is not a single platform or product. It is a layered, interdependent environment where hyperscale providers, open-source frameworks, APIs, containers, and automation tools work in concert to support how modern software is built, deployed, and scaled. Each layer serves a distinct function. Hyperscalers like AWS, Azure, and GCP supply the foundational compute and managed services. APIs enable communication between systems and power the as-a-service model. Open-source technologies such as Kubernetes and Terraform provide portability and reduce vendor lock-in. Containers standardize how applications are packaged and deployed. And platform services, CI/CD pipelines, and observability tools make it all operable at enterprise scale.

But understanding what the ecosystem is made of is only half the picture. The harder question is why it becomes difficult to manage. Each additional layer increases operational complexity. Enterprises routinely struggle to make these components work together consistently, securely, and at scale. And without a deliberate operating model, the ecosystem becomes a source of friction rather than velocity. This is where platform engineering and managed infrastructure services play a decisive role: turning a collection of powerful tools into a coherent, governable system.

This article breaks down each of these sources, explains how they interact, and shows what it takes to turn a complex cloud environment into a resilient, high-performing operation.

Hyperscale Cloud Providers Form the Foundation

Any conversation about the cloud ecosystem starts with the hyperscale providers: Amazon Web Services (AWS), Microsoft Azure, and Google Cloud Platform (GCP). These companies operate the massive global data center networks that supply the raw compute, storage, and networking resources most organizations depend on. But their role goes well beyond hosting. They offer hundreds of managed services, from databases and machine learning platforms to identity management and edge computing, that form the baseline infrastructure for nearly every modern application.

The physical scale behind these providers is staggering. In the United States alone, about 70% of data center growth is expected to be fulfilled directly or indirectly by hyperscalers by 2030, with many new facilities self-built or delivered through build-to-suit colocation arrangements. Providing the additional capacity the market needs will require more than $500 billion in data center infrastructure investment in the US by the end of the decade.

What makes hyperscalers a true ecosystem source, rather than simply large vendors, is the breadth of services and partnerships they enable. Each provider maintains a marketplace of third-party integrations, partner networks, and certification programs that extend their reach well beyond their own tooling.

- AWS, Azure, and GCP collectively serve as the compute backbone for most enterprise cloud strategies.
- Each provider offers managed services spanning databases, AI, analytics, security, and networking.
- Their global data center footprints enable regional compliance and low-latency performance.

APIs Enable Interoperability Across Services

Application Programming Interfaces, or APIs, are the connective channels that let different software systems exchange data and trigger actions across boundaries. In the cloud ecosystem, APIs are what make interoperability possible. Without them, every service would be an island, unable to share information or coordinate workflows.

Modern cloud architectures are fundamentally API-driven. When a mobile app retrieves user data from a cloud database, when a payment service confirms a transaction, or when a monitoring tool pulls metrics from a container cluster, an API call is what makes it happen. APIs also power the "as-a-service" model itself: Infrastructure as a Service (IaaS), Platform as a Service (PaaS), and Software as a Service (SaaS) all expose their capabilities through APIs so that developers and automation tools can interact with them programmatically.

- REST and GraphQL are the most common API styles used in cloud-native applications.
- API gateways manage traffic, enforce security policies, and handle rate limiting across services.
- Well-designed APIs reduce integration friction and accelerate time to deployment.

The strategic importance of APIs is hard to overstate. Organizations that design their cloud systems around clean, well-documented APIs gain the flexibility to swap services, integrate with partners, and scale individual components without rearchitecting the entire stack.
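To make the rate-limiting role of an API gateway more concrete, here is a minimal sketch of a token bucket, one common way to cap request rates while still allowing short bursts. The class name `TokenBucket`, its parameters, and the explicit `now` argument are assumptions made for illustration; real gateways apply limits per client or per route and expose them through their own configuration rather than code like this.

```python
import time
from typing import Optional


class TokenBucket:
    """Illustrative rate limiter: allows bursts up to `capacity` requests,
    then refills at `refill_per_second` tokens per second."""

    def __init__(self, capacity: int, refill_per_second: float,
                 now: Optional[float] = None) -> None:
        self.capacity = capacity
        self.refill_per_second = refill_per_second
        self.tokens = float(capacity)  # start with a full bucket
        self.last = time.monotonic() if now is None else now

    def allow(self, now: Optional[float] = None) -> bool:
        """Return True if a request may pass at time `now` (seconds)."""
        now = time.monotonic() if now is None else now
        elapsed = max(0.0, now - self.last)
        # Refill in proportion to elapsed time, never above capacity.
        self.tokens = min(self.capacity,
                          self.tokens + elapsed * self.refill_per_second)
        self.last = now
        if self.tokens >= 1.0:
            self.tokens -= 1.0
            return True
        return False


# Explicit timestamps keep the demo deterministic.
bucket = TokenBucket(capacity=2, refill_per_second=1.0, now=0.0)
print([bucket.allow(now=0.0) for _ in range(3)])  # [True, True, False]
print(bucket.allow(now=1.0))                      # True, one token refilled
```

Passing the clock in explicitly is a testing convenience in this sketch; in production code the limiter would normally read the time itself.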

How Stripe Uses APIs to Power Cross-Cloud Integration

Stripe, the payment platform, built its entire business around a set of APIs that developers can embed into any application. Companies running on AWS, Azure, or GCP can all integrate Stripe's payment processing without worrying about the underlying cloud provider, because the API layer abstracts the complexity. This is a clear example of how APIs enable ecosystem-wide interoperability.

To understand how organizations can use public APIs to unlock new revenue and accelerate integration, explore Top Cloud Sources Every Business Should Know.

APIs make services talk to each other. But the tools and frameworks those services are built on often come from a very different source: the open-source community.

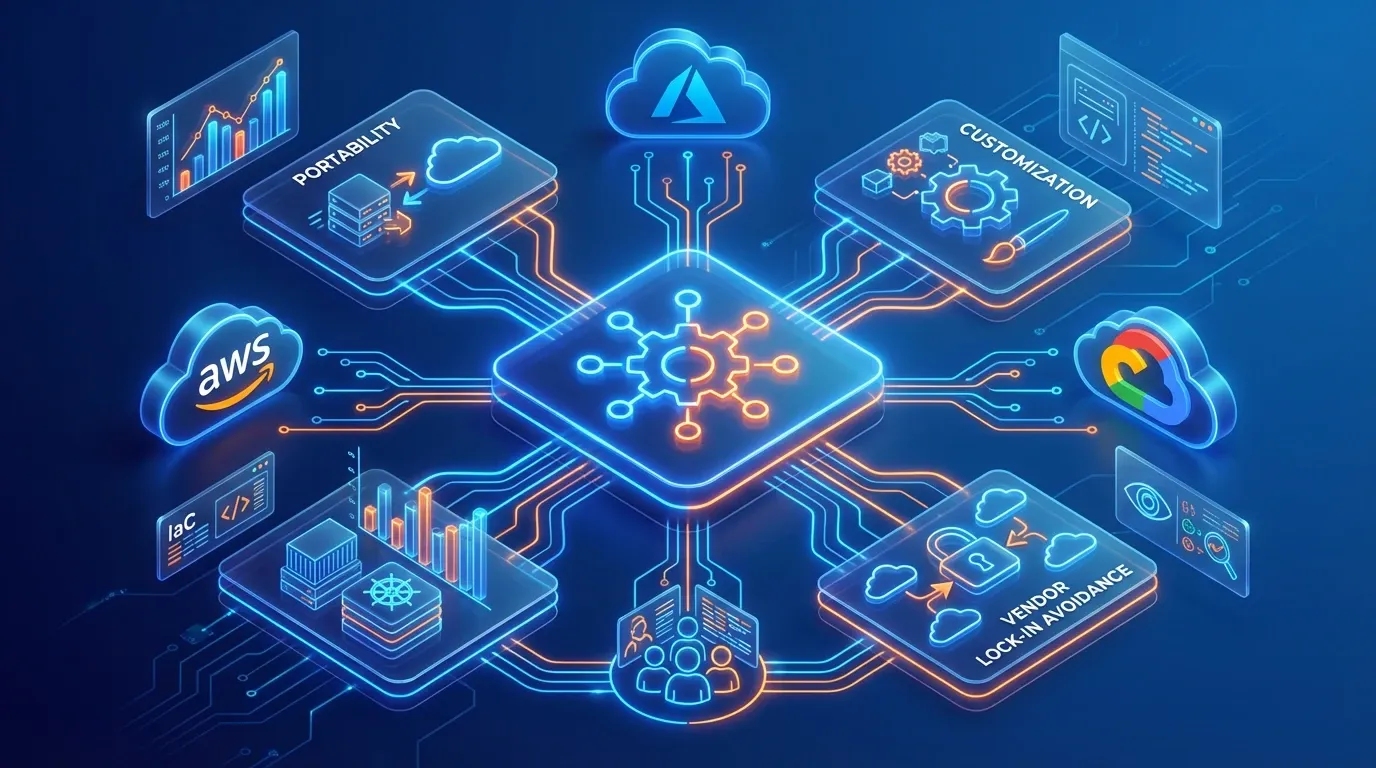

Open-Source Frameworks Power Portability and Customization

Open-source software is one of the most underappreciated sources powering the cloud ecosystem. Technologies like Linux, Kubernetes, Terraform, Prometheus, and PostgreSQL are not peripheral tools. They are foundational building blocks that run inside, alongside, and sometimes underneath the services offered by the largest cloud providers.

Open-source frameworks give organizations portability, meaning the ability to move workloads between cloud providers or run them on-premises without being locked into a single vendor's proprietary tooling. They also enable customization. Because the source code is open, engineering teams can adapt tools to fit their specific requirements rather than conforming to rigid vendor specifications.

- Kubernetes has become the industry standard for container orchestration across all major clouds.
- Terraform enables infrastructure as code, letting teams define and provision cloud resources in a repeatable, version-controlled way.
- Prometheus and Grafana are widely used for monitoring and observability in cloud-native environments.
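The idea behind infrastructure as code can be sketched in a few lines: describe the desired state as data, compare it with what actually exists, and derive the actions needed to close the gap. The example below is a simplified illustration of that declarative pattern, not Terraform's implementation; the `plan` function and the resource names are hypothetical.

```python
from typing import Any, Dict, List, Tuple

Resource = Dict[str, Any]


def plan(desired: Dict[str, Resource],
         actual: Dict[str, Resource]) -> List[Tuple[str, str]]:
    """Compare desired and actual state and list the actions required."""
    actions: List[Tuple[str, str]] = []
    for name in sorted(desired.keys() | actual.keys()):
        if name not in actual:
            actions.append(("create", name))
        elif name not in desired:
            actions.append(("delete", name))
        elif desired[name] != actual[name]:
            actions.append(("update", name))
    return actions


# Hypothetical resources: the desired state would live in version control.
desired = {"web": {"size": "small", "count": 3}, "db": {"size": "large"}}
actual = {"web": {"size": "small", "count": 2}, "cache": {"size": "small"}}

print(plan(desired, actual))
# [('delete', 'cache'), ('create', 'db'), ('update', 'web')]
print(plan(desired, desired))  # [] because nothing needs to change
```

Because the plan is computed from state rather than from a sequence of manual steps, running it again after the changes are applied yields no further actions, which is what makes the approach repeatable.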

The relationship between open source and commercial cloud is symbiotic. Cloud providers contribute to open-source projects, offer managed versions of popular tools, and build proprietary services on top of open-source foundations. This creates a cycle where community innovation feeds commercial offerings, and commercial investment funds further development.

How Spotify Migrated to Google Cloud Without Vendor Lock-In

When Spotify migrated its infrastructure to Google Cloud, it relied heavily on Kubernetes to orchestrate its containerized microservices. Because Kubernetes is open-source and cloud-agnostic, Spotify retained the flexibility to customize its deployment pipelines and avoid deep lock-in to GCP-specific orchestration tooling.

To see how multi-cloud strategies rely on open frameworks and API-driven infrastructure management, consider Multi-Cloud Strategy: Building a Winning Cloud Strategy for 2026 and Beyond.

The mention of containers and orchestration brings us to the next essential layer of the cloud ecosystem, one that has fundamentally changed how software is packaged and deployed.

Container and Orchestration Technologies Standardize Deployment

Containers, most commonly built with Docker, package an application along with all its dependencies into a single portable unit. This means a containerized application runs the same way on a developer's laptop, in a test environment, and in production, regardless of the underlying infrastructure. Container orchestration platforms like Kubernetes then manage the deployment, scaling, and networking of those containers across clusters of machines.

This combination has become the operational standard for cloud-native development. It eliminates the "it works on my machine" problem and gives teams consistent, repeatable deployment workflows.

- Docker containers provide lightweight, isolated runtime environments for applications.
- Kubernetes automates scaling, load balancing, and self-healing for containerized workloads.
- Service meshes like Istio add fine-grained traffic control and security between containers.

The compute demands behind container-based and AI-driven workloads are also reshaping physical infrastructure. Individual racks powering AI computing workloads now require as much as 140 kilowatts, compared to just 2 to 4 kilowatts for legacy cloud data center racks from 15 to 20 years ago, with next-generation racks expected to reach 600 kilowatts and eventually a megawatt.

How Airbnb Uses Kubernetes to Scale Millions of Users

Airbnb uses Kubernetes to manage thousands of microservices across its platform. When demand spikes during holiday seasons, Kubernetes automatically scales the relevant services up, then scales them back down when traffic normalizes. This elasticity keeps costs predictable while maintaining reliability for millions of users.
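The scale-up and scale-down behavior described above can be sketched with the core formula documented for the Kubernetes Horizontal Pod Autoscaler: desired replicas = ceil(current replicas × current metric / target metric). The function below is a simplified illustration, not a description of any particular company's configuration; the replica bounds are assumed values, and the real autoscaler also handles stabilization windows, pod readiness, and multiple metrics.

```python
import math


def desired_replicas(current_replicas: int,
                     current_metric: float,
                     target_metric: float,
                     min_replicas: int = 1,      # assumed bound for this sketch
                     max_replicas: int = 10,     # assumed bound for this sketch
                     tolerance: float = 0.1) -> int:
    """Simplified version of the documented HPA scaling formula."""
    if current_replicas <= 0 or target_metric <= 0:
        raise ValueError("replicas and target metric must be positive")
    ratio = current_metric / target_metric
    # Skip scaling when the metric is already close enough to the target.
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas
    wanted = math.ceil(current_replicas * current_metric / target_metric)
    return max(min_replicas, min(max_replicas, wanted))


# Average CPU at 90% against a 60% target: scale 4 pods up to 6.
print(desired_replicas(4, current_metric=90, target_metric=60))  # 6
# Traffic normalizes to 30% against the same target: scale 8 pods down to 4.
print(desired_replicas(8, current_metric=30, target_metric=60))  # 4
```

The tolerance check is what prevents constant small adjustments when load hovers near the target.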

For a practical guide to deploying, managing, and optimizing containerized workloads in the enterprise, see Containerization and Orchestration Tools for Simplifying Modern Application Deployment.

Containers and orchestration handle how applications run. But the broader ecosystem also depends on platform services and automation frameworks that simplify operations at scale.

Platform Services, Automation, and Observability Complete the Picture

Platform as a Service (PaaS) offerings, CI/CD (Continuous Integration and Continuous Delivery) pipelines, infrastructure automation tools, and observability platforms round out the cloud ecosystem by making it manageable. Without these layers, operating distributed cloud environments at enterprise scale would be unsustainable.

PaaS solutions like AWS Elastic Beanstalk, Azure App Service, and Google App Engine let developers deploy applications without managing the underlying servers. CI/CD pipelines automate testing and deployment, reducing human error and accelerating release cycles. Observability tools, which combine logging, metrics, and distributed tracing, give teams real-time insight into system health and performance.

- CI/CD platforms like GitHub Actions and GitLab CI automate the software delivery lifecycle.
- Infrastructure automation through tools like Terraform and Ansible ensures environments are consistent and auditable.
- Observability platforms like Datadog and Grafana provide visibility across complex distributed systems.
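As a rough illustration of how a CI/CD pipeline reduces human error, the sketch below runs stages in order and stops promoting a change as soon as one stage fails. The `run_pipeline` function and the stage names are hypothetical; platforms such as GitHub Actions and GitLab CI express the same idea in their own configuration formats.

```python
from typing import Callable, List, Tuple

Stage = Tuple[str, Callable[[], bool]]


def run_pipeline(stages: List[Stage]) -> List[Tuple[str, str]]:
    """Run stages in order; once one fails, mark the rest as skipped."""
    results: List[Tuple[str, str]] = []
    failed = False
    for name, step in stages:
        if failed:
            results.append((name, "skipped"))
            continue
        try:
            ok = step()
        except Exception:
            # A crashing step counts as a failure rather than aborting the run.
            ok = False
        results.append((name, "passed" if ok else "failed"))
        failed = not ok
    return results


# Hypothetical stages: a failing test run prevents the deploy stage.
stages: List[Stage] = [
    ("build", lambda: True),
    ("test", lambda: False),
    ("deploy", lambda: True),
]
print(run_pipeline(stages))
# [('build', 'passed'), ('test', 'failed'), ('deploy', 'skipped')]
```

The returned list doubles as a simple audit trail, which is the kind of record an observability platform would collect from a real pipeline.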

The energy infrastructure supporting all of this is also evolving rapidly. US data center power needs are expected to rise from 3-4% of total US power demand today to 11-12% in 2030. The Electric Reliability Council of Texas (ERCOT) reported a 300% year-over-year increase in interconnection requests in 2025, reflecting the massive expansion driven by AI and cloud computing. Meeting these demands at scale is expected to rely on solutions such as gas-fired plants fitted with carbon capture technology, which are among the best-placed options through 2030.

For organizations navigating this complexity, managed IT service providers can play an important role. Providers that offer comprehensive infrastructure management, cloud computing support, cybersecurity, and technology optimization help businesses implement scalable solutions, maintain security, and bring operational discipline to cloud environments that might otherwise become unwieldy.

For practical steps on building automated, observable, and secure CI/CD pipelines at scale, check out CI/CD Monitoring: Continuous Monitoring for Performance, Security, and Compliance.

What a Mature Cloud Operating Model Looks Like

Turning the Cloud Ecosystem Into an Operating Model

When the individual layers of the cloud ecosystem work in isolation, complexity accumulates faster than value. A mature cloud operating model is what turns that complexity into a controlled, scalable system. It is built on a consistent set of operational principles: standardized deployments, governed APIs, reusable IaC modules, centralized observability, secure cloud operations, and predictable costs tied to business outcomes.
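One way to picture principles such as standardized deployments and cost accountability is as automated checks that run before any resource is provisioned. The sketch below is a minimal, hypothetical policy check; the required tags, the allowed regions, and the `violations` function are assumptions chosen for illustration, not rules from any specific governance tool.

```python
from typing import Any, Dict, List

# Hypothetical policy values; a real organization would define its own.
REQUIRED_TAGS = {"owner", "cost-center"}
ALLOWED_REGIONS = {"eu-west-1", "us-east-1"}


def violations(resource: Dict[str, Any]) -> List[str]:
    """Return the policy problems found in one resource definition."""
    problems: List[str] = []
    missing = sorted(REQUIRED_TAGS - set(resource.get("tags", {})))
    if missing:
        problems.append("missing tags: " + ", ".join(missing))
    region = resource.get("region")
    if region not in ALLOWED_REGIONS:
        problems.append(f"region not allowed: {region}")
    if resource.get("public", False):
        problems.append("public exposure not allowed")
    return problems


compliant = {"name": "api", "region": "eu-west-1",
             "tags": {"owner": "team-a", "cost-center": "42"}}
risky = {"name": "tmp", "region": "ap-south-1",
         "tags": {"owner": "team-b"}, "public": True}

print(violations(compliant))  # []
print(violations(risky))
```

Checks like these are typically wired into the delivery pipeline, so that a resource with violations never reaches production in the first place.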

How Leading Organizations Operationalize the Cloud Ecosystem

Leading organizations do not treat the cloud ecosystem as a collection of tools. They treat it as an operating model: one that is governed, automated, and continuously optimized to deliver business value at scale.

What Truly Powers the Cloud Ecosystem

The cloud ecosystem is the interconnected network of hyperscale cloud providers, APIs, open-source frameworks, container and orchestration technologies, platform services, automation tools, and observability layers that together enable modern organizations to build, deploy, scale, and manage digital applications and infrastructure. Its strength comes not from any single source but from how these components reinforce one another to deliver scalability, integration, flexibility, and continuous innovation.

Organizations that succeed in this environment do so by understanding these interdependencies, investing in clear architecture and governance, and building the operational discipline needed to turn ecosystem complexity into lasting business value.

Conclusion

The cloud ecosystem is not a technology problem. It is an architectural discipline. Hyperscale platforms, APIs, open-source frameworks, containers, and automation tools do not deliver value on their own. They deliver value when they are understood, connected, and governed with intention. Organizations that treat the cloud as a collection of services will always be reacting. Those that understand how the layers interact will build systems that scale, adapt, and hold under pressure.

The ecosystem will keep growing in both capability and complexity. The question is not whether your organization uses the cloud. It is whether you understand what is actually powering it, and whether that understanding is reflected in how you build, operate, and evolve your infrastructure.