Overview

CI/CD pipeline automation is no longer an engineering preference. It is an operational requirement. This article is written for engineering leaders, DevOps teams, and CTOs who manage software delivery at scale and need to understand what drives that shift, where adoption breaks down, and what high-performing teams do differently.

Manual Pipelines Create Hidden Bottlenecks

Before making the case for automation, it helps to understand what happens without it. Manual or semi-manual delivery processes introduce friction at every stage of the software delivery lifecycle. A developer finishes a feature, then waits for someone to trigger a build. A tester runs validation scripts by hand. A release engineer copies configuration files across environments, hoping nothing drifts between staging and production.

These steps might feel manageable when a team ships once a month. They become unsustainable when the business expects weekly or daily releases.

The problems compound quickly:

- Human error increases with every manual handoff, especially under time pressure.
- Release schedules become unpredictable because each deployment depends on individual availability.
- Configuration drift, where environments slowly diverge from each other, goes undetected until something breaks in production.
- Engineering teams spend hours on repetitive tasks instead of building features that matter.

The cost is not just slower releases. It is lost confidence. When deployments feel risky, teams deploy less often. Feedback loops lengthen. Bugs hide longer. DORA research consistently shows that elite teams deploy on demand, multiple times per day, while low performers ship between once per month and once every six months. Manual processes are a primary reason teams stay stuck in that lower tier.

For a detailed look at how organizations that automate move beyond these bottlenecks, balancing speed with quality and reducing the costs and risks of manual processes, see CI/CD Automation: How CI/CD Pipeline Automation Powers Modern Software Delivery.

When Manual Release Processes Become the Bottleneck

Consider a mid-size SaaS company that deploys a monolithic application through a series of shell scripts maintained by two senior engineers. When one goes on vacation, releases stall. When both are available, a single deployment still takes a full afternoon. The bottleneck is not the code. It is the process around the code.

This pattern is exactly what CI/CD pipeline automation is designed to eliminate.

Faster Cycles and Earlier Issue Detection

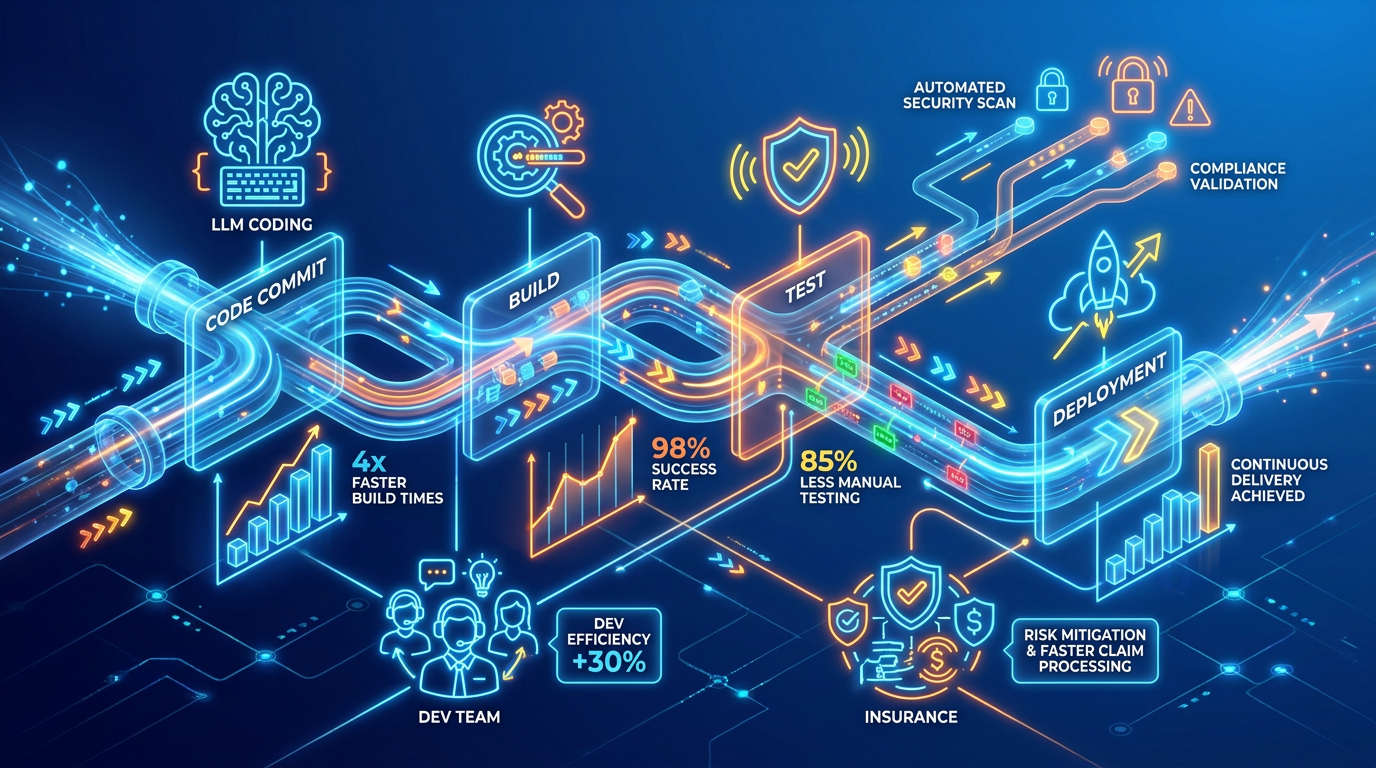

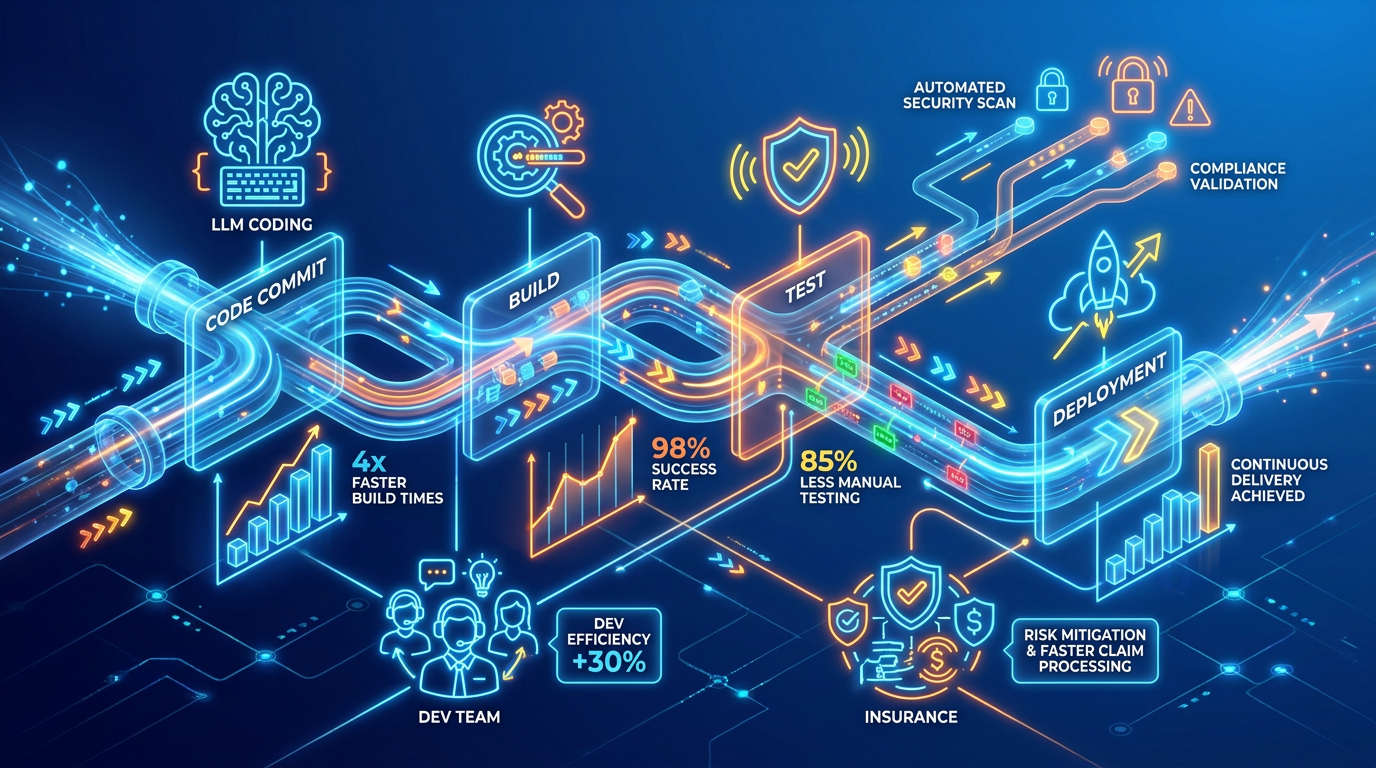

Automating the build, test, and deployment stages of a CI/CD pipeline does more than save time. It changes how quickly teams learn whether their code works. When every commit triggers an automated build and a suite of tests runs without human intervention, problems surface in minutes instead of days.
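The fail-fast behavior described above can be sketched in a few lines. This is only an illustration, not how any particular CI system is implemented: `run_pipeline`, `StageResult`, and the three stage functions are hypothetical names, and the stages are stand-ins for real build, test, and deploy commands.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class StageResult:
    name: str
    passed: bool


def run_pipeline(stages: list[tuple[str, Callable[[], bool]]]) -> list[StageResult]:
    """Run stages in order and stop at the first failure."""
    results = []
    for name, step in stages:
        passed = bool(step())
        results.append(StageResult(name, passed))
        if not passed:
            break  # later stages never run for a change that failed earlier
    return results


# Hypothetical stand-ins for real build, test, and deploy commands.
def build() -> bool:
    return True


def unit_tests() -> bool:
    return False  # simulate a failing test suite


def deploy() -> bool:
    return True


results = run_pipeline([("build", build), ("test", unit_tests), ("deploy", deploy)])
for result in results:
    print(f"{result.name}: {'ok' if result.passed else 'FAILED'}")
```

Because the runner stops at the failing test stage, the deploy stage never executes, and the developer sees the failure as soon as the test stage finishes.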

Continuous integration provides early visibility into bugs by catching issues the moment code is merged, not weeks later during a manual QA cycle. This early detection means fixes are smaller, faster, and far less expensive.

Automated testing is a major driver of this speed. Tests that once took hours or days to run manually now run in minutes, giving developers near-instant feedback on whether a change is safe to ship. Teams can measure this through metrics like test coverage percentage, execution time reduction, defect detection rate, and ROI based on time and cost savings.
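As a rough sketch, three of these metrics reduce to simple arithmetic. The formulas below are common working definitions rather than a standard, and every function name and input figure is an assumption chosen for illustration (the 240-minute and 15-minute values mirror the cycle times discussed in this article).

```python
def pct_reduction(before_minutes: float, after_minutes: float) -> float:
    """Execution time reduction, as a percentage of the original time."""
    return 100 * (before_minutes - after_minutes) / before_minutes


def defect_detection_rate(caught_before_release: int, escaped_to_production: int) -> float:
    """Share of all known defects that were caught before release."""
    total = caught_before_release + escaped_to_production
    return 100 * caught_before_release / total if total else 0.0


def automation_roi(hours_saved: float, hourly_cost: float, automation_cost: float) -> float:
    """Net savings as a percentage of what the automation cost to build and run."""
    savings = hours_saved * hourly_cost
    return 100 * (savings - automation_cost) / automation_cost


# Hypothetical inputs: a 4-hour manual cycle cut to 15 minutes, 45 defects
# caught in CI versus 5 found in production, and 500 engineer-hours saved.
print(f"time reduction: {pct_reduction(240, 15):.2f}%")
print(f"defect detection rate: {defect_detection_rate(45, 5):.1f}%")
print(f"ROI: {automation_roi(500, 80, 20_000):.1f}%")
```

Whatever definitions a team chooses, the important part is to fix them once and compute them the same way every quarter so the trend is comparable.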

The practical impact is measurable across four dimensions: delivery speed, defect cost, deployment stability, and recovery time.

- Build-test-deploy cycles that previously took four to six hours can drop to under fifteen minutes.
- Defects caught in CI cost roughly one-tenth as much to fix as defects found in production, according to a widely cited figure attributed to the IBM Systems Sciences Institute.
- Change failure rate, the percentage of deployments that cause a production incident, drops below 15% for DORA elite performers. Manual pipelines typically run much higher.

When these cycles tighten, release confidence goes up. Teams that trust their pipeline deploy more often, and more frequent deployments reduce risk because each change is smaller and easier to roll back. Track rollback rate as a leading indicator: if you are rolling back more than 5% of deployments, your pipeline validation has gaps.
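Change failure rate and rollback rate are both simple ratios over a deployment log. The sketch below assumes a hypothetical `Deployment` record with two boolean flags; a real team would populate it from its deployment and incident tooling. The 15% and 5% thresholds are the ones quoted above.

```python
from dataclasses import dataclass
from typing import Callable

CHANGE_FAILURE_LIMIT_PCT = 15.0  # DORA elite threshold cited above
ROLLBACK_LIMIT_PCT = 5.0         # rollback-rate warning level cited above


@dataclass(frozen=True)
class Deployment:
    id: str
    caused_incident: bool
    rolled_back: bool


def rate(deployments: list[Deployment], predicate: Callable[[Deployment], bool]) -> float:
    """Percentage of deployments for which the predicate is true."""
    if not deployments:
        return 0.0
    hits = sum(1 for d in deployments if predicate(d))
    return 100 * hits / len(deployments)


def pipeline_health(deployments: list[Deployment]) -> dict:
    cfr = rate(deployments, lambda d: d.caused_incident)
    rollbacks = rate(deployments, lambda d: d.rolled_back)
    return {
        "change_failure_rate": cfr,
        "rollback_rate": rollbacks,
        "change_failure_ok": cfr < CHANGE_FAILURE_LIMIT_PCT,
        "rollback_ok": rollbacks <= ROLLBACK_LIMIT_PCT,
    }


# Hypothetical log: 40 deployments, 3 of which caused an incident and were rolled back.
log = [Deployment(f"d{i}", caused_incident=i < 3, rolled_back=i < 3) for i in range(40)]
print(pipeline_health(log))
```

In this made-up log the change failure rate is within the elite range, but the rollback rate is above 5%, which is the signal to look for gaps in pipeline validation.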

This is also where AI-generated code raises new stakes. With developers increasingly using LLM-assisted coding tools, the volume of code entering pipelines is growing faster than teams can manually review it. Automated scanning for known vulnerabilities, license compliance, and SBOM (Software Bill of Materials) generation becomes a pipeline requirement, not an optional stage. If your CI pipeline does not automatically validate what is in a build and where it came from, you are accepting supply chain risk by default.

For practical advice on using automated testing to cut development time and costs, see The Managed DevOps Cheat Sheet: how to cut App Development Time and Costs by 80%.

How Continuous Delivery Automation Reduces Release Costs and Time

Hiscox Insurance offers a concrete illustration. Through continuous delivery automation, the company reduced time per release by 89%, decreased staff time to release by 75%, and cut the financial cost of a release by 97%. Those numbers represent a fundamentally different operating model for software delivery.

Consistency, Governance, and Guardrails at Scale

One of the most valuable but frequently overlooked benefits of CI/CD automation is that it enforces repeatable, auditable workflows across every release. The same pipeline runs the same steps, in the same order, every time. No shortcuts. No forgotten steps.

This consistency matters enormously for teams managing cloud-native applications across multiple environments. Automated pipelines improve agility by enabling organizations to respond quickly to changes while delivering work through standardized, repeatable processes. That repeatability also strengthens deployment governance, giving leaders a clear audit trail of what was deployed, when, and by whom.

Modern tooling goes further. Declarative tools such as Terraform for infrastructure and ArgoCD and FluxCD for GitOps-style continuous delivery enable versioning, traceability, and rapid, reproducible deployments across hybrid environments, keeping infrastructure and application configurations in sync regardless of where workloads run. The strongest teams also codify their deployment policies directly into pipeline definitions using policy-as-code frameworks like Open Policy Agent (OPA) or Kyverno. A pipeline can then block a deployment that lacks a valid SBOM, uses an unscanned container image, or violates environment-specific resource constraints, all without a human gatekeeper.
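To make the idea of a policy gate concrete, here is a minimal sketch in Python. It is not OPA or Kyverno syntax (OPA policies are written in Rego, and Kyverno policies are Kubernetes resources); it only illustrates the decision logic. `DeployRequest`, `CPU_LIMITS`, the registry URL, and the limit values are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical per-environment CPU ceilings, in millicores.
CPU_LIMITS = {"staging": 2000, "production": 4000}


@dataclass(frozen=True)
class DeployRequest:
    image: str
    environment: str
    has_valid_sbom: bool
    image_scanned: bool
    cpu_millicores: int


def policy_violations(request: DeployRequest) -> list[str]:
    """Return every rule the request breaks; an empty list means it may proceed."""
    violations = []
    if not request.has_valid_sbom:
        violations.append("missing a valid SBOM")
    if not request.image_scanned:
        violations.append("container image has not been scanned")
    limit = CPU_LIMITS.get(request.environment)
    if limit is None:
        violations.append(f"unknown environment '{request.environment}'")
    elif request.cpu_millicores > limit:
        violations.append(
            f"CPU request {request.cpu_millicores}m exceeds "
            f"{request.environment} limit of {limit}m"
        )
    return violations


request = DeployRequest(
    image="registry.example.com/app:1.4.2",  # placeholder image reference
    environment="production",
    has_valid_sbom=True,
    image_scanned=False,
    cpu_millicores=6000,
)
for violation in policy_violations(request):
    print(f"BLOCKED {request.image}: {violation}")
```

Returning the full list of violations, rather than stopping at the first one, lets a developer fix everything in a single pass instead of rediscovering failures one deployment attempt at a time.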

Automated guardrails matter as much as automated deployments. Drift detection that continuously compares running infrastructure against its declared state, rollback triggers that fire when error rates spike post-deploy, and canary analysis that evaluates traffic against baseline metrics before promoting a release: these are what separate a fast pipeline from a safe one. Measure drift rate weekly. If more than 2-3% of your resources have drifted from their declared state, your automation is deploying but not enforcing.
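Two of these guardrails can be sketched as small functions. The example below is an assumption-laden illustration: it models declared and running state as plain dictionaries keyed by resource name, treats 3% (the upper end of the range above) as the drift limit, and uses a hypothetical `tolerance` multiplier for the post-deploy error-rate check. Real drift detection would read from the infrastructure tool's state and the live environment.

```python
DRIFT_LIMIT_PCT = 3.0  # upper end of the 2-3% range mentioned above
_MISSING = object()    # marks a declared resource that is not running at all


def drifted(declared: dict, running: dict) -> list[str]:
    """Names of declared resources whose running config differs or is absent."""
    return sorted(
        name
        for name, config in declared.items()
        if running.get(name, _MISSING) != config
    )


def drift_rate(declared: dict, running: dict) -> float:
    """Percentage of declared resources that have drifted."""
    if not declared:
        return 0.0
    return 100 * len(drifted(declared, running)) / len(declared)


def should_roll_back(baseline_error_rate: float, post_deploy_error_rate: float,
                     tolerance: float = 2.0) -> bool:
    """Trigger a rollback when errors exceed the baseline by the given factor.

    The default factor of 2.0 is an arbitrary illustrative value.
    """
    return post_deploy_error_rate > baseline_error_rate * tolerance


# Hypothetical state: 40 declared services, one scaled by hand, one deleted.
declared = {f"svc-{i}": {"replicas": 2} for i in range(40)}
running = {name: dict(config) for name, config in declared.items()}
running["svc-3"]["replicas"] = 5
del running["svc-7"]

rate = drift_rate(declared, running)
print(f"drifted: {drifted(declared, running)}")
print(f"drift rate: {rate:.1f}% (limit {DRIFT_LIMIT_PCT}%)")
print(f"roll back: {should_roll_back(0.01, 0.05)}")
```

This version only checks resources that were declared. Resources running without any declaration are a separate kind of drift and would need their own check.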

For organizations working with managed IT service providers like ABS, which specializes in infrastructure management, cloud computing, and business technology solutions, this kind of structured automation aligns naturally with broader cloud operating models built for reliability and scale. See how Managed IT Services can support scalable delivery, offloading operational burdens while keeping teams focused on feature delivery.

How Declarative Pipelines Eliminate Configuration Drift

A financial services firm operating across AWS and on-premises data centers adopted declarative pipelines to manage deployments in both environments from a single source of truth. Configuration drift dropped to near zero, measured by weekly drift audits against their Terraform state files. Their compliance team could trace every production change back to a specific code commit. Automation did not just speed things up. It made the entire delivery process auditable.

But automation introduces its own problems. The next question is what actually goes wrong when teams adopt it.

What Goes Wrong: The Real Friction of Adopting Pipeline Automation

Pipeline automation is a foundational requirement for high-performing technology teams. But it is not the right starting point for every organization. Teams with fragile legacy monoliths, highly manual approval workflows, or broken release processes will not fix those problems by adding automation on top. The pipeline will inherit the dysfunction. For teams ready to move forward, the friction usually comes from three directions.

- Ownership shifts, and nobody plans for it. In a manual environment, release engineers or operations teams typically control deployments. In an automated environment, the pipeline itself becomes code that requires long-term ownership and maintenance. Too often, nobody owns it. Pipeline YAML files accumulate across repositories without clear maintainers, gradually becoming outdated and unreliable. The most common failure mode is not a broken pipeline. It is an abandoned one.

  Platform engineering teams are increasingly solving this problem by building internal developer platforms with standardized “golden path” templates that development teams consume instead of building pipelines from scratch. Learn more in The Next Frontier: DevOps for Cloud-First Enterprises.

- Test suites become the new bottleneck. A CI/CD pipeline only moves as fast as its slowest validation stage. Teams that attach legacy testing frameworks to modern CI/CD environments often create pipelines that take 45 minutes or longer to validate a single change. Developers eventually stop waiting for feedback and begin bypassing process controls. Strong testing discipline, including optimized unit, integration, and contract testing, becomes essential for keeping delivery cycles efficient.

  Teams should actively monitor pipeline execution time. If it takes longer than 15 minutes to move from commit to validated build, developers will usually start creating workarounds outside the intended process.

- Monitoring gaps create false confidence. A successful deployment workflow does not guarantee a healthy production environment. Without observability into live systems, organizations simply replace one form of operational blindness with another. Mature CI/CD environments integrate monitoring of live systems into the delivery process.

  High-performing teams also track MTTR (Mean Time to Recovery) as a primary operational metric. DORA elite performers recover from incidents in under one hour. If recovery takes days, the deployment process likely ends too early and lacks sufficient operational visibility.

  Building stronger observability into the deployment lifecycle is explored further in CI/CD Monitoring: Continuous Monitoring for Performance, Security, and Compliance.
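The two numeric thresholds in the list above, a 15-minute commit-to-validated-build budget and a one-hour recovery target, are easy to check once timestamps are collected. The sketch below assumes each pipeline run and each incident is recorded as a hypothetical `(start, end)` pair; the timestamps are invented for illustration.

```python
from datetime import datetime, timedelta
from statistics import mean

PIPELINE_BUDGET = timedelta(minutes=15)  # commit-to-validated-build limit from the text
MTTR_TARGET = timedelta(hours=1)         # DORA elite recovery time from the text

Interval = tuple[datetime, datetime]  # (start, end)


def over_budget(runs: list[Interval]) -> list[timedelta]:
    """Durations of the pipeline runs that exceeded the budget."""
    durations = [end - start for start, end in runs]
    return [d for d in durations if d > PIPELINE_BUDGET]


def mean_time_to_recovery(incidents: list[Interval]) -> timedelta:
    """Average time from detection to resolution."""
    if not incidents:
        raise ValueError("no incidents recorded")
    seconds = [(resolved - detected).total_seconds() for detected, resolved in incidents]
    return timedelta(seconds=mean(seconds))


day = datetime(2024, 1, 15)  # arbitrary example date
runs = [
    (day.replace(hour=10), day.replace(hour=10, minute=12)),  # 12 minutes
    (day.replace(hour=11), day.replace(hour=11, minute=47)),  # 47 minutes
]
incidents = [
    (day.replace(hour=14), day.replace(hour=14, minute=20)),  # 20 minutes
    (day.replace(hour=16), day.replace(hour=17)),             # 60 minutes
]

print("runs over budget:", [str(d) for d in over_budget(runs)])
mttr = mean_time_to_recovery(incidents)
print("MTTR:", mttr, "| within target:", mttr < MTTR_TARGET)
```

A mean hides outliers, so teams that adopt something like this may also want to look at the slowest runs and longest incidents individually.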

The trade-offs are real. CI/CD pipeline automation improves speed, repeatability, and governance, but it also introduces new operational dependencies. Organizations take on the maintenance burden of pipeline-as-code systems and become increasingly dependent on CI/CD platform availability. When the CI platform fails, delivery stops across the organization. Resilience planning for automation infrastructure becomes just as important as resilience planning for production systems.

What is CI/CD pipeline automation?

CI/CD pipeline automation is the practice of using software tools to automatically build, test, and deploy code changes through a defined series of stages, from code commit to production, without requiring manual intervention at each step. It reduces human error, speeds up delivery, and creates repeatable, auditable release processes.

Conclusion

CI/CD pipeline automation is no longer just a developer productivity upgrade. It has become a core operational requirement for organizations shipping software at scale. As AI-assisted development accelerates the volume of code entering pipelines, the cost of manual processes compounds faster than most organizations expect.

But pipeline orchestration alone is not enough. High-performing teams combine automated delivery with strong ownership, observability, governance, and standardized operating models. The goal is not simply to deploy faster. It is to create a delivery system that is reliable, repeatable, secure, and resilient under pressure.

Most teams move through a predictable progression: manual pipelines → basic automated CI → governed CI/CD → platform-led delivery with observability and rollback automation. Knowing where you are in that progression is the fastest way to identify what to fix next. The organizations moving fastest today are not necessarily the ones writing the most code. They are the ones building deployment processes capable of safely handling continuous change.