What You’ll Learn

You will walk through the four essential stages of a modern CI/CD pipeline - Source, Build, Test, and Deploy - and see how popular platforms such as GitHub Actions, Jenkins, and GitLab CI fit naturally into each step. The focus is on common, tool-agnostic patterns rather than a single technology, so the concepts apply whether you run a small service or a growing production system.

Short YAML snippets and real-world examples illustrate how teams automate builds, validate changes through testing, and deploy updates safely. Where relevant, the guide references industry research to anchor each step in proven practice. By the end, you will have a clear mental model of the CI/CD workflow, a starter configuration you can adapt to your own environment, and the confidence to expand the pipeline gradually without putting production at risk.

What Is a CI/CD Pipeline?

A CI/CD pipeline is an automated workflow that begins when code is committed to version control and continuously moves changes through build, test, and deployment stages. In simple terms, CI/CD (continuous integration and continuous delivery or deployment) is a structured process in which code is automatically integrated, validated, and delivered to production environments. The pipeline produces versioned artifacts, validates them through automated checks, and deploys them to staging or production environments in a repeatable and auditable way.

By reducing manual steps and enforcing consistent processes, a CI/CD pipeline shortens feedback loops, lowers deployment risk, and enables teams to release software more frequently and reliably.

Step 1. Put Everything in Source Control

A pipeline starts long before any container spins up. The first requirement is that all code, configuration, and even documentation live in a version-controlled repository, creating a single source of truth that enables automation, review, and traceability from the very first commit.

Begin by creating or selecting a Git repo.

- Push your application source.
- Commit Dockerfiles, Kubernetes manifests, Terraform scripts, or Ansible playbooks alongside the code.
- Add the pipeline definition where your platform expects it: a .github/workflows folder for GitHub Actions, a .gitlab-ci.yml file for GitLab CI, or a Jenkinsfile for Jenkins.

Keeping pipeline code next to application code removes the “works on my machine” excuse, lets reviewers see every change, and provides a single audit trail.

Once everything is in Git, create a protected main branch. Only reviewed pull requests (PRs) or merge requests (MRs) should reach it. This small guardrail stops flawed changes from entering the rest of the pipeline.

Git acts as the single source of truth, so the next stages can trigger automatically whenever the main branch changes.
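As a rough illustration of that trigger, a GitHub Actions workflow can subscribe to pushes and pull requests on the protected branch. This is a minimal sketch, assuming the branch is named main and the file is saved as .github/workflows/ci.yml; the workflow name and the placeholder job are hypothetical.

```yaml
# .github/workflows/ci.yml (hypothetical file name)
name: ci

on:
  push:
    branches: [main]        # runs after a reviewed change lands on main
  pull_request:
    branches: [main]        # runs on PRs that target main, before merge

jobs:
  placeholder:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      # Stand-in step; the build, test, and deploy stages would hang off this trigger.
      - run: echo "Triggered by ${{ github.event_name }} at ${{ github.sha }}"
```

Branch protection itself is configured in the repository settings rather than in the workflow file; the workflow only reacts to the events that protection allows through.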

For a deeper perspective on moving from ad-hoc chaos to a structured DevOps model, see From Code to Customer: Accelerating Innovation with Cloud DevOps

How Source Control Improves Deployment Reliability

A fintech startup moved its Terraform state files into the same GitLab project as the microservice code. When a developer proposed a schema change, the merge request showed both the SQL migration and the related infrastructure update, making reviews clearer and reducing failed deployments.

With the repository structured this way, each merge becomes a reliable trigger for automation. The codebase is ready to move beyond version control and into execution: a branch merge now kicks off the build stage, where commits are translated into consistent artifacts.

Step 2. Automate the Build

The build stage transforms raw source into a runnable artifact: a compiled binary, a JAR, or a container image. In mature pipelines, this stage also applies to infrastructure definitions. When Terraform, Pulumi, or CloudFormation files change, the pipeline should generate an infrastructure plan and surface it for review alongside application changes.
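One way to surface an infrastructure plan for review is a workflow that runs only when infrastructure files change. The sketch below assumes Terraform code lives in an infra/ directory and that backend credentials are already available to the runner; both are assumptions, as are the workflow and artifact names.

```yaml
# .github/workflows/infra-plan.yml (hypothetical file name)
name: infra-plan

on:
  pull_request:
    paths:
      - "infra/**"           # assumed location of the Terraform files

jobs:
  plan:
    runs-on: ubuntu-latest
    defaults:
      run:
        working-directory: infra
    steps:
      - uses: actions/checkout@v4
      - uses: hashicorp/setup-terraform@v3
      - run: terraform init -input=false
      # Write the plan to a file, then render it as text for reviewers.
      - run: terraform plan -input=false -no-color -out=tfplan
      - run: terraform show -no-color tfplan > plan.txt
      - uses: actions/upload-artifact@v4
        with:
          name: terraform-plan
          path: infra/plan.txt   # artifact paths are relative to the repo root
```

Reviewers can then read the plan next to the application diff before anything is applied.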

During this step, dependencies are resolved, code is compiled or packaged, and the output is prepared in a consistent, repeatable form that can be tested and deployed across environments.

Choose a runner:

- GitHub Actions: free minutes for public repos and easy marketplace actions.
- GitLab CI/CD: built-in Docker executor that spins up disposable containers.
- Jenkins: self-hosted freedom and plugins, still “a critical part of the IT infrastructure” for many teams, as Sacha Labourey notes.

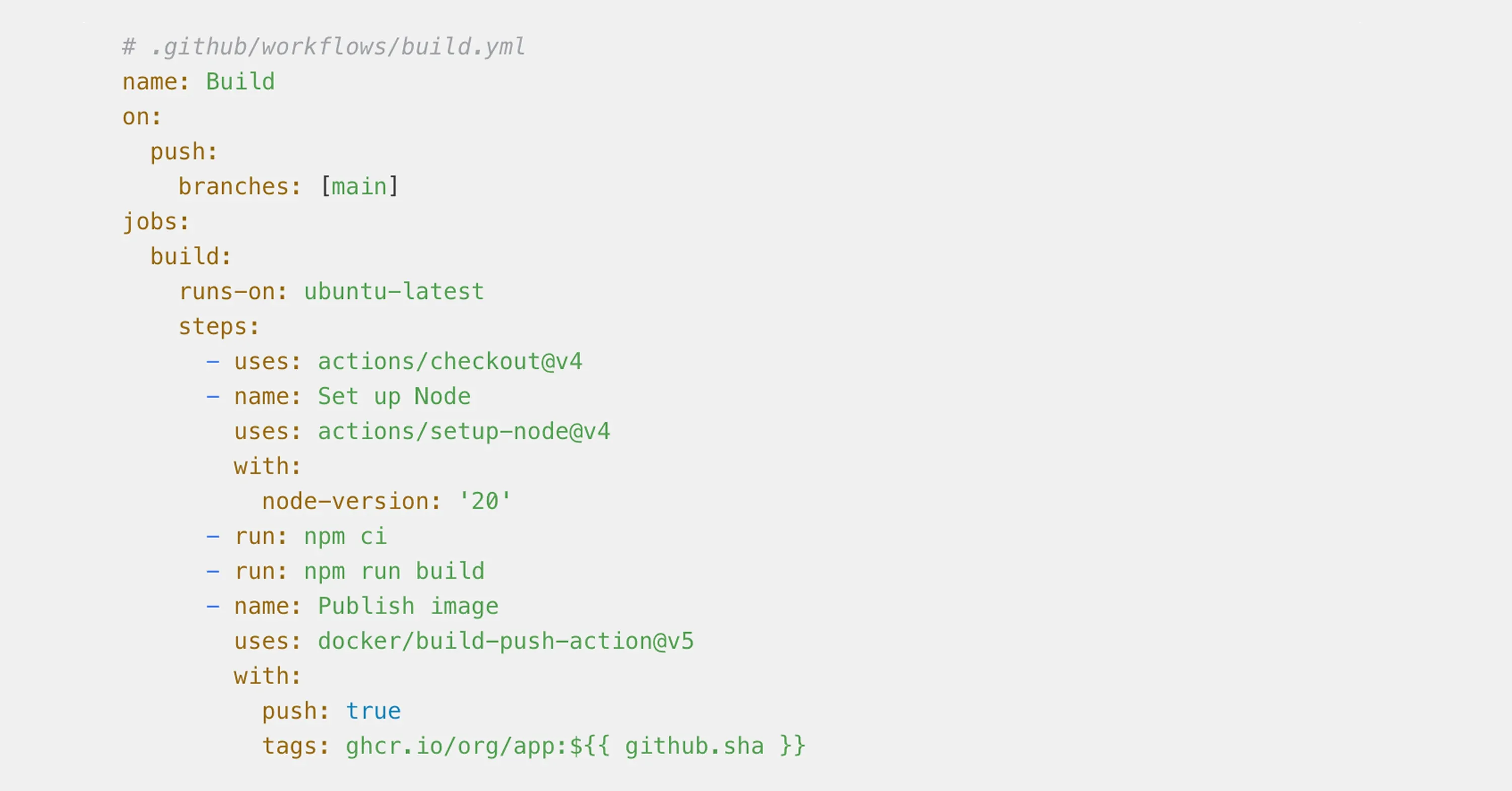

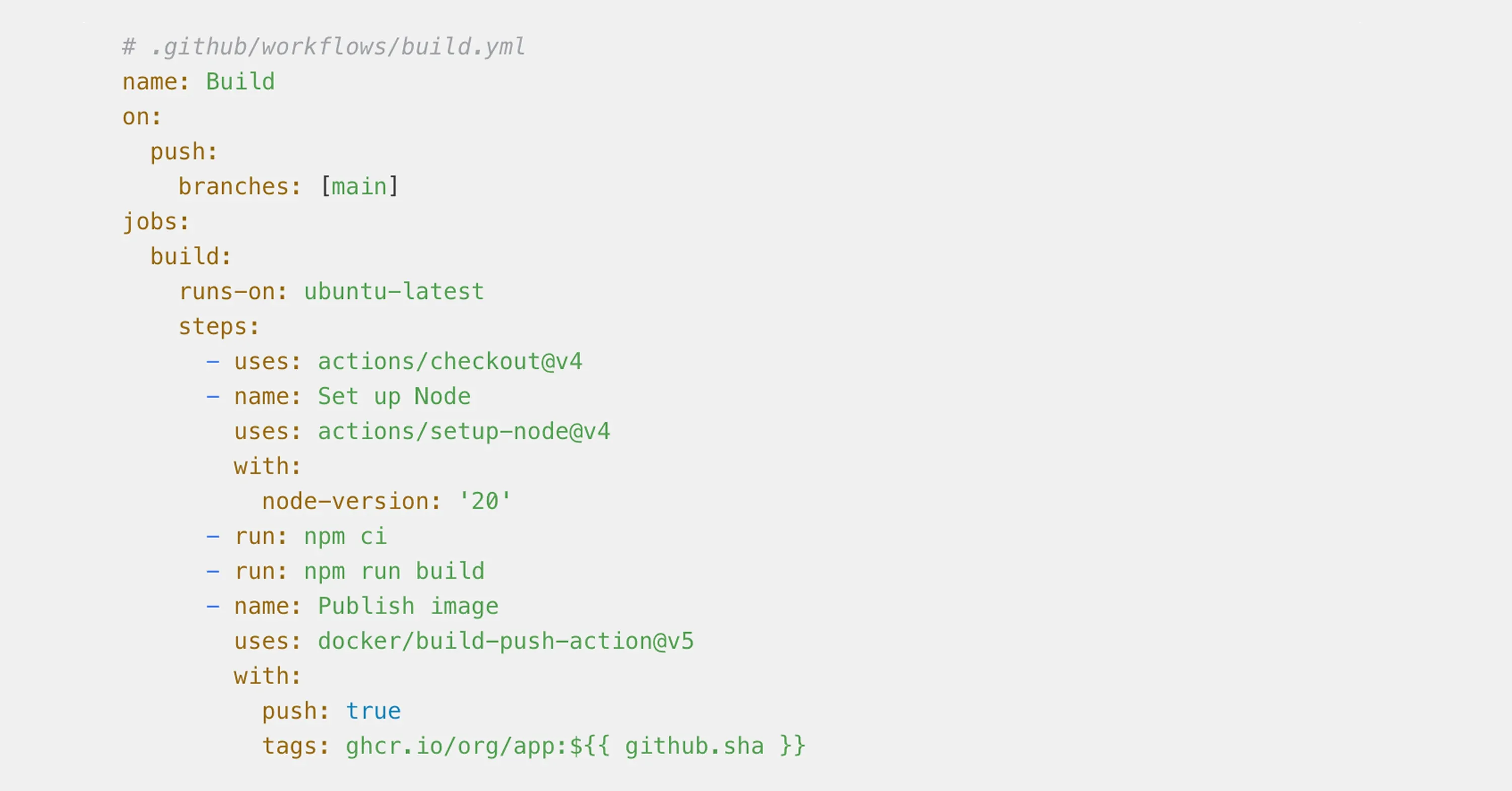

A minimal GitHub Actions workflow might look like this:

Example configuration for illustration purposes. Adapt to your stack and security requirements.
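As one possible shape for such a workflow, the sketch below builds a container image and pushes it to GitHub Container Registry, tagged with the commit SHA. The image name ghcr.io/example-org/example-app is a placeholder, and the sketch assumes a Dockerfile at the repository root.

```yaml
# .github/workflows/build.yml (hypothetical file name)
name: build

on:
  push:
    branches: [main]

permissions:
  contents: read
  packages: write            # lets GITHUB_TOKEN push to GitHub Container Registry

jobs:
  build:
    runs-on: ubuntu-latest   # a fresh hosted runner for every job
    steps:
      - uses: actions/checkout@v4
      - uses: docker/setup-buildx-action@v3

      - name: Log in to the registry
        uses: docker/login-action@v3
        with:
          registry: ghcr.io
          username: ${{ github.actor }}
          password: ${{ secrets.GITHUB_TOKEN }}   # credential comes from the platform, not the repo

      - name: Build and push the image, tagged with the commit SHA
        uses: docker/build-push-action@v6
        with:
          context: .
          push: true
          # Placeholder image name; registry paths must be lowercase.
          tags: ghcr.io/example-org/example-app:${{ github.sha }}
```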

Notice a few key patterns:

- Each job is isolated and reproducible, running in a fresh container or virtual machine.
- Secrets (such as registry credentials) live in the platform’s vault, never hard-coded.
- The artifact carries the commit SHA, linking binary to source.

End the build stage by saving the artifact into a single, well-defined source of truth, such as a container registry or artifact repository. This repository becomes the authoritative location for all build outputs and enforces access control, retention, and auditability.

Follow the immutable artifact principle: the exact same binary or image that passed CI tests and staging validation must be promoted to production without rebuilding. Promotion should happen by reference (tag or digest), not by rerunning the build, to avoid hidden drift between environments.

Many newcomers worry about performance. A zipped build folder may weigh several hundred MB, yet caching dependencies between runs can cut build time substantially, in some cases by about half.
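Dependency caching is usually a few lines of configuration. The sketch below assumes a Node.js project with a package-lock.json and a build script defined in package.json; the cache key scheme and workflow name are illustrative choices, not requirements.

```yaml
# .github/workflows/build-cached.yml (hypothetical file name)
name: build-cached

on:
  push:
    branches: [main]

jobs:
  build:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4

      - name: Restore the npm download cache
        uses: actions/cache@v4
        with:
          path: ~/.npm
          # The key changes only when the lockfile changes, so most runs hit the cache.
          key: npm-${{ runner.os }}-${{ hashFiles('**/package-lock.json') }}
          restore-keys: |
            npm-${{ runner.os }}-

      - run: npm ci
      - run: npm run build
```

Keying the cache on the lockfile hash keeps it correct: a dependency change produces a new key instead of reusing stale packages.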

For more about building robust automation layers and the business case for managed pipelines, see Cloud Services and DevOps

The build artifacts now exist, ready for inspection by the test stage.

How Build Caching Cuts CI Time at Scale

A gaming studio running large Unreal Engine builds was facing 45-minute build times on every commit, creating long feedback loops for developers. After migrating the workflow to GitLab CI and introducing a persistent build cache, average build duration dropped to 18 minutes.

That 27-minute reduction compounded quickly. With roughly 40 commits per day, each triggering a build, it added up to about 18 hours less cumulative pipeline wait per day (27 minutes × 40 builds), shortening iteration cycles and reducing context switching. Faster builds meant developers could validate changes sooner and move on, instead of waiting on pipelines to finish.

Step 3. Add Continuous Testing

Fast feedback is the soul of CI. The test stage executes unit tests, integration suites, linters, or security scans against the artifact built in step 2, ensuring that defects are detected early and only validated changes move further down the pipeline.

Organize tests into layers so failures surface as soon as possible:

- Unit tests in seconds
- Static analysis and linters in under two minutes
- Database or API integration tests in under five minutes
- End-to-end UI tests, often parallelized to stay under ten minutes

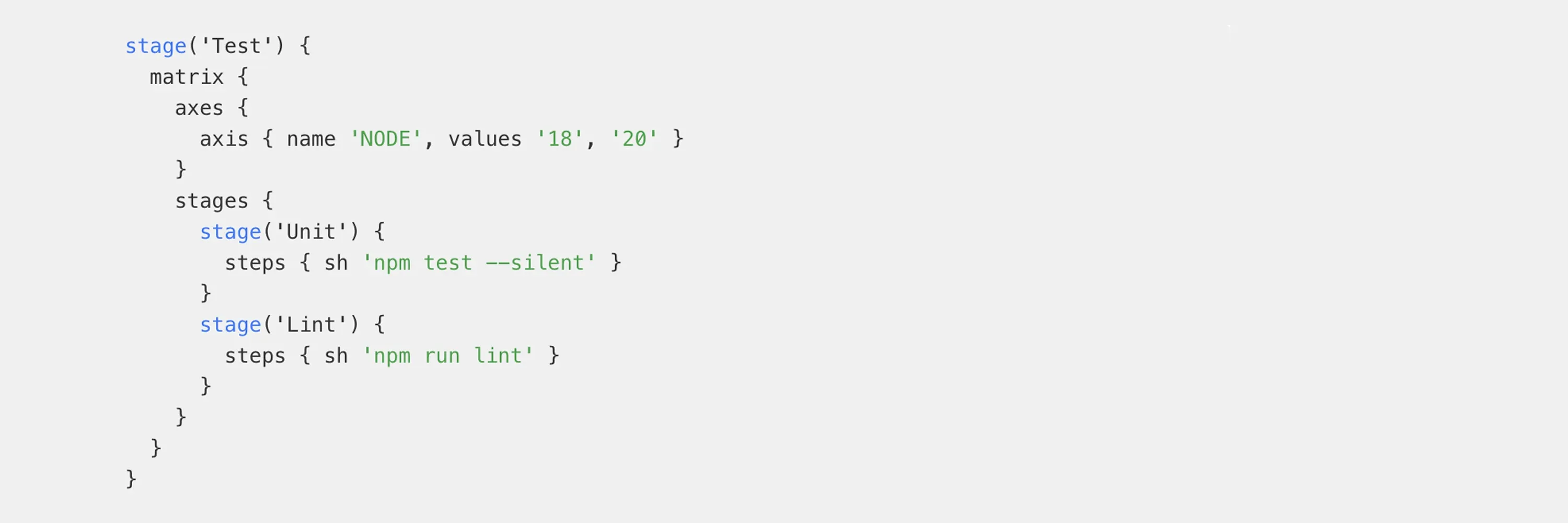

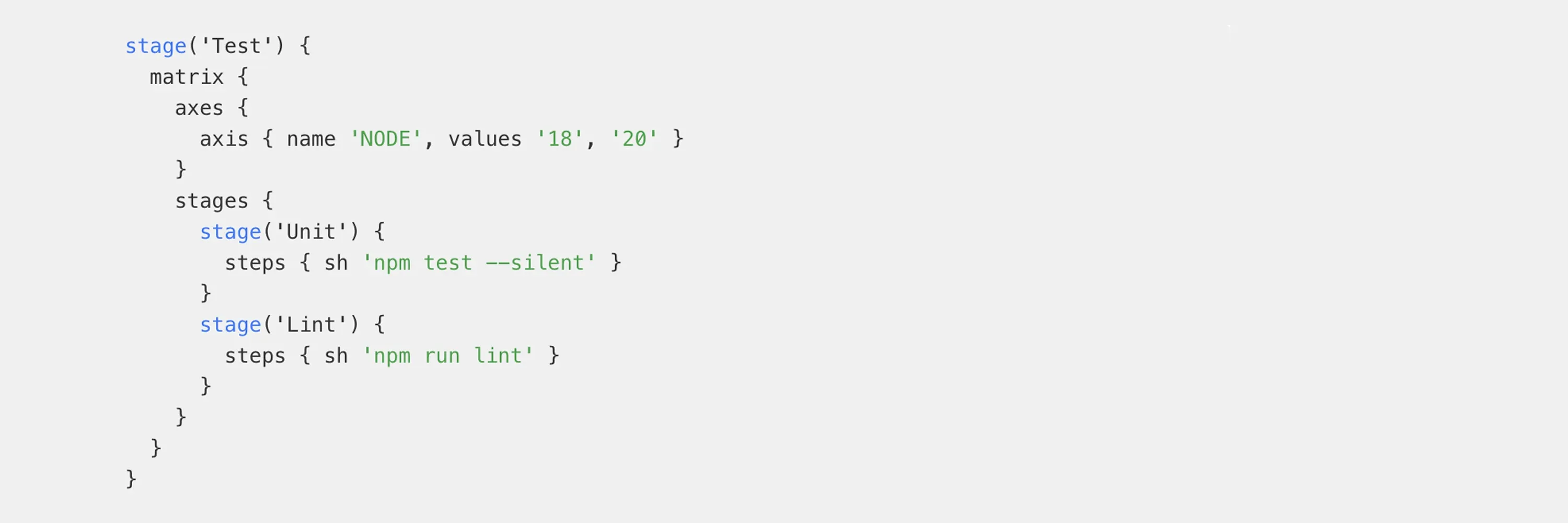

Here is a Jenkinsfile snippet that shows the split:

Example configuration for illustration purposes. Adapt to your stack and security requirements.
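The layered split described above can be sketched in workflow YAML as well. This version uses GitHub Actions and assumes a Makefile with test-unit, lint, test-integration, and test-e2e targets; those target names and the three-way sharding are hypothetical.

```yaml
# .github/workflows/tests.yml (hypothetical file name)
name: tests

on:
  pull_request:
    branches: [main]

jobs:
  unit:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - run: make test-unit            # fastest layer runs first

  lint:
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - run: make lint                 # runs in parallel with unit tests

  integration:
    needs: [unit, lint]                # skipped if an earlier layer fails
    runs-on: ubuntu-latest
    steps:
      - uses: actions/checkout@v4
      - run: make test-integration

  e2e:
    needs: integration
    runs-on: ubuntu-latest
    strategy:
      matrix:
        shard: [1, 2, 3]               # parallel shards keep wall-clock time down
    steps:
      - uses: actions/checkout@v4
      - run: make test-e2e SHARD=${{ matrix.shard }} TOTAL_SHARDS=3
```

The needs keyword is what gives the fail-fast behavior: slower, more expensive layers never start when a cheaper layer is red.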

For cloud pipelines, attach a coverage report or test summary artifact so pull-request reviewers see what passed.

Effective pipelines fail fast. A single red test cancels the downstream steps, sparing compute cost and developer time.

Data from the CD Foundation shows that 83% of developers are now involved in DevOps-related activities, yet the same report highlights tool sprawl. When 32% of organizations juggle two CI/CD tools and 9% use at least three, context switching can hide flaky tests. Keep all tests visible in one dashboard if possible.

If you want to discover practical workflows to automate testing and delivery, check out The Managed DevOps Cheat Sheet: how to cut App Development Time and Costs by 80%. A clean test result means you can deploy with confidence.

How Automated Testing Enables Safer Peak-Time Releases

An e-commerce retailer added mutation testing to its GitHub Actions pipeline to strengthen test coverage and expose hidden gaps in critical business logic. While the tests added a small amount of execution time, they significantly increased confidence in each release.

As a result, the team was able to shorten the manual QA window and ship bug fixes even during peak holiday traffic, without increasing rollback risk. With the artifact fully validated by the pipeline, it could now move forward with confidence. Deployment became the final, controlled step rather than a leap of faith.

Step 4. Configure Continuous Deployment

Continuous deployment (CD) promotes the artifact to staging or production through repeatable scripts, applying the same steps on every release to reduce manual errors and ensure consistent behavior across environments. When a release includes infrastructure changes, the pipeline should apply those updates first using Infrastructure as Code, then deploy the application onto the updated environment.

Pick a strategy:

- Rolling update: replace pods gradually, common in Kubernetes.
- Blue-Green: run two environments, then switch traffic.
- Canary: direct a fraction of users to the new version.
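To make the first strategy concrete, here is a minimal Kubernetes Deployment sketch configured for a rolling update. The names, replica count, port, health path, and image reference are all placeholders chosen for illustration.

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: example-app                  # placeholder name
spec:
  replicas: 4
  strategy:
    type: RollingUpdate
    rollingUpdate:
      maxSurge: 1                    # at most one extra pod during the rollout
      maxUnavailable: 0              # never drop below the desired replica count
  selector:
    matchLabels:
      app: example-app
  template:
    metadata:
      labels:
        app: example-app
    spec:
      containers:
        - name: app
          # Placeholder image; the pipeline would substitute the commit-SHA tag.
          image: registry.example.com/example-app:REPLACE_WITH_COMMIT_SHA
          readinessProbe:            # new pods take traffic only once they report ready
            httpGet:
              path: /healthz
              port: 8080
```

With maxUnavailable set to 0, Kubernetes replaces pods one at a time and waits for each readiness probe to pass before removing an old pod.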

A minimal GitLab .gitlab-ci.yml for Kubernetes could be:

Example configuration for illustration purposes. Adapt to your stack and security requirements.
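One possible deploy job is sketched below. It assumes cluster credentials reach the job through a KUBECONFIG file-type CI/CD variable, that the image was pushed earlier under the commit SHA, and that a Deployment named example-app with a container named app already exists. The kubectl image reference is a hypothetical internal image.

```yaml
# .gitlab-ci.yml (deploy portion only)
stages:
  - deploy

deploy_production:
  stage: deploy
  # Hypothetical image that ships kubectl; substitute one your team trusts.
  image: registry.example.com/tools/kubectl:stable
  environment:
    name: production
  rules:
    - if: $CI_COMMIT_BRANCH == $CI_DEFAULT_BRANCH
      when: manual                   # manual approval gate; remove to automate fully
  script:
    # Promote the already-built image by reference instead of rebuilding it.
    - kubectl set image deployment/example-app app="$CI_REGISTRY_IMAGE:$CI_COMMIT_SHA"
    # Fail the job if the rollout does not finish in time.
    - kubectl rollout status deployment/example-app --timeout=180s
```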

Many teams start with a manual approval gate, flipping to full automation later. This is especially important when a release includes database migrations. Schema changes should be version-controlled and executed as a dedicated pipeline step using migration tools such as Flyway or Liquibase, rather than applied manually.
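A dedicated migration step might look like the following GitLab CI sketch. It assumes migrations live in db/migrations, that DB_URL, DB_USER, and DB_PASSWORD are defined as masked CI/CD variables, and that the job image (a hypothetical internal one here) has the Flyway CLI on its PATH.

```yaml
# .gitlab-ci.yml (migration portion only)
stages:
  - migrate
  - deploy

migrate_database:
  stage: migrate                     # runs before the application is deployed
  # Hypothetical image with the Flyway CLI installed.
  image: registry.example.com/tools/flyway:stable
  rules:
    - if: $CI_COMMIT_BRANCH == $CI_DEFAULT_BRANCH
  script:
    # Apply pending versioned migrations from the repository.
    - flyway -url="$DB_URL" -user="$DB_USER" -password="$DB_PASSWORD" -locations="filesystem:db/migrations" migrate
```

Because the migration is its own stage, a failed schema change stops the pipeline before any application rollout begins.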

Because databases are harder to roll back than code, teams often design migrations to be backward-compatible, allowing new and old application versions to coexist safely during rollout.

The confidence you gain from stable tests will nudge you toward hands-free releases. Security should ride shotgun. Inline scans or signing tools such as Cosign can attach metadata, proving the artifact’s origin. Regulatory auditors love this paper trail.

Finally, add a rollback step. Rollbacks should be triggered automatically based on post-deployment monitoring, not manual intervention. By integrating tools such as Prometheus, Datadog, or New Relic, the pipeline can detect error-rate spikes or latency regressions immediately after release and automatically redeploy the previous stable version. Automation fails gracefully only when rollbacks are as scripted as deployments.

For more on release automation and deployment patterns, see Tech-Driven DevOps: How Automation is Changing Deployment.
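A scripted rollback can be sketched in GitLab CI as a job that runs only when an earlier stage fails. The verification script below is hypothetical: it stands in for whatever query your monitoring tool exposes and is assumed to exit non-zero when error rate or latency regresses. The Deployment name and kubectl image are placeholders too.

```yaml
# .gitlab-ci.yml (verification and rollback portion only)
stages:
  - deploy
  - verify
  - rollback

default:
  # Hypothetical image that ships kubectl and basic shell tools.
  image: registry.example.com/tools/kubectl:stable

verify_release:
  stage: verify
  script:
    # Hypothetical script; should exit non-zero on a metrics regression.
    - ./scripts/check_error_rate.sh

rollback_release:
  stage: rollback
  when: on_failure                   # runs only if a job in an earlier stage failed
  script:
    # Return the Deployment to its previous revision and wait for it to settle.
    - kubectl rollout undo deployment/example-app
    - kubectl rollout status deployment/example-app --timeout=180s
```

Note that on_failure also fires when the deploy job itself fails, which is usually the desired behavior for a rollback.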

A reminder from JetBrains: Teams can spend up to 50% of their effort maintaining CI/CD tooling instead of delivering new value. To avoid this, successful teams track pipeline performance using DORA metrics: deployment frequency, lead time for changes, change failure rate, and mean time to recovery (MTTR). These metrics turn CI/CD from a subjective feeling into a measurable delivery advantage.

Handling Database Changes Safely: The Expand-Migrate-Contract Pattern

To avoid downtime and irreversible failures, mature CI/CD pipelines treat database changes as a multi-step evolution rather than a single deploy-time action. A common and proven approach is the expand-migrate-contract pattern.

First, the schema is expanded in a backward-compatible way, for example by adding a new nullable column or table without removing existing structures. The application is then deployed with logic that can work with both the old and the new schema versions.

Next, a migration or backfill step runs as a controlled pipeline job, gradually moving or transforming existing data to the new structure. Only after the application has been running successfully on the new schema for some time does the final contract step remove obsolete columns or tables.

This approach allows old and new application versions to coexist during rollout, minimizes risk, and makes database changes predictable and auditable - a critical requirement for continuous delivery in production systems.

Conclusion

You now have a clear blueprint: commit everything to Git, version infrastructure and database changes alongside code, promote immutable artifacts through environments, and deploy with automated monitoring-driven rollbacks. Whether you implement this workflow using GitLab CI/CD, GitHub Actions, or another platform, the principles remain the same: let a runner build consistent artifacts, execute layered tests for instant feedback, and release through scripted deployments with rollback baked in. Adopt one stage at a time, measure confidence, and iterate. If maintaining infrastructure distracts from shipping features, a leading provider of managed IT services can host and monitor the pipeline for you, letting your team focus on code.

To explore how this model supports scalable delivery, see Managed IT Services

A well-tuned CI/CD pipeline is not optional anymore - it is the standard that keeps pace with user expectations and competitive markets. Start building yours today, one stage at a time, and spend your energy on features, not firefighting.